Why are we using dimension 0 for taking the mean of the images?

The text explains it pretty well. Not sure if this will do a good job explaining it differently:

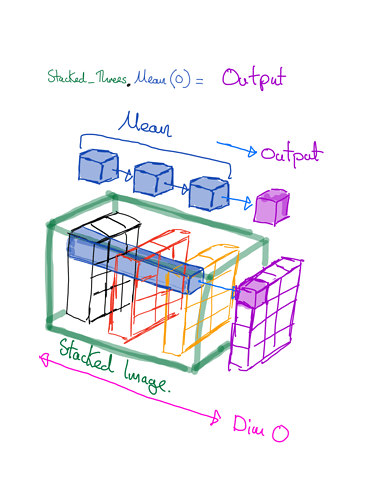

If I remember correctly, the context here is to show what a handwritten grayscale (single-channel) image of a “3” looks like on average. To do that, we stack a set of images of 3s into one tensor and take the mean over the first index, i.e. dim=0. The images are stacked along that first index: stacked_threes[0] is the first image, stacked_threes[2] is the third. Taking the mean over dim=0 therefore averages each pixel position across all the stacked images, which gives a single image showing what a three looks like on average.
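In case a tiny example helps, here is a minimal sketch of the same idea in PyTorch. The three 2x2 "images" with constant pixel values are made up purely for illustration (they are not the real digit data), and `mean3` and `row_means` are just names I picked:

```python
import torch

# Hypothetical stand-ins for real digit images: three 2x2 "images"
# filled with the constant pixel values 0, 3 and 6.
images = [torch.full((2, 2), v) for v in (0.0, 3.0, 6.0)]

# torch.stack adds a new leading dimension, so dim 0 indexes the images.
stacked_threes = torch.stack(images)
print(stacked_threes.shape)  # torch.Size([3, 2, 2])

# Averaging over dim 0 collapses the image index and keeps the pixel grid.
mean3 = stacked_threes.mean(dim=0)
print(mean3.shape)  # torch.Size([2, 2])
print(mean3)        # every pixel is (0 + 3 + 6) / 3 = 3.0

# By contrast, dim=1 averages over the rows *within* each image,
# so we still get one result per image instead of one average image.
row_means = stacked_threes.mean(dim=1)
print(row_means.shape)  # torch.Size([3, 2])
```

So the result of `mean(dim=0)` has the shape of a single image, while any other dimension would mix pixels inside each image rather than across images.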


Thanks for the clear explanation, I really appreciate it.