Hi everyone:

My specs:

fastai: 1.0.42

torch: 1.0.1.post2

CUDA: 10.0

Nvidia driver: 410.79

I get very unstable results when training networks with custom dataloaders.

Case 1.

I create a dataset by subclassing TensorDataset and just adding a hardcoded c property.

    class MyDataset(TensorDataset):
        @property
        def c(self):
            return 2

All the X data are images:

- loaded from PNG files to ndarrays

- normalized to 0-1 range

- type set to float32

- converted to torch tensor, also float32

All Y data are segmentation masks:

- loaded from PNG files to ndarrays

- only values 0 and 1 (2 classes)

- type set to uint8

- converted to torch tensors: int64 (long)
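The preprocessing steps listed above can be sketched roughly as follows. This is only an illustration, not my exact code: the helper names `prepare_image` and `prepare_mask` are hypothetical, the arrays are synthetic stand-ins for decoded PNG files, and the channels-first layout is an assumption. The resulting arrays could then be passed to `torch.from_numpy`, which keeps the NumPy dtype (float32 stays float32, int64 becomes long).

```python
import numpy as np

def prepare_image(img_u8):
    """Scale a uint8 image (H, W, C) to float32 in [0, 1], channels first (assumed layout)."""
    x = img_u8.astype(np.float32) / 255.0
    return np.transpose(x, (2, 0, 1))

def prepare_mask(mask_u8):
    """Turn a uint8 mask (H, W) holding only 0 and 1 into int64 class indices."""
    values = np.unique(mask_u8)
    if not np.isin(values, (0, 1)).all():
        # A mask stored as 0/255 would end up here and needs rescaling first.
        raise ValueError(f"unexpected mask values: {values}")
    return mask_u8.astype(np.int64)

# Synthetic stand-ins for decoded PNG files (hypothetical data).
rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(8, 8, 3), dtype=np.uint8)
mask = rng.integers(0, 2, size=(8, 8), dtype=np.uint8)

x = prepare_image(img)
y = prepare_mask(mask)
print(x.dtype, x.shape)
print(y.dtype, y.shape, np.unique(y))
```

Raising on unexpected mask values makes a 0/255 mask fail loudly instead of silently producing class indices the loss function cannot handle.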

I start the training in Jupyter (note: I had already used this Jupyter instance for some other training runs before starting this particular one).

The training protocol for all below cases is:

- 10 epochs

- lr: 1e-3

- learn.fit_one_cycle(10, slice(lr), pct_start=0.9)

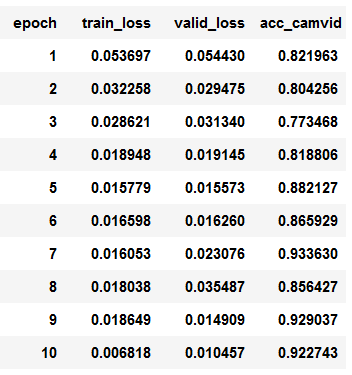

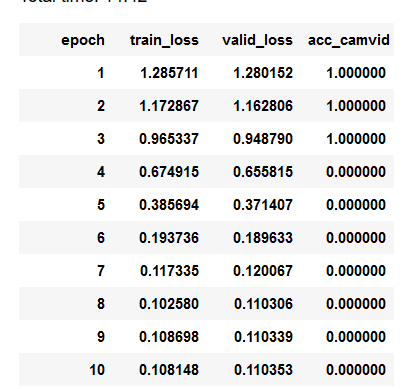

And here are the results:

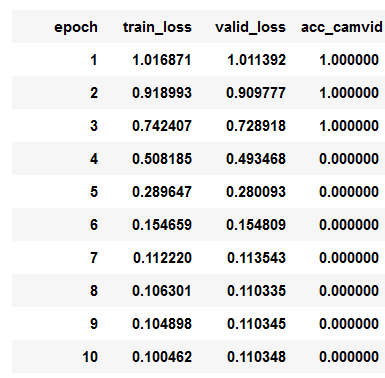

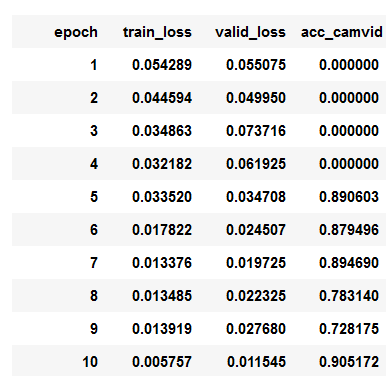

So now the comparison with exactly the same data and all other training parameters unchanged. The only difference is that the data are loaded with the following classes:

    class SegLabelListCustom(SegmentationLabelList):
        def open(self, fn): return open_mask(fn, div=True)

    class SegItemListCustom(ImageItemList):
        _label_cls = SegLabelListCustom

    src = (SegItemListCustom.from_folder(path)
           .split_by_folder()
           .label_from_func(get_y_fn, classes=codes))

As we can see, the loss starts from a much lower point and the accuracy climbs steadily.

Of course, this discrepancy could be caused by something like wrong preprocessing in my subclassed TensorDataset case, but I checked all that: the data is the same.
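For anyone who wants to repeat that kind of check, here is a minimal sketch of how two batches could be compared. The helpers `describe` and `same_data` are hypothetical names, and the arrays stand in for one batch taken from each pipeline (for example after calling `.cpu().numpy()` on the tensors). It compares shape, dtype and values, since a mismatch in any of them would mean the two loaders are not feeding the network the same thing.

```python
import numpy as np

def describe(name, arr):
    """Collect shape, dtype and basic value statistics for one array."""
    arr = np.asarray(arr)
    return {
        "name": name,
        "shape": arr.shape,
        "dtype": str(arr.dtype),
        "min": float(arr.min()),
        "max": float(arr.max()),
        "mean": float(arr.mean()),
    }

def same_data(a, b, atol=1e-6):
    """True only if shape and dtype match and all values agree within atol."""
    a, b = np.asarray(a), np.asarray(b)
    return a.shape == b.shape and a.dtype == b.dtype and bool(np.allclose(a, b, atol=atol))

# Hypothetical batches standing in for the two data pipelines.
batch_tensor_ds = np.linspace(0, 1, 12, dtype=np.float32).reshape(1, 3, 2, 2)
batch_fastai = batch_tensor_ds.copy()

print(describe("TensorDataset", batch_tensor_ds))
print(describe("fastai", batch_fastai))
print(same_data(batch_tensor_ds, batch_fastai))
```

Comparing dtype and shape in addition to values is deliberate: a mask of shape (N, 1, H, W) and one of shape (N, H, W) can hold identical values and still behave differently in a loss or metric.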

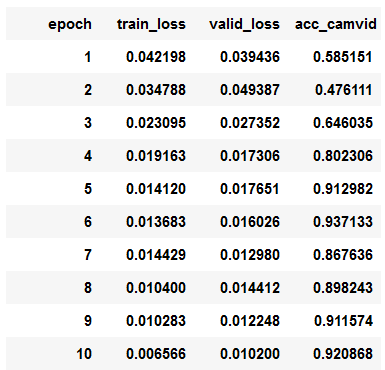

So I do the following: I close Jupyter Notebook (not just this one notebook, but the whole program) and open it again. I go straight to my TensorDataset notebook and train as described. I get these results:

Now it looks stable, and the predictions are also quite good. But why didn't it train at all in the first run?

Case 2.

This case is almost the same, but the key difference is that I save all preprocessed PNG files as NPY ndarray files and load them into a TensorDataset.

I do the first training as in Case 1. The behavior is the same.

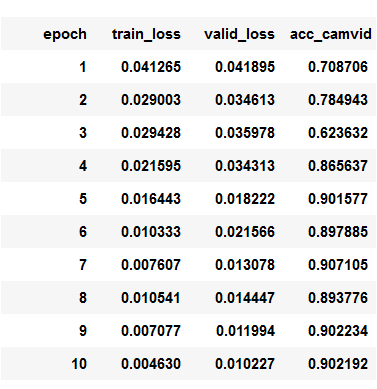

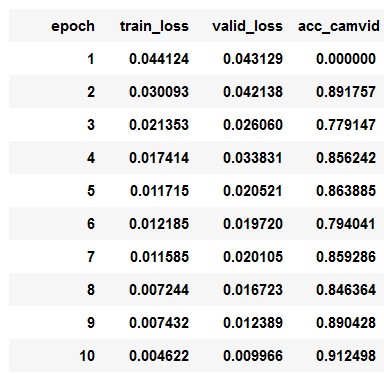

I shut down Jupyter Notebook, start it again, and do the training:

The behavior is similar to Case 1: the learner starts from a lower loss, the accuracy at the end is OK, and the predictions are good. But in the first 4 epochs the accuracy is 0. Why?
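One thing that can be checked independently of the training loop is the accuracy metric itself. The sketch below recomputes per-pixel accuracy by hand from raw model outputs. It is an assumption-laden illustration, not the metric I actually used: `pixel_accuracy` is a hypothetical helper, and it assumes outputs of shape (N, C, H, W) and integer targets of shape (N, H, W) or (N, 1, H, W).

```python
import numpy as np

def pixel_accuracy(logits, target):
    """Fraction of pixels whose highest-scoring class equals the target class.

    logits: float array of shape (N, C, H, W)
    target: integer array of shape (N, H, W) or (N, 1, H, W)
    """
    pred = logits.argmax(axis=1)      # (N, H, W) predicted class per pixel
    if target.ndim == 4:
        target = target[:, 0]         # drop the singleton channel axis
    if pred.shape != target.shape:
        raise ValueError(f"shape mismatch: {pred.shape} vs {target.shape}")
    return float((pred == target).mean())

# Hypothetical outputs: class 1 scores highest at every pixel.
logits = np.zeros((2, 2, 4, 4), dtype=np.float32)
logits[:, 1] = 1.0

print(pixel_accuracy(logits, np.ones((2, 4, 4), dtype=np.int64)))      # 1.0
print(pixel_accuracy(logits, np.zeros((2, 1, 4, 4), dtype=np.int64)))  # 0.0
```

If a hand-computed value like this disagrees with the number reported during training, the metric (or the target shape it receives) would be the first thing to look at rather than the model.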

As one more test, I restart the kernel and do another run. It looks more stable, but in the first epoch, despite a small loss, the accuracy is 0, which (judging by earlier runs) it shouldn't be.
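Since the runs differ between kernel restarts, fixing the random seeds before each run would at least make them comparable. A minimal sketch follows; `seed_everything` is a hypothetical helper, the torch import is guarded so the snippet also runs where torch is not installed, and I am not claiming that seeding explains the behavior described here.

```python
import random

import numpy as np

try:
    import torch
except ImportError:  # keep the sketch usable without torch
    torch = None

def seed_everything(seed=42):
    """Seed Python, NumPy and (if available) PyTorch random number generators."""
    random.seed(seed)
    np.random.seed(seed)
    if torch is not None:
        torch.manual_seed(seed)
        if torch.cuda.is_available():
            torch.cuda.manual_seed_all(seed)
        # Ask cuDNN for deterministic kernels instead of auto-tuned ones.
        torch.backends.cudnn.deterministic = True
        torch.backends.cudnn.benchmark = False

seed_everything(0)
first = (random.random(), float(np.random.rand()))
seed_everything(0)
second = (random.random(), float(np.random.rand()))
print(first == second)
```

Calling this at the top of the notebook, before the model and the dataloaders are created, gives two fresh kernels the same starting point. Dataloader worker processes and some GPU operations can still introduce small differences.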

I have tried repeatedly to debug this and find the cause of this behavior, but for now I'm out of options. Could anyone from fastai help me with this?