- return init_default(nn.Conv2d(ni, nf, kernel_size=ks, stride=stride, padding=padding, bias=bias), init)

-

- def conv2d_trans(ni:int, nf:int, ks:int=2, stride:int=2, padding:int=0, bias=False) -> nn.ConvTranspose2d:

- "Create `nn.ConvTranspose2d` layer."

- return nn.ConvTranspose2d(ni, nf, kernel_size=ks, stride=stride, padding=padding, bias=bias)

-
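The defaults of `conv2d_trans` (`ks=2`, `stride=2`, `padding=0`) double each spatial dimension. A minimal sketch of that arithmetic follows, assuming `dilation=1` and `output_padding=0`, which are the `nn.ConvTranspose2d` defaults. The helper name `conv_transpose_out_size` is hypothetical and not part of the code in this diff.

```python
def conv_transpose_out_size(n_in: int, ks: int = 2, stride: int = 2, padding: int = 0) -> int:
    """Output length along one spatial axis of a transposed convolution.

    Hypothetical helper for illustration only.
    Assumes dilation=1 and output_padding=0.
    """
    return (n_in - 1) * stride - 2 * padding + ks


# With the defaults used by conv2d_trans, a 16-pixel axis becomes 32 pixels.
doubled = conv_transpose_out_size(16)

# A 3-wide kernel with the same stride overshoots by one pixel.
overshoot = conv_transpose_out_size(16, ks=3)
```

Because `ks == stride` and there is no padding, the output windows tile the upsampled grid without overlapping, which is why the size comes out at exactly twice the input.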

- def relu(inplace:bool=False, leaky:float=None):

- "Return a relu activation, maybe `leaky` and `inplace`."

- return nn.LeakyReLU(inplace=inplace, negative_slope=leaky) if leaky is not None else nn.ReLU(inplace=inplace)

-
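The `conv_layer` helper that follows picks `padding = (ks-1)//2` for regular convolutions and `0` for transposed ones when `padding` is left as `None`. The sketch below illustrates what that default does to the output size of a stride-1 convolution. Both function names are hypothetical, and the size formula assumes `dilation=1`.

```python
def default_padding(ks: int, transpose: bool = False) -> int:
    """Mirror of the padding default in conv_layer (hypothetical helper)."""
    return 0 if transpose else (ks - 1) // 2


def conv_out_size(n_in: int, ks: int, stride: int = 1, padding: int = 0) -> int:
    """Output length along one axis of a regular convolution, assuming dilation=1."""
    return (n_in + 2 * padding - ks) // stride + 1


# Odd kernel sizes keep the spatial size unchanged at stride 1.
same_3 = conv_out_size(32, ks=3, padding=default_padding(3))
same_5 = conv_out_size(32, ks=5, padding=default_padding(5))

# An even kernel size loses one pixel under the same rule.
even_4 = conv_out_size(32, ks=4, padding=default_padding(4))
```

The default therefore gives "same" padding only for odd `ks`. For even kernel sizes, callers who need to preserve the size would have to pass `padding` explicitly or pad asymmetrically.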

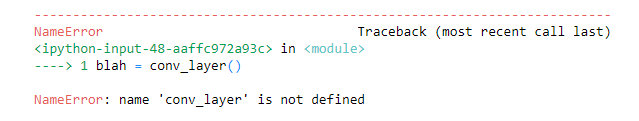

- def conv_layer(ni:int, nf:int, ks:int=3, stride:int=1, padding:int=None, bias:bool=None, is_1d:bool=False,

- norm_type:Optional[NormType]=NormType.Batch, use_activ:bool=True, leaky:float=None,

- transpose:bool=False, init:Callable=nn.init.kaiming_normal_, self_attention:bool=False):

- "Create a sequence of convolutional (`ni` to `nf`), ReLU (if `use_activ`) and batchnorm (if `bn`) layers."

- if padding is None: padding = (ks-1)//2 if not transpose else 0

- bn = norm_type in (NormType.Batch, NormType.BatchZero)

- if bias is None: bias = not bn

- conv_func = nn.ConvTranspose2d if transpose else nn.Conv1d if is_1d else nn.Conv2d

- conv = init_default(conv_func(ni, nf, kernel_size=ks, bias=bias, stride=stride, padding=padding), init)

- if norm_type==NormType.Weight: conv = weight_norm(conv)

- elif norm_type==NormType.Spectral: conv = spectral_norm(conv)