So I just finished Lesson 2 and tried it hands-on with some other datasets. For most of them, like dogs vs. cats, cars vs. planes and a few similar ones, I am getting very good results. But when I tried the same approach on the MNIST dataset, the results were bad. Any suggestions on what I might be doing wrong? On the training set it shows an accuracy of over 99%, but on the test set I barely reach 80%; I am usually in the 60 to 75% range. I tried substantially decreasing the learning rate for the middle layers and roughly doubling it for the first layers, and that didn't work.

How about validation? What are your scores there?

The accuracy on validation is around 80%.

What’s your architecture?

I am using resnet34.

Can someone please help me with it?

Can you upload your notebook as a GitHub gist?

It would be easier for us to look into the problem.

Could it be that the dataset the pretrained model was originally trained on has too little in common with handwritten characters?

Jeremy mentioned that this approach would not work as well on satellite images, because they are not the typical photos you would take with a camera. Perhaps handwritten characters are the same.

Just a thought.

P.S. Did you find a solution yet? I’d be interested to hear what you learned.

You might have overfit the model to the training data. Restart your model and train it until the training and validation losses are close, then try unfreezing the first few layers and training those. When that starts to overfit, try resizing the images and retraining. I was able to get 99.5% on the Kaggle competition using resnet34.
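A minimal sketch of spotting where overfitting starts, assuming you have recorded the per-epoch training and validation losses yourself. The classic signal is validation loss rising while training loss keeps falling. The helper `first_overfit_epoch` and its `patience` parameter are hypothetical, written for illustration; they are not part of fastai.

```python
def first_overfit_epoch(train_losses, val_losses, patience=2):
    """Hypothetical helper (not a fastai API).

    Returns the index of the last epoch before validation loss started rising
    for `patience` consecutive epochs while training loss kept falling,
    or None if that never happens.
    """
    rising = 0
    for i in range(1, len(val_losses)):
        val_up = val_losses[i] > val_losses[i - 1]
        train_down = train_losses[i] < train_losses[i - 1]
        if val_up and train_down:
            rising += 1
            if rising == patience:
                # Epoch where the divergence began, i.e. the best point to stop.
                return i - patience
        else:
            rising = 0  # streak broken, start counting again
    return None


train = [0.9, 0.5, 0.3, 0.2, 0.1]
val = [0.8, 0.5, 0.45, 0.5, 0.6]
print(first_overfit_epoch(train, val))  # 2
```

Here validation loss bottoms out at epoch 2 and then climbs while training loss keeps dropping, so epoch 2 is roughly where you would want to stop before unfreezing more layers.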

I have one beginner question: now that we know there are ten categories, how do I determine which probability corresponds to which value? How do I modify the code to handle more than two classes?

Take the class with the maximum probability and use its index as the prediction; in the case of MNIST that index is the digit itself, since the classes are the numbers 0-9.
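A small NumPy sketch of that idea, assuming the model gives one row of ten class scores per image and that the columns are ordered as the digits 0 to 9. The `probs` values below are made up for illustration.

```python
import numpy as np

# Made-up model output: one row per image, one column per digit class 0-9
# (assumes the classes are ordered "0", "1", ..., "9").
probs = np.array([
    [0.01, 0.02, 0.90, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01],  # looks like a 2
    [0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.05, 0.60, 0.03, 0.02],  # looks like a 7
])

# The predicted digit is the column index holding the highest probability.
preds = np.argmax(probs, axis=1)
print(preds)  # [2 7]

# If the model outputs log-probabilities instead, argmax picks the same
# column, because log is monotonically increasing.
log_preds = np.log(probs)
print(np.argmax(log_preds, axis=1))  # [2 7]
```

This works unchanged for any number of classes; nothing here is specific to the two-class case.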

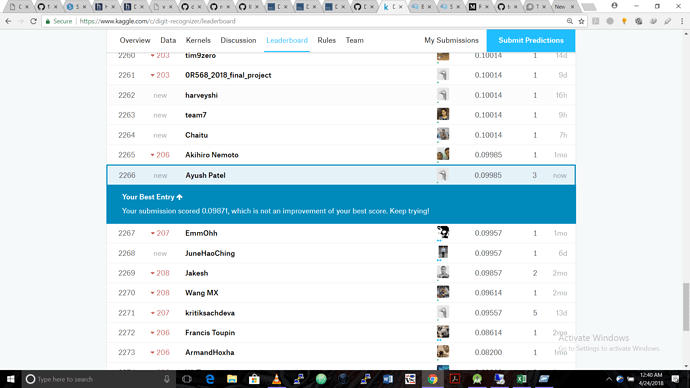

I tried some changes to the learning rate and got the training accuracy to 99%, but when I submitted to Kaggle it only gave me a score of 9%. Also, the validation loss and training loss don't differ by much. Here is a link to my notebook; could anyone please look into it? Also, for some datasets, setting `learn.precompute=False` actually makes the loss worse. Is that happening to anyone else too? Sorry for the trouble, I am just a beginner.
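A score of 9% is close to the 10% you would expect from random guessing among ten digits, so one possible cause (a guess, not a diagnosis of the notebook) is that the submitted labels don't line up with the right images, for example because the test files were read in string order ("1.png", "10.png", "100.png", ...) rather than numeric order. The sketch below builds a Kaggle Digit Recognizer style CSV with `ImageId,Label` columns. It assumes, hypothetically, that each test image was saved as `<ImageId>.png` using its 1-based row number in Kaggle's test.csv, and that row `i` of the predictions belongs to `fnames[i]`; `build_submission` is a made-up helper.

```python
import csv
import io

import numpy as np


def build_submission(fnames, log_preds):
    """Hypothetical helper: return CSV text with ImageId,Label rows.

    Assumes each file is named '<ImageId>.png' and that row i of
    `log_preds` (probabilities or log-probabilities) belongs to fnames[i].
    """
    # Submit the predicted digit, not the raw probabilities.
    labels = np.argmax(log_preds, axis=1)
    # Parse the numeric id from each file name; sorting by the integer
    # avoids the string order that puts '10.png' before '2.png'.
    ids = [int(name.rsplit("/", 1)[-1].split(".")[0]) for name in fnames]
    rows = sorted(zip(ids, labels))

    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["ImageId", "Label"])
    for image_id, label in rows:
        writer.writerow([image_id, int(label)])
    return out.getvalue()


# Toy example: files listed in string order, one-hot rows standing in for predictions.
fnames = ["test/10.png", "test/2.png", "test/1.png"]
fake_preds = np.eye(10)[[3, 0, 9]]
print(build_submission(fnames, fake_preds))
```

If your test images are named differently, only the id-parsing line needs to change; the important part is that each label is paired with the ImageId of the image it was predicted from.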

Could anyone please help?