After seeing the results in the paper Multi-Sample Dropout for Accelerated Training and Better Generalization, I wanted to test the technique with cyclic learning rate schedules, since the paper used a constant learning rate for its experiments.

The implementation is in the notebook Testing Multi Sample Dropout using fastai. I tested it on CIFAR-100.

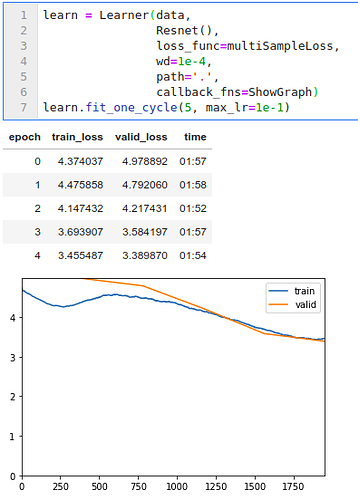

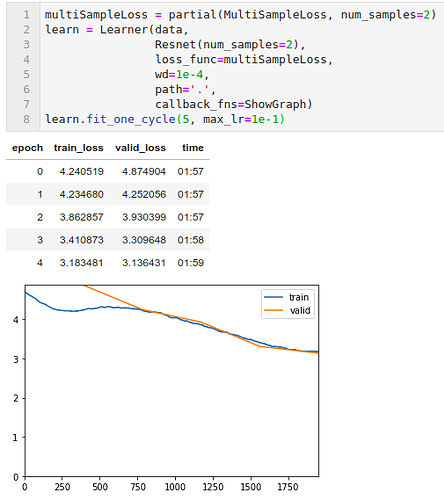

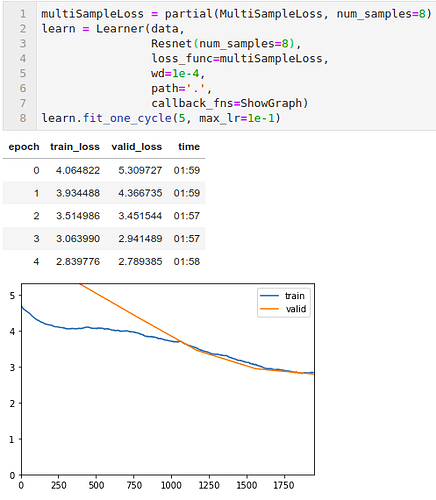

The results from the notebook:

- Baseline model with num_samples=1

- With num_samples=2

- With num_samples=8
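For anyone who doesn't want to open the notebook, here is a minimal sketch of what num_samples controls. This is not the code from the notebook. The names `MultiSampleDropoutHead` and `multi_sample_loss` are made up for illustration, and the feature size and dropout probability are assumptions. The idea, as I read the paper, is to draw several dropout masks on the same features, pass each one through a shared fully connected layer, and average the losses.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class MultiSampleDropoutHead(nn.Module):
    """Hypothetical classifier head: one shared linear layer, several dropout samples."""

    def __init__(self, in_features, num_classes, num_samples=8, p=0.5):
        super().__init__()
        self.num_samples = num_samples
        self.dropout = nn.Dropout(p)
        self.fc = nn.Linear(in_features, num_classes)  # weights shared by all samples

    def forward(self, x):
        if not self.training:
            # Dropout is the identity in eval mode, so a single pass is enough.
            return self.fc(x).unsqueeze(0)  # (1, batch, classes)
        # Every call to self.dropout draws a fresh random mask.
        samples = [self.fc(self.dropout(x)) for _ in range(self.num_samples)]
        return torch.stack(samples)  # (num_samples, batch, classes)


def multi_sample_loss(logits, target):
    """Average the cross-entropy loss over the dropout samples (first dimension)."""
    losses = [F.cross_entropy(sample_logits, target) for sample_logits in logits]
    return torch.stack(losses).mean()


# Usage with made-up sizes: 512 pooled features, 100 classes (CIFAR-100).
head = MultiSampleDropoutHead(in_features=512, num_classes=100, num_samples=8)
feats = torch.randn(4, 512)
target = torch.randint(0, 100, (4,))
loss = multi_sample_loss(head(feats), target)
loss.backward()
```

With num_samples=1 this reduces to an ordinary dropout head, which is why that setting serves as the baseline.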

Extra:

- To keep the tests consistent, I used the same hyperparameter values for every run as for the baseline model with num_samples=1.

- The times reported for each cycle are somewhat inconsistent, as I was streaming YouTube while the models trained. Still, on my Quadro M1200 4GB GPU I did not notice much of an increase in computation time.

- The paper recommends increasing the diversity between dropout samples, and this is where I left one thing out. The paper used zero-padding in the pooling operation to increase diversity. I don't use max-pooling in my final layers; I use adaptive pooling instead. Because of this, I did not use the paper's zero-padding technique.

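- Data bunch used:

```python
from fastai.vision import *  # fastai v1 API

# `path` is the dataset root containing the train/ and test/ folders
data = (ImageList.from_folder(path)
        .split_by_folder(valid='test')
        .label_from_folder()
        .transform(get_transforms(), size=(32,32))
        .databunch(bs=128, val_bs=512, num_workers=8)
        .normalize(cifar_stats))
```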

@jeremy have you tested multi-sample dropout? Also, should we use zero-padding for the pooling layers? I don't think that would work with adaptive pooling, but maybe we could use something like padding=4 and then take a random crop.
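To make the padding idea concrete, here is a rough sketch of what I have in mind. It is untested as a training trick, and `pad_and_random_crop` is a made-up helper, not something from the paper or from fastai. It zero-pads the feature map, takes a random crop of the original size, and then hands the result to adaptive pooling, so each dropout sample could see a slightly shifted view.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def pad_and_random_crop(feats, pad=4):
    """Zero-pad a (batch, channels, H, W) tensor, then crop back to (H, W) at a random offset."""
    _, _, h, w = feats.shape
    # F.pad takes (left, right, top, bottom) for the last two dimensions; default fill is zero.
    padded = F.pad(feats, (pad, pad, pad, pad))
    # One random offset per call, shared by the whole batch.
    top = torch.randint(0, 2 * pad + 1, (1,)).item()
    left = torch.randint(0, 2 * pad + 1, (1,)).item()
    return padded[:, :, top:top + h, left:left + w]


# Usage with made-up sizes: a batch of 2 feature maps, 64 channels, 8x8 spatial.
feats = torch.randn(2, 64, 8, 8)
pool = nn.AdaptiveAvgPool2d(1)
pooled = pool(pad_and_random_crop(feats, pad=4)).flatten(1)  # (2, 64)
```

On small final feature maps a padding of 4 may leave the crop mostly zeros, so a smaller value might be needed there.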