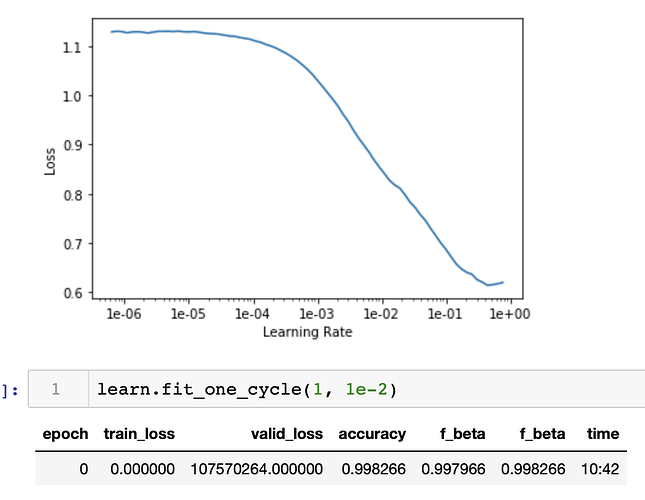

Hey everyone, I've been trying to figure out why this happens and whether it's even possible. I trained a neural network on tabular data, and it reaches extremely high accuracy and FBeta (both macro and micro). However, the validation loss is extremely large, while the training loss is zero. Has anyone run into this before? And how can I debug it better?

Found it. There was data leakage: some of the continuous variables contained the y-variable (the target).
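For anyone hitting the same symptom, one generic way to hunt for this kind of leak is to score each continuous feature *on its own* against the target. A feature that predicts the label almost perfectly without any model is a strong leakage suspect. Below is a minimal plain-Python sketch of that heuristic, assuming a classification target and features held as lists. The helper names `binned_purity` and `leakage_suspects`, the 10 quantile bins, and the 0.99 cutoff are hypothetical choices for illustration. They do not come from the original post or from any library, and this is not necessarily how the leak above was found.

```python
from collections import Counter, defaultdict


# Hypothetical helper (not from the original post or any library).
def binned_purity(values, labels, n_bins=10):
    """Score one feature by how well its quantile bins alone predict the label.

    Rows are sorted by feature value and split into equal-count bins; each row
    is "predicted" as the majority label of its bin. A continuous feature that
    scores near 1.0 on its own is a classic leakage suspect.
    """
    if not values or len(values) != len(labels):
        raise ValueError("values and labels must be non-empty and equal length")
    order = sorted(range(len(values)), key=lambda i: values[i])
    bin_size = -(-len(order) // n_bins)  # ceiling division: at most n_bins bins
    bins = defaultdict(Counter)
    for rank, row in enumerate(order):
        bins[rank // bin_size][labels[row]] += 1
    # Count rows that match the majority label of their own bin.
    correct = sum(counts.most_common(1)[0][1] for counts in bins.values())
    return correct / len(values)


# Hypothetical helper; the 0.99 threshold is an arbitrary illustrative cutoff.
def leakage_suspects(features, labels, n_bins=10, threshold=0.99):
    """Return (name, purity) for features whose single-feature purity >= threshold."""
    # Majority-class rate: any feature trivially scores at least this well.
    baseline = Counter(labels).most_common(1)[0][1] / len(labels)
    suspects = []
    for name, values in features.items():
        purity = binned_purity(values, labels, n_bins)
        if purity >= threshold and purity > baseline:
            suspects.append((name, purity))
    return sorted(suspects, key=lambda item: item[1], reverse=True)


# Toy data: "leaky" has the target baked into it, "noise" does not.
labels = [i % 2 for i in range(100)]
features = {
    "leaky": [y * 10 + i * 0.01 for i, y in enumerate(labels)],
    "noise": [(i * 37) % 100 for i in range(100)],
}
print(leakage_suspects(features, labels))  # → [('leaky', 1.0)]
```

A genuinely strong (non-leaky) feature can also score high, so treat flagged columns as candidates to inspect, for example by checking how each one is computed and whether it could be derived from the target. Running the check on the training split only keeps the validation data untouched.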
