Hi everyone,

I did exercise 2 from Andrew Ng’s ML course on Coursera, and now I’m trying to reproduce it in PyTorch.

I did the first exercise, a simple univariate linear regression, and it worked well. In exercise 2, a multivariate linear regression, gradient descent seems to work:

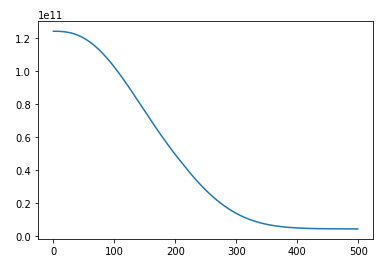

However, I can’t understand why the y-axis of the loss/epoch plot is so high (1e+11), even though the R² scores are good for both the train and test sets (you can see the plots at the bottom).
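To make the question concrete, here is a minimal sketch of one possible explanation: MSE is expressed in squared target units, while R² does not depend on the units at all. The data below is synthetic and hypothetical (targets on the order of 1e5, as if they were prices in dollars); it is not taken from my notebook, and the helper names `mse` and `r2` are only for illustration.

```python
import random

def mse(y_true, y_pred):
    """Mean squared error, in squared units of the target."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

random.seed(0)

# ASSUMPTION: hypothetical targets on the order of 1e5 (e.g. prices in dollars).
y = [random.uniform(200_000, 500_000) for _ in range(100)]
# ASSUMPTION: hypothetical predictions of a reasonably good model (noisy copies of y).
y_hat = [t + random.gauss(0, 30_000) for t in y]

# Loss of an untrained model that predicts 0 everywhere: roughly mean(y^2).
mse_initial = mse(y, [0.0] * len(y))

# Same data, expressed in units of 100k instead of single dollars.
scale = 1e5
y_s = [t / scale for t in y]
y_hat_s = [p / scale for p in y_hat]

mse_raw, mse_scaled = mse(y, y_hat), mse(y_s, y_hat_s)
r2_raw, r2_scaled = r2(y, y_hat), r2(y_s, y_hat_s)

print(f"initial MSE (predicting 0): {mse_initial:.3e}")
print(f"MSE raw: {mse_raw:.3e}   MSE scaled: {mse_scaled:.3e}")
print(f"R2 raw:  {r2_raw:.4f}   R2 scaled:  {r2_scaled:.4f}")
```

If this is what is happening, dividing the targets by a constant shrinks the MSE by that constant squared and leaves R² unchanged, so a huge loss value and a good R² would not contradict each other.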

Can you help me find the correct conceptual and technical answer to this question?

Here is the notebook: (Pytorch NN) Linear Regression II.ipynb

Best regards