Added: The complete notebook with all these functions in use is now online

Getting predictions for a model on a new dataset

There can be many reasons for getting predictions on another set of data (a dataframe that is neither the test nor the validation set).

The obvious one is when you simply want the predictions themselves for a new dataset.

The second group of reasons lies in the field of data exploration. If we want to do feature importance or partial dependence analysis, we need a tool to get predictions on a bunch of new (altered) dataframes. We can of course call learn.predict(row) for each, well… row of each dataframe, but that is a pretty slow process. In my setup, learn.predict in a for-loop over 200 rows takes 45+ seconds, whereas calculating the error for a whole dataframe of 10,000 rows with the standard tools takes less than a second.
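To make the "altered dataframes" idea concrete, here is a minimal sketch of permutation feature importance. It assumes a hypothetical `error_fn(df)` that returns a scalar error for a whole dataframe (the role calc_error plays in this post). The helper name `permutation_importance`, the toy data and the stand-in error function are all made up so that the snippet runs on its own.

```python
import numpy as np
import pandas as pd

def permutation_importance(df, columns, error_fn, seed=0):
    """Error increase caused by shuffling each column (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    base = error_fn(df)
    scores = {}
    for col in columns:
        altered = df.copy()
        # Shuffle a single column; every other column stays untouched.
        altered[col] = rng.permutation(altered[col].to_numpy())
        scores[col] = error_fn(altered) - base
    return scores

# Toy data: the target depends on `a` only, `b` is pure noise.
rng = np.random.default_rng(42)
toy = pd.DataFrame({"a": rng.normal(size=500), "b": rng.normal(size=500)})
toy["y"] = 3.0 * toy["a"]

def toy_error(df):
    # Stand-in for a real model: "predicts" 3 * a and reports RMSE against y.
    return float(np.sqrt(np.mean((3.0 * df["a"] - df["y"]) ** 2)))

scores = permutation_importance(toy, ["a", "b"], toy_error)
```

Each column needs one full pass over an altered copy of the dataframe, which is why a fast whole-dataframe prediction matters here.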

So I’ve accepted a challenge © and wrestled with this problem for 1.5 weeks (ok, to be frank, for half a dozen evenings, but it’s still much longer than I was expecting). Eventually, after discovering the hackery of an editable install and some print-debugging, I managed to work out where the categorification and normalization parameters are stored (the latter was muuuuch harder), as we have to apply these exact transformations to a new dataframe.
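Why those stored parameters matter can be shown without fastai at all. The sketch below is plain pandas, not the library's internals: it records the category lists and mean/std from a training frame and then applies exactly those to a new frame, so the codes and scaling match what a model would have seen. The names `fit_prep` and `apply_prep` are hypothetical. The `+ 1` shift mirrors the code in this post and makes values unseen in training map to 0.

```python
import numpy as np
import pandas as pd

def fit_prep(train, cat_names, cont_names):
    """Record the categories and normalization stats seen in training."""
    cats = {c: pd.Categorical(train[c]).categories for c in cat_names}
    means = {c: train[c].mean() for c in cont_names}
    stds = {c: train[c].std() for c in cont_names}
    return cats, means, stds

def apply_prep(df, prep):
    """Encode a new frame with the *training* categories and stats."""
    cats, means, stds = prep
    out = df.copy()
    for c, categories in cats.items():
        codes = pd.Categorical(out[c], categories=categories).codes
        # pandas gives -1 to values outside `categories`; shift so they become 0.
        out[c] = codes.astype(np.int64) + 1
    for c in means:
        out[c] = (out[c] - means[c]) / stds[c]
    return out

train = pd.DataFrame({"color": ["red", "blue", "red"], "size": [1.0, 2.0, 3.0]})
new = pd.DataFrame({"color": ["blue", "green"], "size": [2.0, 4.0]})

prep = fit_prep(train, cat_names=["color"], cont_names=["size"])
encoded = apply_prep(new, prep)
```

If the new frame were encoded from scratch instead, "blue" and "green" would get codes based on the new data alone and the model would silently receive the wrong embedding indices.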


So now I present a number of functions that can help you predict on a new dataframe almost as quickly as the original prediction on the training set.

The assumption is that you use the standard tabular learning cycle (e.g. procs=[FillMissing, Categorify, Normalize]).

The only trick here is to split the standard databunch creation process into two phases:

- First you apply all the functions before .databunch, e.g.:

      data_prep = (TabularList.from_df(df, path=path, cat_names=cat_vars, cont_names=cont_vars, procs=procs)
                   .split_by_idx(valid_idx)
                   .label_from_df(cols=dep_var, label_cls=FloatList, log=True))

  data_prep is now a valid LabelLists object that can be used to get the data processing parameters.

- Then you can apply .databunch as well, to get the DataBunch object (which is needed for the learning process itself), e.g. data = data_prep.databunch(bs=BS)

data_prep is what we do the split for. It will be used in our functions.

    from fastai.tabular import *  # assumes fastai v1

    def get_model_real_input(df:DataFrame, data_prep:LabelLists, bs:int=None)->Tensor:
        # NOTE: `learn` is not a parameter here; a global `learn` is used for the target device
        df_copy = df.copy()
        fill, catf, norm = None, None, None
        cats, conts = None, None
        is_alone = True if (len(df) == 1) else False
        proc = data_prep.get_processors()[0][0]
        if (is_alone):
            # duplicate the lone row, see the workaround below
            df_copy = df_copy.append(df_copy.iloc[0])
        # pick out the fitted preprocessors that were used for training
        for prc in proc.procs:
            if (type(prc) == FillMissing):
                fill = prc
            elif (type(prc) == Categorify):
                catf = prc
            elif (type(prc) == Normalize):
                norm = prc
        if (fill is not None):
            fill.apply_test(df_copy)
        if (catf is not None):
            catf.apply_test(df_copy)
            for c in catf.cat_names:
                df_copy[c] = (df_copy[c].cat.codes).astype(np.int64) + 1
            cats = df_copy[catf.cat_names].to_numpy()
        if (norm is not None):
            norm.apply_test(df_copy)
            conts = df_copy[norm.cont_names].to_numpy().astype('float32')
        # ugly workaround as apparently catf.apply_test doesn't work with lone row
        if (is_alone):
            xs = [torch.tensor([cats[0]], device=learn.data.device),
                  torch.tensor([conts[0]], device=learn.data.device)]
        else:
            if (bs is None):
                xs = [torch.tensor(cats, device=learn.data.device),
                      torch.tensor(conts, device=learn.data.device)]
            elif (bs > 0):
                xs = [list(chunks(l=torch.tensor(cats, device=learn.data.device), n=bs)),
                      list(chunks(l=torch.tensor(conts, device=learn.data.device), n=bs))]
        return xs

    def get_cust_preds(df:DataFrame, data_prep:LabelLists, learn:Learner, bs:int=None, parent=None)->Tensor:
        '''
        Using existing model to predict output (learn.model) on a new dataframe at once
        (learn.predict does it for one row which is pretty slow).
        data_prep is a LabelLists object which you can get if you split
        standard databunch creation process into two halves.
        First you apply all the functions before .databunch e.g.:
        data_prep = (TabularList.from_df(df, path=path, cat_names=cat_vars, cont_names=cont_vars, procs=procs)
                     .split_by_idx(valid_idx)
                     .label_from_df(cols=dep_var, label_cls=FloatList, log=True))
        data_prep is now a valid LabelLists object
        Then you can apply .databunch as well to get DataBunch object
        (that is needed for learning process itself) e.g.
        data = data_prep.databunch(bs=BS)
        '''
        xs = get_model_real_input(df=df, data_prep=data_prep, bs=bs)
        learn.model.eval()
        if (bs is None):
            return to_np(learn.model(x_cat=xs[0], x_cont=xs[1]))
        elif (bs > 0):
            # predict batch by batch and stitch the results together
            res = []
            for ca, co in zip(xs[0], xs[1]):
                res.append(to_np(learn.model(x_cat=ca, x_cont=co)))
            return np.concatenate(res, axis=0)

    def convert_dep_col(df:DataFrame, dep_col:AnyStr, learn:Learner, data_prep:LabelLists)->Tensor:
        '''
        Converts dataframe column, named "dependent column", into tensor,
        that can later be used to compare with predictions.
        Log will be applied if it was done in a training dataset
        '''
        actls = df[dep_col].T.to_numpy()[np.newaxis].T.astype('float32')
        actls = np.log(actls) if data_prep.log else actls
        return torch.tensor(actls, device=learn.data.device)

    def calc_loss(func:Callable, pred:Tensor, targ:Tensor, device=None)->Rank0Tensor:
        '''
        Calculates error from predictions and actuals with a given metrics function
        '''
        if (device is None):
            return func(pred, targ)
        else:
            return func(torch.tensor(pred, device=device), targ)

    def calc_error(df:DataFrame, data_prep:LabelLists, learn:Learner, dep_col:AnyStr, func:Callable, bs:int=None)->float:
        '''
        Wrapping function to calculate error for new dataframe on existing learner (learn.model)
        See following functions' docstrings for details
        '''
        preds = get_cust_preds(df=df, data_prep=data_prep, learn=learn, bs=bs)
        actls = convert_dep_col(df, dep_col, learn, data_prep)
        error = calc_loss(func, pred=preds, targ=actls, device=learn.data.device)
        return float(error)
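The `bs` branch boils down to a simple pattern: cut the inputs into slices of `bs` rows, run the model on each slice, and concatenate the results. Here is a stripped-down NumPy sketch of that pattern. `chunks` is reimplemented locally so the snippet runs standalone, and `toy_model` and `predict_in_batches` are made-up stand-ins, not part of the code above.

```python
import numpy as np

def chunks(l, n):
    """Yield successive n-sized slices of l."""
    for i in range(0, len(l), n):
        yield l[i:i + n]

def toy_model(x_cat, x_cont):
    # Stand-in for learn.model: one output per row, shape (rows, 1).
    return (x_cat.sum(axis=1) + x_cont.sum(axis=1)).reshape(-1, 1)

def predict_in_batches(cats, conts, bs):
    res = []
    for ca, co in zip(chunks(cats, bs), chunks(conts, bs)):
        res.append(toy_model(ca, co))
    return np.concatenate(res, axis=0)

cats = np.arange(20).reshape(10, 2)
conts = np.ones((10, 3), dtype="float32")

batched = predict_in_batches(cats, conts, bs=4)   # slices of 4, 4 and 2 rows
full = toy_model(cats, conts)                     # everything at once
```

In this toy setting both calls give the same array; batching only limits how many rows are processed at once, which helps when the whole dataframe does not fit in memory.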

The main function here is get_cust_preds.

We use it to predict on a new dataset. Its parameters are:

- df – New dataframe which you want to predict on

- data_prep – LabelLists object that can be obtained during the databunch creation process (see above)

- learn – learner with trained model inside

The function calc_error will help if your goal is to determine the error rather than the predictions themselves (useful when you want to explore your data with, for example, feature importance or partial dependence techniques).

The parameters are the same, except:

- dep_col – string with the column name of the dependent variable (df, obviously, should contain this column; in fact you can use the same dataframe for get_cust_preds too if you wish, as it uses only the categorical and continuous columns from training and ignores the rest)

- func – function used to calculate the error (our metric). This function (standard or written beforehand) should take 2 parameters, predictions and actuals, and return the error (float scalar).
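For illustration, a metric suitable for `func` could look like the sketch below. The name `rmse` is an assumption for this example, not something the code above defines. It uses only arithmetic operators and `.mean()`, which NumPy arrays and PyTorch tensors both provide, so it is demonstrated here on NumPy arrays.

```python
import numpy as np

def rmse(pred, targ):
    """Root mean squared error between predictions and actuals."""
    return ((pred - targ) ** 2).mean() ** 0.5

# Column vectors, one row per sample, like the model output.
pred = np.array([[1.0], [2.0], [4.0]], dtype="float32")
targ = np.array([[1.0], [2.0], [2.0]], dtype="float32")
error = float(rmse(pred, targ))
```

When the labels were created with log=True, both arguments arrive on the log scale, so this value is an RMSE of log-values rather than of the raw target.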

Hope you will find this useful for your own experiments.

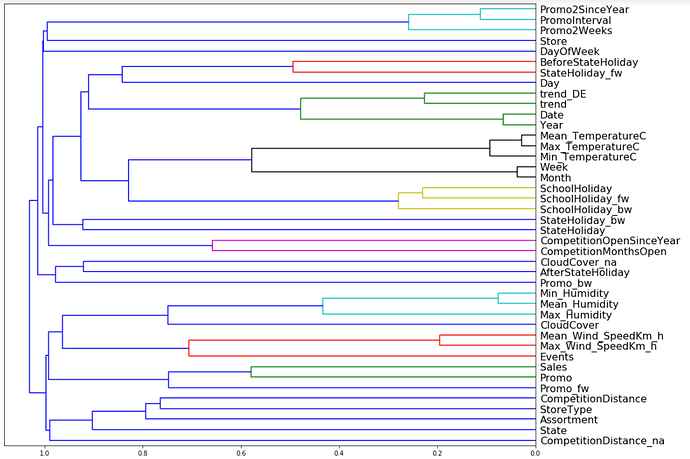

As for me, I plan to implement some interpretation techniques (partial dependence, feature importance, euclidean distance for embeddings and maybe some dendrograms) for tabular data.

PS I’ve updated the code to add batch support and do some refactoring.