I need to sequentially fine-tune the AWD_LSTM language model on two data sets: first I fine-tune on the first data set, save the model, and then continue fine-tuning on the second. I can't mix them together because the second resides within a secure environment and I can't bring new data into it. The data sets are quite similar (from the same domain), but their vocabularies are different.

First, I trained as usual:

    from fastai.text import *  # assuming the fastai v1 API used throughout

    # Build the language-model data from the first data set
    data_lm = (TextList.from_df(df_pretrain_data)
               .split_by_rand_pct(0.1)
               .label_for_lm()
               .databunch(bs=48))

    len(data_lm.vocab.itos)
    # -> 60000

    learn = language_model_learner(data_lm, AWD_LSTM, drop_mult=0.5)
    learn.fit_one_cycle(1, 1e-2, moms=(0.8,0.7))
    learn.unfreeze()
    learn.fit_one_cycle(10, 1e-3, moms=(0.8,0.7))

    # Save the full model, the encoder, and an exported learner
    learn.save('fine_tuned')
    learn.save_encoder('fine_tuned_enc')
    learn.export()

When I moved the saved model to the secure environment and tried to continue fine-tuning, I received the following error:

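    # Build the language-model data from the second data set
    data_lm_new = (TextList.from_df(df_pretrain_data_new)
                   .split_by_rand_pct(0.1)
                   .label_for_lm()
                   .databunch(bs=48))

    len(data_lm_new.vocab.itos)
    # -> 4224

    # The learner is built on the new data, then the saved weights are loaded
    learn_new = language_model_learner(data_lm_new, AWD_LSTM, drop_mult=0.5, pretrained=False).load('path/to/fine_tuned')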

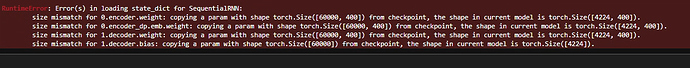

Error in loading state_dict for Sequential RNN:

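Obviously, the problem is that the two data sets have different vocabularies (60000 vs. 4224 tokens), so the saved weights do not fit the model built on the new data. What is the general solution to such a problem? I couldn't find one on the forum, I'm afraid. Thanks!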
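To make the mismatch concrete: the embedding matrix has one row per vocabulary entry, so weights saved with 60000 rows cannot be loaded into a model that expects 4224. One approach sometimes used in this situation is to remap the rows by token: tokens present in both vocabularies keep their trained row, and tokens that are new get some default row, here the mean of the old rows. The following is only a minimal sketch of that idea in plain Python lists, not fastai code. The function name `remap_embedding`, the toy vocabularies, and the toy weights are all hypothetical; in a real model the same remapping would presumably have to be applied to every tensor with a vocabulary-sized dimension (the embedding as well as the decoder weight and bias).

```python
from typing import List


def remap_embedding(old_rows: List[List[float]],
                    old_itos: List[str],
                    new_itos: List[str]) -> List[List[float]]:
    """Reorder embedding rows from the old vocabulary to the new one.

    Hypothetical helper: tokens found in the old vocabulary reuse their
    trained row; unseen tokens get the mean of all old rows.
    """
    dim = len(old_rows[0])
    # Mean row, used as the starting point for tokens the old model never saw
    mean_row = [sum(row[d] for row in old_rows) / len(old_rows) for d in range(dim)]
    # Map each old token string to its row index
    old_stoi = {tok: i for i, tok in enumerate(old_itos)}

    new_rows = []
    for tok in new_itos:
        idx = old_stoi.get(tok)
        if idx is not None:
            new_rows.append(list(old_rows[idx]))  # shared token: copy trained row
        else:
            new_rows.append(list(mean_row))       # new token: mean initialisation
    return new_rows


# Toy data standing in for the 60000-token and 4224-token vocabularies
old_itos = ["xxunk", "the", "patient", "dose"]
old_rows = [[0.0, 0.0], [1.0, 2.0], [3.0, 4.0], [4.0, 2.0]]
new_itos = ["xxunk", "dose", "ward"]

new_rows = remap_embedding(old_rows, old_itos, new_itos)
print(len(new_rows))  # one row per token in the new vocabulary
```

The result has exactly as many rows as the new vocabulary, so it could be loaded into a model built on the second data set. Note that this only needs the old vocabulary's token list (`itos`) alongside the saved weights, not the first data set itself.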