Has anyone tried to reimplement the seq2seq translation code using the material presented in v3 of the course? All I can find seems to be for fastai v0.7 or so…

See the NLP course; there is a seq2seq notebook at github.com/fastai/course-nlp

It uses fastai 1.0

Yes, https://github.com/fastai/course-nlp/blob/master/7-seq2seq-translation.ipynb is what I checked before posting. But it doesn’t use the modularized preprocessing that was used in https://github.com/fastai/course-v3/blob/master/nbs/dl2/12_text.ipynb

I find the latter much cleaner.

Still cannot reproduce.

Can someone take a look at https://github.com/akhavr/fastai-lectures-v3-seq2seq/blob/master/seq2seq.ipynb ? I might be missing something stupid, since the model doesn’t train at all.

I strongly suspect that something is wrong with the optimization routines in the v3 course.

I’ve given up, for now, on trying to reproduce seq2seq on fastai-dev v2.

I’m working on major updates to this including transformer implementations and huggingface integration.

Is there a “work in progress” snapshot somewhere? Right now it uses fastai v1, and there’s already a seq2seq fastai v1 notebook here: https://github.com/fastai/course-nlp/blob/master/7-seq2seq-translation.ipynb

Not at the moment.

Well, I can’t reproduce the seq2seq results with fastai-dev either.

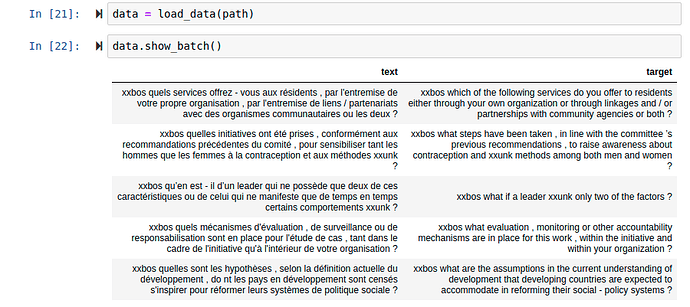

I tried to reproduce the results with the notebooks as well. However, I have problems with the data, even before training. When I look at the output of `data.show_batch()`, I see that the data is repeated like this:

|text|target|
|---|---|
|xxbos pourquoi avons - nous réussi à atteindre l’indépendance de la xxunk alors que d’autres ont encore de la difficulté à comprendre ce concept ?|xxbos why is it that we have achieved judicial independence when others have a hard time even understanding it ?|
|xxbos pourquoi avons - nous réussi à atteindre l’indépendance de la xxunk alors que d’autres ont encore de la difficulté à comprendre ce concept ?|xxbos why is it that we have achieved judicial independence when others have a hard time even understanding it ?|

(…)

Do you have the same issue? I even tried other ways of loading the data, from CSV and from a folder, and I still get repeated results. I’m using fastai version 1.0.59.
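To narrow this down, one thing that could be checked is whether the repetition is already present in the raw sentence pairs or only appears after loading. Below is a minimal sketch in plain Python (it does not use any fastai API); `find_repeated_pairs` and `sample_pairs` are hypothetical names made up for illustration, and the sample data just stands in for the real (French, English) pairs.

```python
from collections import Counter


def find_repeated_pairs(pairs):
    """Return {(source, target): count} for every pair that occurs more than once."""
    counts = Counter(pairs)
    return {pair: n for pair, n in counts.items() if n > 1}


# Hypothetical sample standing in for the raw (French, English) pairs
# read from the CSV or folder before they are handed to fastai.
sample_pairs = [
    ("pourquoi ?", "why ?"),
    ("pourquoi ?", "why ?"),
    ("bonjour", "hello"),
]

repeated = find_repeated_pairs(sample_pairs)
print(repeated)
```

If a check like this finds no repeated pairs in the real data, the duplication presumably comes from somewhere in the loading or display step rather than from the file itself.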

Do you have any ideas for how to fix this?