Hello!

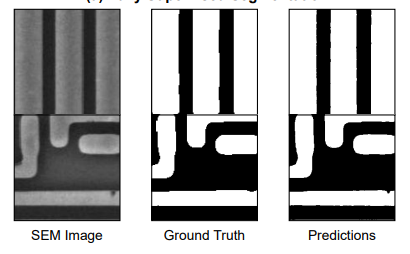

I am working on the task of semantic segmentation of simple circuitry images.

It is a simple one-class segmentation problem.

I have tried to replicate the training loop in pure PyTorch, keeping the following things the same:

- Dataset (incl. train,val,test splits)

- Augmentations applied

- Optimizers (I used fastai’s implementation of Adam, but with the same hyperparameters)

- Schedulers

- Network architecture (Custom Unet in Pure PyTorch, random initialization)

- Training hyperparameters (bs,epochs,lr,etc)

- Loss functions

For fastai, I called the fit() function whilst for PyTorch, I did the standard iteration loop through n_epochs.

However, performing multiple runs across different seeds, the performance of the fastai trained model (tested on the same test set, with same testing script) is consistently 0.5-1% better in terms of IoU. This is also true for the highest validation IoU obtained when training.

Is there something that is happening under the hood in fastai’s optimization process that I’m missing out here?

Any insight would be well appreciated, thanks!