I am currently training a custom model on the Imagenette dataset and I am running into an issue that I don’t fully understand.

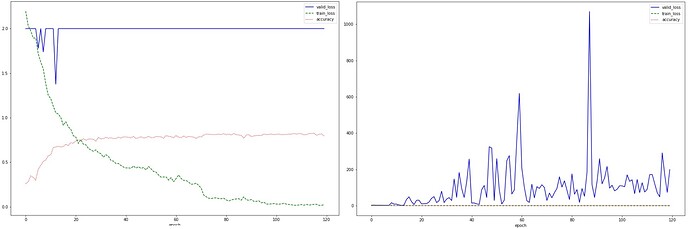

Even though the model appears to learn correctly and reaches an accuracy of about 82%, the validation loss explodes (the left image is just a zoomed-in view).

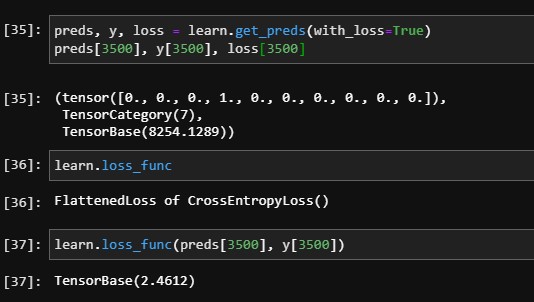

I realized that this seems to happen because, although the model predicts most of the images correctly, a few are misclassified with a really high loss:

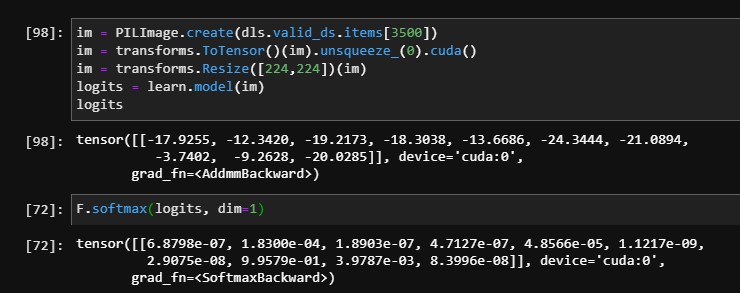
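To illustrate how a handful of such samples can dominate the average, here is a minimal sketch with made-up numbers. The per-sample losses below are hypothetical and are not taken from my run; they only show that the mean validation loss can explode while accuracy stays at 82%.

```python
# Hypothetical per-sample validation losses for 100 images (not real data).
correct_losses = [0.05] * 82               # correct, fairly confident predictions
wrong_losses = [1.5] * 16 + [400.0] * 2    # 18 wrong predictions, two of them extreme

losses = correct_losses + wrong_losses

accuracy = len(correct_losses) / len(losses)
mean_loss = sum(losses) / len(losses)

# Same average, but with the two extreme samples left out.
trimmed = [loss for loss in losses if loss < 100.0]
mean_without_outliers = sum(trimmed) / len(trimmed)

print(f"accuracy:              {accuracy:.2f}")
print(f"mean loss:             {mean_loss:.3f}")
print(f"mean without outliers: {mean_without_outliers:.3f}")
```

Accuracy only counts whether a prediction is right or wrong, whereas the mean loss is unbounded per sample, so two extreme samples are enough to move it by an order of magnitude.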

I ran the top-loss image through the model separately, but I can’t reproduce how such a high loss could be calculated.

My guess was that the loss is calculated from the logits, before softmax is applied.
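To make that guess concrete, here is a minimal sketch of cross-entropy computed directly from raw logits, which is what, for example, PyTorch's `nn.CrossEntropyLoss` expects as input. Whether my training loop uses exactly this loss is an assumption; the function name `cross_entropy_from_logits` and the 3-class logits are hypothetical and chosen only for illustration.

```python
import math

def cross_entropy_from_logits(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    m = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

# Hypothetical logits; the true class is index 1 in both cases,
# but the model prefers class 0.
moderate = [2.0, 0.5, -1.0]
extreme = [250.0, 0.5, -1.0]

loss_moderate_wrong = cross_entropy_from_logits(moderate, target=1)
loss_extreme_wrong = cross_entropy_from_logits(extreme, target=1)

print(loss_moderate_wrong)  # a little under 2
print(loss_extreme_wrong)   # about 249.5, the gap between the top logit and the target logit
```

When one wrong logit is far larger than the rest, the loss is roughly the difference between that logit and the target logit, so the loss grows linearly with the logit magnitude and has no upper bound.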

When I load the image from its path as a tensor and calculate the logits, everything appears to work correctly.

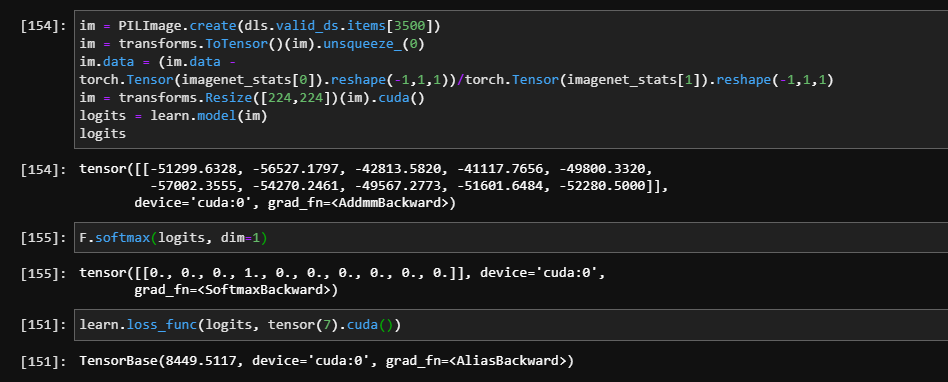

I realized, though, that I was now missing my data augmentation. Once I also normalize the input image by hand, I can finally reproduce the problem.
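For reference, normalizing by hand can be sketched as below. This assumes the widely used ImageNet per-channel mean and standard deviation applied to inputs scaled to [0, 1]; my actual statistics may differ, and `normalize_pixel` is a hypothetical helper, not part of any library.

```python
# Assumption: ImageNet per-channel statistics (RGB order, inputs in [0, 1]).
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def normalize_pixel(rgb, mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Normalize one RGB pixel given as 0-255 integers."""
    return tuple((c / 255.0 - m) / s for c, m, s in zip(rgb, mean, std))

green_pixel = normalize_pixel((0, 255, 0))      # hypothetical saturated green pixel
gray_pixel = normalize_pixel((128, 128, 128))   # mid-gray for comparison

print("green:", [round(v, 2) for v in green_pixel])
print("gray: ", [round(v, 2) for v in gray_pixel])
```

A saturated green pixel lands more than two standard deviations from the mean in every channel, while mid-gray stays close to zero. This does not by itself explain the exploding logits, but it shows that strongly green images sit at the edge of the normalized input range.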

Apparently, the logits blow up for green images, which leads to those large losses. Why does that happen, and is there a way to work around it?