In a GAN that generates faces, for example, the input is random noise and the output is a randomly generated face image.

However, I am contemplating an idea where the input isn’t random noise. Say I have many blank map images, each covering an area of about 5 square miles at a known lat/long, along with map images of the same areas that carry a layer of colored dots, where the color of each dot denotes the type of well at that spot (a producing oil well, a dry hole, etc.). I would like to train a GAN whose input is the blank image and whose desired output is an image showing where future oil wells should be sited, marked by dots of the appropriate color. Given a large number of such blank/annotated image pairs, I theorize that the generator should learn some aspects of the subsurface geology as a function of location and be able to predict candidate areas to drill (or areas to avoid, as the case may be).
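To make the paired-image setup concrete, here is a minimal sketch of how the annotated side of one training pair could be represented as a grid of class labels rather than colored pixels. The class codes, the `rasterize_wells` helper, the tile bounds, and the well records are all hypothetical and made up for illustration; they are not taken from the attached images.

```python
# Hypothetical class codes; the real legend of dot colors is an assumption here.
BACKGROUND, PRODUCING, DRY_HOLE = 0, 1, 2


def rasterize_wells(wells, bounds, size=64):
    """Turn (lat, lon, class_id) records into a size x size grid of class ids.

    bounds is (lat_min, lat_max, lon_min, lon_max) for one map tile.
    Row 0 is the northern edge of the tile, column 0 the western edge.
    """
    lat_min, lat_max, lon_min, lon_max = bounds
    grid = [[BACKGROUND] * size for _ in range(size)]
    for lat, lon, class_id in wells:
        if not (lat_min <= lat <= lat_max and lon_min <= lon <= lon_max):
            continue  # this well lies outside the tile
        # Scale the position into pixel indices, clamping the far edge.
        row = min(int((lat_max - lat) / (lat_max - lat_min) * size), size - 1)
        col = min(int((lon - lon_min) / (lon_max - lon_min) * size), size - 1)
        grid[row][col] = class_id
    return grid


# Made-up tile and wells, chosen only so the arithmetic is easy to follow.
tile_bounds = (31.0, 32.0, -103.0, -102.0)
wells = [
    (31.875, -102.875, PRODUCING),  # near the north-west corner
    (31.125, -102.125, DRY_HOLE),   # near the south-east corner
    (33.0, -102.5, PRODUCING),      # outside the tile, ignored
]
target = rasterize_wells(wells, tile_bounds, size=4)
for row in target:
    print(row)
```

A label grid like this would be the target paired with the blank tile for the same bounds. Whether a network can learn anything geological from such pairs is exactly the open question.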

I have these questions:

- Does this idea even make sense, especially the part about a GAN learning the properties of an oil field as a function of location? Am I overestimating the capabilities of GANs?

- Is it even necessary to supply a blank map image? Could I just input a lat/long pair to the generator? Is one approach better than the other for accuracy?
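For the lat/long variant, a conditional GAN commonly concatenates the conditioning information with a noise vector instead of dropping the noise entirely. Below is a rough sketch of what such a coordinate-only generator input might look like. The `make_generator_input` name, the scaling by 90 and 180 degrees, and the noise size are assumptions for illustration only.

```python
import random


def make_generator_input(lat, lon, noise_dim=8, rng=None):
    """Build one generator input: scaled coordinates followed by noise.

    Latitude and longitude are scaled to roughly [-1, 1] so they sit on a
    similar scale to the standard-normal noise values.
    """
    rng = rng or random.Random()
    coords = [lat / 90.0, lon / 180.0]
    noise = [rng.gauss(0.0, 1.0) for _ in range(noise_dim)]
    return coords + noise


# Fixed seed so the example is repeatable; the coordinates are made up.
z = make_generator_input(45.0, -90.0, noise_dim=8, rng=random.Random(0))
print(len(z), z[:2])
```

With this input the generator would have to memorize everything about a location from two numbers, whereas an image input can carry extra spatial context such as terrain or roads.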

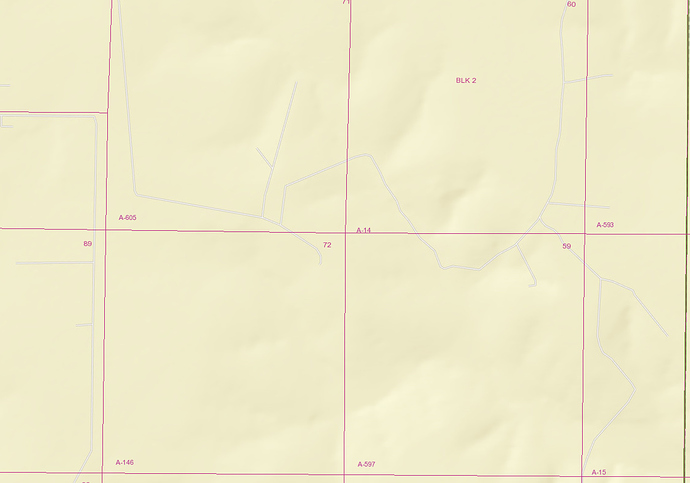

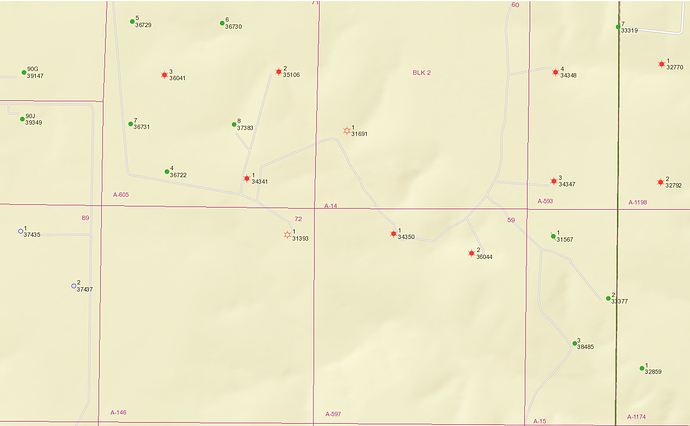

Sample blank and annotated images are attached below:

Sample blank image for a location:

Sample image for the same location as above containing dots of various types of oil wells