Is there a pre-trained model that will work with QRNN units? It looks like URLs.WT103_1 and URLs.WT103 only work with the AWD-LSTM units.

Not yet, no, as we didn’t get the same results as with LSTMs.

I understand. Can you tell me how much effort it would take for me to create a QRNN pre-trained model (using WikiText or another large dataset)? Is it as simple as creating a language model learner, pointing it to the WikiText corpus, training for a number (50?) of epochs and saving, then creating another language model learner and loading the weights from the saved WikiText language model? I am having success with the QRNN on a small medical dataset and wanted to see if transfer learning can boost performance even further.

Can you tell me how long it takes to train the LSTM pre-trained model? Could you point me to the code (or scripts) you used to create it?

Thanks

You have an example in a notebook here. It takes 1 hour per epoch (roughly) on a p3. Note that the first training of 10 epochs should already give you something that is fine.
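For readers without the notebook at hand, the pretrain-then-transfer workflow asked about above might look roughly like the sketch below. It is not the notebook's code. It assumes fastai v1 (a release where `language_model_learner` accepts `arch` and `config`, and where `load_pretrained` takes a weights file and a vocab pickle); the function names, the `qrnn_wt103` file name, and the learning rate are illustrative choices, and `data_lm` / `data_small` stand for data bunches you have already built.

```python
import pickle
from pathlib import Path


def pretrain_qrnn_lm(data_lm, epochs=10, max_lr=1e-2, name="qrnn_wt103"):
    """Train a QRNN language model from scratch; save its weights and vocab.

    Hypothetical helper: `data_lm` is assumed to be a fastai v1 language-model
    data bunch built from WikiText-103 (or another large corpus).
    """
    # Imported lazily so the module can be loaded without fastai installed.
    from fastai.text import AWD_LSTM, awd_lstm_lm_config, language_model_learner

    config = awd_lstm_lm_config.copy()
    config["qrnn"] = True  # use QRNN layers instead of LSTM layers

    learn = language_model_learner(data_lm, AWD_LSTM, config=config,
                                   drop_mult=1.0, pretrained=False)
    # Roughly 1 hour per epoch on a p3 was reported for the LSTM version.
    learn.fit_one_cycle(epochs, max_lr)

    learn.save(name)  # weights go to <learn.path>/<learn.model_dir>/<name>.pth
    model_dir = Path(learn.path) / learn.model_dir
    itos_path = model_dir / f"{name}_itos.pkl"
    with open(itos_path, "wb") as f:
        # The vocab is needed later to map pretrained embeddings to a new vocab.
        pickle.dump(data_lm.vocab.itos, f)
    return model_dir / f"{name}.pth", itos_path


def load_qrnn_pretrained(data_small, wgts_path, itos_path):
    """Build a QRNN learner on a smaller dataset and load the pretrained weights."""
    from fastai.text import AWD_LSTM, awd_lstm_lm_config, language_model_learner

    config = awd_lstm_lm_config.copy()
    config["qrnn"] = True  # must match the architecture used for pretraining

    learn = language_model_learner(data_small, AWD_LSTM, config=config,
                                   pretrained=False)
    # Assumption: load_pretrained remaps the embedding rows from the
    # pretraining vocab to the vocab of `data_small`.
    learn.load_pretrained(wgts_path, itos_path)
    return learn
```

After `load_qrnn_pretrained`, fine-tuning on the small dataset would proceed as with the stock pretrained LSTM model, for example with `learn.fit_one_cycle`.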

Thanks for your help!

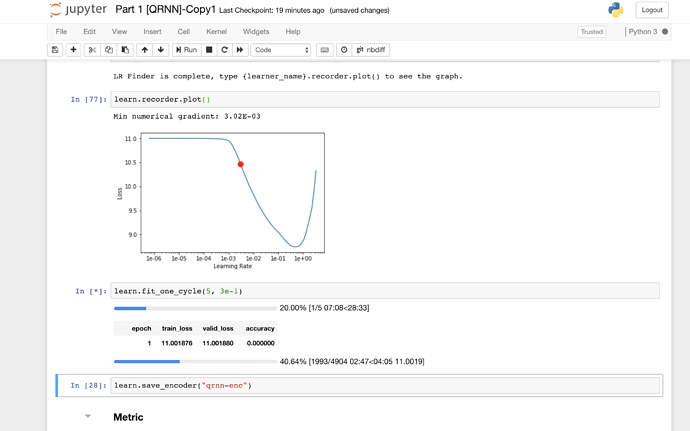

Hi there, I am trying to compare different language models on the Quora dataset (~100K documents). AWD-LSTM with LSTM layers gave reasonably good results. Then I copied the same notebook and just changed qrnn=True. Now the problem is that, even though lr_find() seems to suggest otherwise, the model doesn’t converge, even with gradient clipping.

I am hoping someone with more experience training QRNN layers can enlighten me; maybe there is a technical side of it that I am missing.

Here is the git repo

Here is what happens (I tried several learning rates, with and without clipping, etc.; none of them seems to help)

btw, nice Excel examples in your blog Understanding building blocks of ULMFIT. After reading it, I realized I should dig deeper into Deep learning NLP, starting first with MultiBatchEncoder.

Thanks, glad it helps. I also felt like diving deeper last week and it helps a lot, totally recommend it.

For the latest version of fastai it looks like they are moving away from supporting QRNN (arch=QRNN looks to be missing), or they just forgot to include it as one of the supported architectures. I don’t think it is consistent to have qrnn=True and arch=AWD_LSTM in the learner. If you roll back a few versions (before they added the arch= option) you can train a QRNN language model just fine. I trained a bunch from scratch on WikiText-103. In general they train much faster than the AWD_LSTM version, but do not perform as well (1-2% worse in accuracy). I used the same code that @sgugger provided in the notebook above.

In the new version you pass a config dictionary:

awd_lstm_lm_config = dict(emb_sz=400, n_hid=1150, n_layers=3, pad_token=1, qrnn=True, output_p=0.25,
                          hidden_p=0.1, input_p=0.2, embed_p=0.02, weight_p=0.15, tie_weights=True, out_bias=True)

awd_lstm_clas_config = dict(emb_sz=400, n_hid=1150, n_layers=3, pad_token=1, qrnn=True, output_p=0.4,
                            hidden_p=0.2, input_p=0.6, embed_p=0.1, weight_p=0.5)

Yes, but I don’t think it makes sense to have arch=AWD_LSTM and qrnn=True, two different architectures. How could a learner be both? Would need to be one or the other.

The only difference is you use QRNN layer vs LSTM layer. Yes, you can construct both models and check learn.model to see how they differ.

I don’t think the library intended for a given language model learner to have both QRNN and LSTM layers at the same time. Your code would suggest you are trying to do that.

awd_lstm_lm_config = dict(emb_sz=300, n_hid=1150, n_layers=3, pad_token=1, qrnn=True, output_p=0.25,
                          hidden_p=0.1, input_p=0.2, embed_p=0.02, weight_p=0.15, tie_weights=True, out_bias=True)

learn = language_model_learner(data=data_lm, arch=AWD_LSTM, config=awd_lstm_lm_config, drop_mult=1.0, pretrained=False)

I think for a given learner object you can only have one architecture.

I am 100% sure reality is not what you imply. This is how you create an AWD-LSTM model with either LSTM or QRNN layers. You should read the docs: https://docs.fast.ai/text.models.html (they are written for a reason) and my blog post as well.

I confirm that what Kerem is saying is true, even if the names can be confusing for now.

The architecture was first named AWD-LSTM, and its inventors added support for QRNN later. When there are pretrained AWD-QRNNs available, I’ll probably add AWD-QRNN as a proper arch, but for now, just use the config dictionary to create them.
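To make the point concrete, here is a small sketch in plain Python showing that the LSTM and QRNN variants share one config and differ only in the `qrnn` flag. The values are copied from the language-model config posted earlier in the thread; `BASE_LM_CONFIG` and `make_lm_config` are hypothetical names used for illustration, not part of fastai.

```python
# Values taken from the language-model config posted earlier in this thread.
BASE_LM_CONFIG = dict(
    emb_sz=400, n_hid=1150, n_layers=3, pad_token=1, qrnn=False,
    output_p=0.25, hidden_p=0.1, input_p=0.2, embed_p=0.02, weight_p=0.15,
    tie_weights=True, out_bias=True,
)


def make_lm_config(qrnn, **overrides):
    """Return a copy of the base config with the layer type chosen by `qrnn`."""
    config = dict(BASE_LM_CONFIG)  # copy so the base dict is never mutated
    config.update(overrides)       # optional tweaks, e.g. emb_sz=300
    config["qrnn"] = bool(qrnn)    # True -> QRNN layers, False -> LSTM layers
    return config


lstm_config = make_lm_config(qrnn=False)
qrnn_config = make_lm_config(qrnn=True)

# The only key whose value differs between the two variants:
differing = {k for k in lstm_config if lstm_config[k] != qrnn_config[k]}
print(differing)  # {'qrnn'}
```

Either dictionary would then be passed as `config=` alongside `arch=AWD_LSTM`, as in the `language_model_learner` call shown above.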

Yes, I was mistaken, my bad. Adding an AWD-QRNN arch in the future will make things a little clearer.

BTW, the QRNN model also converges normally now. I don’t know what the issue was; I tried reproducing it by creating the data and model fresh, but it just worked.

There was a serious issue with v1.0.43 that was fixed last night, maybe that’s why?

Maybe, thanks for pointing it out.

Hi guys, does anyone already have the pretrained weights of the Wikitext 103 for QRNN available somewhere?