I am also posting this here because it could be a bug in the library:

It’s not a bug; it’s just that unet_learner only fully supports ResNets for now.

Why is that? Does it have something to do with how other CNN architectures are built that makes them incompatible with U-Nets? How can one use other CNN architectures with U-Nets?

The U-Net uses intermediate features at several levels, which are grabbed with hooks. We designed those hooks while working with ResNets of different sizes, which is why I say those architectures are fully supported. We haven’t tried other architectures (and have no plans to do so in the mid-term), so it’s possible that they don’t return exactly the same tensors at the right times.
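To illustrate the idea in plain PyTorch (a toy encoder made up for this example, not fastai’s actual hook code): a forward hook on each top-level child of the body records that child’s output, and those outputs are the multi-resolution features a U-Net decoder reuses.

```python
import torch
import torch.nn as nn

# Toy encoder standing in for a CNN body: each top-level child halves the spatial size.
encoder = nn.Sequential(
    nn.Sequential(nn.Conv2d(3, 16, 3, stride=2, padding=1), nn.ReLU()),
    nn.Sequential(nn.Conv2d(16, 32, 3, stride=2, padding=1), nn.ReLU()),
    nn.Sequential(nn.Conv2d(32, 64, 3, stride=2, padding=1), nn.ReLU()),
)

stored = []  # activations captured by the hooks, in forward order

def save_output(module, inputs, output):
    # Forward hooks are called with (module, inputs, output); keep the output.
    stored.append(output.detach())

# One hook per top-level child: these are the "levels" the decoder would reuse.
handles = [child.register_forward_hook(save_output) for child in encoder.children()]

with torch.no_grad():
    encoder(torch.zeros(1, 3, 64, 64))

for h in handles:
    h.remove()  # remove hooks once they are no longer needed

shapes = [tuple(t.shape) for t in stored]
print(shapes)  # [(1, 16, 32, 32), (1, 32, 16, 16), (1, 64, 8, 8)]
```

Because the hooks are attached per top-level child, a body whose only child is one big `nn.Sequential` yields a single captured tensor instead of one per resolution level.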

If you manage to debug why this doesn’t work and find a fix, a PR would be most welcome!

Here is how you can create a DynamicUnet model from a DenseNet with a little manual restructuring.

The main reason unet_learner fails to create a U-Net model is that the DenseNet body built from torchvision’s model is a single sequential layer (its `features` block), i.e.

```python
len(model_sizes(densenet_body)) == 1
```

We can manually regroup the layers with nn.Sequential, as long as we don’t change the order of the computational graph:

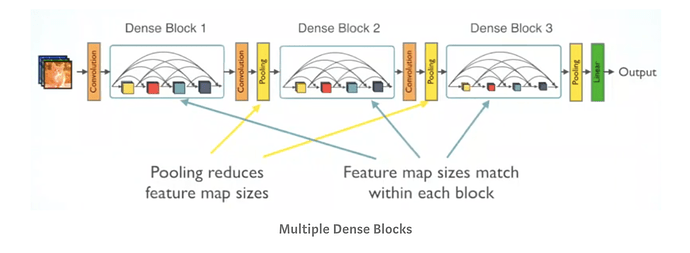

I chose to group the layers by splitting the architecture before each dense block.

Here is the code:

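```python
# fastai v1; the star import is assumed to provide create_body, models, Learner,
# to_device, apply_init, nn and torch
from fastai.vision import *
from fastai.callbacks.hooks import model_sizes

# DenseNet-121 body without ImageNet weights: nn.Sequential(features)
body = create_body(models.densenet121, False, None)

# LOAD PRETRAINED WEIGHTS
chx_state_dict = torch.load("/home/turgutluk/data/models/chx-mimic-only-pneumo-densenet121-320.pth",
                            map_location="cpu")

# Load the state dict from the chx model up to the last layer group.
# Checkpoint keys are the body's keys prefixed with '0.' (the body was model[0]).
# Only parameters are copied; BatchNorm running stats are buffers and are skipped.
for n, p in body.named_parameters(): p.data = chx_state_dict['0.' + n]

# Unwrap the single `features` Sequential to get at its 12 children
body = body[0]
densenet_children = list(body.children())

# Regroup without changing the order, starting a new group before each dense block:
# [conv0, norm0, relu0, pool0] [denseblock1, transition1] [denseblock2, transition2]
# [denseblock3, transition3] [denseblock4, norm5]
unet_densenet_body = nn.Sequential(nn.Sequential(*densenet_children[:4]),
                                   nn.Sequential(*densenet_children[4:6]),
                                   nn.Sequential(*densenet_children[6:8]),
                                   nn.Sequential(*densenet_children[8:10]),
                                   nn.Sequential(*densenet_children[10:]))

model_sizes(unet_densenet_body)
# [torch.Size([1, 64, 16, 16]),
#  torch.Size([1, 128, 8, 8]),
#  torch.Size([1, 256, 4, 4]),
#  torch.Size([1, 512, 2, 2]),
#  torch.Size([1, 1024, 2, 2])]

# Two layer groups: the encoder (m[0]) and everything from m[1] onwards
def _new_densenet_split(m:nn.Module): return (m[1],)

# `data` is your DataBunch; DynamicUnet needs the input image size
try: size = data.train_ds[0][0].size
except: size = next(iter(data.train_dl))[0].shape[-2:]

model = to_device(models.unet.DynamicUnet(unet_densenet_body, n_classes=data.c, img_size=size), data.device)
learn = Learner(data, model)
learn.split(_new_densenet_split)
# Kaiming-normal init for the non-BatchNorm layers of model[2]
apply_init(model[2], nn.init.kaiming_normal_)
```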
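An alternative to the parameter-by-parameter loop, sketched with toy modules (the models and `strip_prefix` are hypothetical stand-ins, assuming as the loop does that the checkpoint keys are the body’s keys prefixed with `'0.'`): strip the prefix and call `load_state_dict`, which also copies BatchNorm buffers (`running_mean`, `running_var`) that `named_parameters()` skips, and by default raises on missing or unexpected keys.

```python
import torch
import torch.nn as nn

def strip_prefix(state_dict, prefix):
    """Keep only keys starting with `prefix`, with that prefix removed."""
    return {k[len(prefix):]: v for k, v in state_dict.items() if k.startswith(prefix)}

# Hypothetical stand-ins: `full_model` plays the saved (body, head) model,
# `body` the freshly created body we want to initialise.
full_model = nn.Sequential(nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8)), nn.Linear(8, 2))
full_model[0][1].running_mean.fill_(0.5)  # pretend these stats were learned
checkpoint = full_model.state_dict()      # keys like '0.0.weight', '0.1.running_mean', '1.weight'

body = nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8))
# strict=True (the default) raises if any key is missing or unexpected.
result = body.load_state_dict(strip_prefix(checkpoint, "0."))

# For comparison: the parameter-only loop leaves BatchNorm running stats untouched.
body_loop = nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8))
for n, p in body_loop.named_parameters():
    p.data = checkpoint["0." + n]

print(body[1].running_mean[:3], body_loop[1].running_mean[:3])
```

For the real checkpoint this would be `body.load_state_dict(strip_prefix(chx_state_dict, '0.'))` before unwrapping `body[0]`, assuming the file holds a plain state dict.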

So it’s still possible to create a DynamicUnet model from a DenseNet with the current library, just not directly through unet_learner. Hope this helps!

Note: inside each dense block, DenseNet concatenates the feature maps of all previous layers, so it will consume a lot of GPU memory.

Hi @kcturgutlu,

I tried your approach, but I’m getting the following error:

```
RuntimeError: Input type (torch.cuda.FloatTensor) and weight type (torch.FloatTensor) should be the same
```

I have checked that my data and model are both on the GPU. Any help is appreciated.

Can you debug by checking each parameter’s device in your model?
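A minimal sketch of that check in plain PyTorch (`devices_in` is a hypothetical helper): it groups every parameter and buffer by device. On a model that is fully on the GPU you would expect a single `cuda:0` entry; any names listed under `cpu` point to the modules that were left behind. For a fastai `Learner`, pass `learn.model`.

```python
import torch
import torch.nn as nn

def devices_in(model: nn.Module):
    """Map each device to the names of the parameters and buffers stored on it."""
    found = {}
    for name, tensor in list(model.named_parameters()) + list(model.named_buffers()):
        found.setdefault(str(tensor.device), []).append(name)
    return found

# Toy model for illustration; replace with your own model.
model = nn.Sequential(nn.Conv2d(3, 8, 3), nn.BatchNorm2d(8))
report = devices_in(model)
for device, names in report.items():
    print(device, len(names), names[:3])
```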

Ok. I will check it.