Is it just me, or does it take forever for a notebook on Paperspace to open? I typically have to wait 10-15 minutes, when it used to be instantaneous.

I have noticed this as well. It doesn’t seem to make much difference whether it’s a paid or a free runtime, either.

UPDATE: I got a message back from Paperspace support, saying:

"Thanks for contacting Paperspace. It looks like you are currently running the notebook 'REFERENCENUMBER'. Please make sure to store all of your files within the /storage folder. This alone will improve your provisioning times and decrease any chance of errors."

I haven’t fully figured out how to do this yet, or whether it’s even advised given the course’s instructions, but in case this is useful for someone else, I’m posting the message here. I removed the reference number as it was only specific to me.

UPDATE2: what I did was to move the fastbook folder and the course-v4 folder into the storage folder. You can do this with the GUI. This seems to result in significantly faster load times when starting up the server. YMMV.

Further UPDATE: I returned to my Paperspace machine today, and all the folders with all my work were gone. There was no fastbook folder, and no course-v4. I hadn’t done much, so it wasn’t too big a deal, but I think the issue here was moving things into the storage folder. I’m not sure I’d follow the advice from the support email above any more, given this experience, at least until they explain a bit more about what happened.
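For anyone who still wants to try the /storage suggestion after the experience above, one more cautious variant is to copy a folder into storage rather than move it, so the original stays where it was. This is only a minimal sketch: the helper name `copy_into_storage` is made up for illustration, and nothing in the support email says copying behaves any differently from moving with respect to provisioning times.

```python
import shutil
from pathlib import Path


def copy_into_storage(src, storage_root="/storage"):
    """Copy the folder `src` into `storage_root`, leaving the original in place.

    Hypothetical helper: refuses to overwrite an existing destination.
    """
    src = Path(src)
    dest = Path(storage_root) / src.name
    if dest.exists():
        raise FileExistsError(f"{dest} already exists; not overwriting")
    shutil.copytree(src, dest)
    return dest


# Example usage (the source path is an assumption about where the repo lives):
# copy_into_storage("fastbook")
```

Because the original folder is left untouched, there is still a copy to fall back on if the one in storage goes missing.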

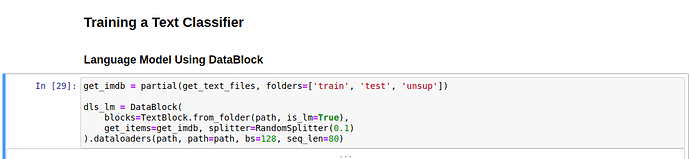

I have a problem with Paperspace. Page 44 of the book has a simple IMDB example. When text_classifier_learner(…) is run, I always get an "index out of range" error. The other small examples from chapter 1 of the book worked. I have not done any updates or special steps; I just created a new notebook (using Paperspace’s premade fastai v4 Gradient environment).

Are there steps I should take after creating a new notebook to get text_classifier_learner to work with the IMDB dataset?

Hey there, did you find a fix for this problem?

I’m using paperspace gradient with free-gpu. I’m on Lesson 8. (notebook 10_nlp)

When I run this:

I get this error:

FileNotFoundError: [Errno 2] No such file or directory: '/storage/data/imdb_tok/counter.pkl'

I could not find counter.pkl in any of the folders yet. How can this be solved?

To anyone looking to solve this issue:

delete the folder by launching a terminal in /storage/data/
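The same cleanup can be sketched from a notebook cell instead of a terminal. Note the assumption: the post does not name the folder, so this sketch guesses it is the `imdb_tok` folder from the error message above, and it only deletes that folder when `counter.pkl` is actually missing. The function name is made up for illustration.

```python
import shutil
from pathlib import Path


def remove_incomplete_tok_dir(tok_dir="/storage/data/imdb_tok"):
    """Delete `tok_dir` only if it exists but has no counter.pkl inside.

    Assumption: the folder to delete is the tokenized-text folder named in
    the FileNotFoundError. Returns True if the folder was removed.
    """
    tok_dir = Path(tok_dir)
    if tok_dir.is_dir() and not (tok_dir / "counter.pkl").exists():
        shutil.rmtree(tok_dir)
        return True
    return False


# Example usage:
# remove_incomplete_tok_dir()
```

After removing the folder, re-running the notebook cell should give the library a chance to rebuild it from scratch.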

Hi. I’m trying to get set up on Paperspace. For step 3 in the Basic steps above, I’m not seeing the container “Paperspace + Fast.Ai 2.0 (V4).” When I look under the all containers tab, the only fastai containers I’m seeing are “Paperspace + Fast.AI” and “Paperspace + Fast.AI 1.0 (V3).” I initially selected “Paperspace + Fast.AI” based on the instructions given here https://course.fast.ai/start_gradient.html#step-2-create-notebook

However, with this container, the import statements in /course-v4/nbs/01_intro.ipynb are failing. Any guidance on what is the correct container I need to use?

Hi @tomg or @dkobran. Should there be a “Paperspace + Fast.Ai 2.0 (V4)” container available on Paperspace?

Hi @agrahame – These instructions are correct, this container is the latest and all encompassing container. Meaning it has both fastbook and course-v4 in it and has been tested with both.

Couple of tips for success:

You MUST run on a GPU capable instance, you cannot run fastai without a GPU.

As always, please run a refresh of the repo once inside course-v4 (or fastbook) directory via a git pull.
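A quick way to confirm the first tip from inside a notebook is to ask PyTorch whether it can see a CUDA device. This is just a minimal sketch; the helper name `gpu_available` is made up here, and it returns False when PyTorch is not importable at all.

```python
def gpu_available():
    """Return True if PyTorch is importable and reports a usable CUDA device."""
    try:
        import torch
    except ImportError:
        # No PyTorch in this environment, so no GPU from its point of view.
        return False
    return torch.cuda.is_available()


print("GPU visible to PyTorch:", gpu_available())
```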

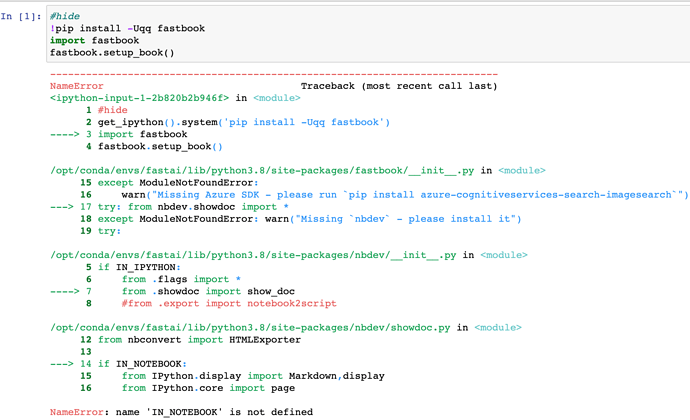

@tomg thanks for your quick response! I’ve ensured that I’m running on a GPU enabled instance and that my fastbook repository is up-to-date. However, if I try to execute the setup cell inside the /fastbook/01_intro.ipynb notebook, I’m getting the below errors:

I even tried adding “pip install azure-cognitiveservices-search-imagesearch” and “pip install nbdev” but in both cases I get the output that they’re already installed. However, I still just get the same NameError. Not sure if I’m missing something.

Hmm, this is very strange indeed @agrahame since all of these dependencies are pre-installed. Perhaps you’ve modified your notebook? I was unable to replicate this issue just now.

I suggest creating a fresh new notebook using the Paperspace + Fast.AI container found under our Recommended containers – then, once launched, open a terminal, cd into fastbook, and pull down the latest version via git pull. These are the steps I followed and was able to start lesson 1 without issues.

@tomg I think I’ve identified the issue. In the instructions found here https://course.fast.ai/start_gradient#step-3--update-the-fastai-library it says to run “pip install fastai fastcore --upgrade” after starting the machine. However, this seems to be what’s leading to the errors I was seeing. I just created a notebook from scratch and didn’t run that pip install and now I’m not seeing the error. Thanks for your help.

I had the same error message (“IN_NOTEBOOK is not defined”) with the first cell (“from utils import *, from fastai.vision.widgets import *”) when trying to run the original 02_production notebook (fresh new notebook as Tom suggested, followed the Gradient instructions including the “pip install fastai fastcore --upgrade”). Tried it without the “pip install” command and it ran without errors. Thanks for the help.

To solve the problem, run

pip install nbdev --upgrade

Thanks, that worked for the IN_NOTEBOOK error.
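Since the posts above point at a version mismatch between fastai, fastcore and nbdev after upgrading, it may help to print what is actually installed before and after running pip. A minimal sketch using only the standard library (Python 3.8+); the helper name `installed_versions` is made up for illustration.

```python
from importlib import metadata


def installed_versions(packages=("fastai", "fastcore", "nbdev")):
    """Map each package name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions().items():
    print(f"{name}: {version or 'not installed'}")
```

Posting this output along with the error makes it easier for others to tell whether the upgrade step is what changed.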

I’m having trouble getting through errors in Lesson 1.

I’m using the free trial, and I’ve gone through the instructions to update things in terminal,

ie:

pip install fastai fastcore --upgrade

cd fastbook

git pull

Running the first cell from utils import * had a hitch, this was fixed with pip install nbdev --upgrade, but now for “Running my First Notebook” I get the following error:

<ipython-input-2-3244ec10e8a7> in <module>

9

10 learn = cnn_learner(dls, resnet34, metrics=error_rate)

---> 11 learn.fine_tune(1)

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastcore/utils.py in _f(*args, **kwargs)

471 init_args.update(log)

472 setattr(inst, 'init_args', init_args)

--> 473 return inst if to_return else f(*args, **kwargs)

474 return _f

475

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/callback/schedule.py in fine_tune(self, epochs, base_lr, freeze_epochs, lr_mult, pct_start, div, **kwargs)

159 "Fine tune with `freeze` for `freeze_epochs` then with `unfreeze` from `epochs` using discriminative LR"

160 self.freeze()

--> 161 self.fit_one_cycle(freeze_epochs, slice(base_lr), pct_start=0.99, **kwargs)

162 base_lr /= 2

163 self.unfreeze()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastcore/utils.py in _f(*args, **kwargs)

471 init_args.update(log)

472 setattr(inst, 'init_args', init_args)

--> 473 return inst if to_return else f(*args, **kwargs)

474 return _f

475

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/callback/schedule.py in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt)

111 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),

112 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}

--> 113 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd)

114

115 # Cell

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastcore/utils.py in _f(*args, **kwargs)

471 init_args.update(log)

472 setattr(inst, 'init_args', init_args)

--> 473 return inst if to_return else f(*args, **kwargs)

474 return _f

475

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)

205 self.opt.set_hypers(lr=self.lr if lr is None else lr)

206 self.n_epoch,self.loss = n_epoch,tensor(0.)

--> 207 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)

208

209 def _end_cleanup(self): self.dl,self.xb,self.yb,self.pred,self.loss = None,(None,),(None,),None,None

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)

153

154 def _with_events(self, f, event_type, ex, final=noop):

--> 155 try: self(f'before_{event_type}') ;f()

156 except ex: self(f'after_cancel_{event_type}')

157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_fit(self)

195 for epoch in range(self.n_epoch):

196 self.epoch=epoch

--> 197 self._with_events(self._do_epoch, 'epoch', CancelEpochException)

198

199 @log_args(but='cbs')

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)

153

154 def _with_events(self, f, event_type, ex, final=noop):

--> 155 try: self(f'before_{event_type}') ;f()

156 except ex: self(f'after_cancel_{event_type}')

157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_epoch(self)

189

190 def _do_epoch(self):

--> 191 self._do_epoch_train()

192 self._do_epoch_validate()

193

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_epoch_train(self)

181 def _do_epoch_train(self):

182 self.dl = self.dls.train

--> 183 self._with_events(self.all_batches, 'train', CancelTrainException)

184

185 def _do_epoch_validate(self, ds_idx=1, dl=None):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)

153

154 def _with_events(self, f, event_type, ex, final=noop):

--> 155 try: self(f'before_{event_type}') ;f()

156 except ex: self(f'after_cancel_{event_type}')

157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in all_batches(self)

159 def all_batches(self):

160 self.n_iter = len(self.dl)

--> 161 for o in enumerate(self.dl): self.one_batch(*o)

162

163 def _do_one_batch(self):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in one_batch(self, i, b)

177 self.iter = i

178 self._split(b)

--> 179 self._with_events(self._do_one_batch, 'batch', CancelBatchException)

180

181 def _do_epoch_train(self):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)

153

154 def _with_events(self, f, event_type, ex, final=noop):

--> 155 try: self(f'before_{event_type}') ;f()

156 except ex: self(f'after_cancel_{event_type}')

157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_one_batch(self)

162

163 def _do_one_batch(self):

--> 164 self.pred = self.model(*self.xb)

165 self('after_pred')

166 if len(self.yb): self.loss = self.loss_func(self.pred, *self.yb)

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

720 result = self._slow_forward(*input, **kwargs)

721 else:

--> 722 result = self.forward(*input, **kwargs)

723 for hook in itertools.chain(

724 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/container.py in forward(self, input)

115 def forward(self, input):

116 for module in self:

--> 117 input = module(input)

118 return input

119

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

720 result = self._slow_forward(*input, **kwargs)

721 else:

--> 722 result = self.forward(*input, **kwargs)

723 for hook in itertools.chain(

724 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/container.py in forward(self, input)

115 def forward(self, input):

116 for module in self:

--> 117 input = module(input)

118 return input

119

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

720 result = self._slow_forward(*input, **kwargs)

721 else:

--> 722 result = self.forward(*input, **kwargs)

723 for hook in itertools.chain(

724 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/container.py in forward(self, input)

115 def forward(self, input):

116 for module in self:

--> 117 input = module(input)

118 return input

119

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

720 result = self._slow_forward(*input, **kwargs)

721 else:

--> 722 result = self.forward(*input, **kwargs)

723 for hook in itertools.chain(

724 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torchvision/models/resnet.py in forward(self, x)

61 out = self.relu(out)

62

---> 63 out = self.conv2(out)

64 out = self.bn2(out)

65

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)

720 result = self._slow_forward(*input, **kwargs)

721 else:

--> 722 result = self.forward(*input, **kwargs)

723 for hook in itertools.chain(

724 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/conv.py in forward(self, input)

417

418 def forward(self, input: Tensor) -> Tensor:

--> 419 return self._conv_forward(input, self.weight)

420

421 class Conv3d(_ConvNd):

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/conv.py in _conv_forward(self, input, weight)

413 weight, self.bias, self.stride,

414 _pair(0), self.dilation, self.groups)

--> 415 return F.conv2d(input, weight, self.bias, self.stride,

416 self.padding, self.dilation, self.groups)

417

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/utils/data/_utils/signal_handling.py in handler(signum, frame)

64 # This following call uses `waitid` with WNOHANG from C side. Therefore,

65 # Python can still get and update the process status successfully.

---> 66 _error_if_any_worker_fails()

67 if previous_handler is not None:

68 previous_handler(signum, frame)

RuntimeError: DataLoader worker (pid 1862) is killed by signal: Killed.

How do I fix this? Why’d I get this problem so early on in the first place? Thanks!

UPDATE:

I deleted and remade the notebook, following the above steps (before the runtime error) and now everything is working as intended. Not sure what happened in the first place, but this was my fix.
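For what it's worth, "DataLoader worker ... is killed by signal: Killed" often means a worker process ran out of memory, although nothing in this thread confirms that was the cause here. If recreating the notebook does not help, one thing to try is loading data in the main process with a smaller batch size. The sketch below assumes the lesson 1 cats-vs-dogs setup; `build_low_memory_dls` is a made-up helper, and the values `bs=16` and `num_workers=0` are guesses rather than recommended settings.

```python
def is_cat(name):
    """Lesson 1 labelling rule: cat images have a capitalised filename."""
    return name[0].isupper()


def build_low_memory_dls(path, bs=16, num_workers=0):
    """Build the lesson 1 DataLoaders with no worker processes and a small batch.

    fastai is imported here so the helper can be defined without it installed.
    """
    from fastai.vision.all import ImageDataLoaders, Resize, get_image_files

    return ImageDataLoaders.from_name_func(
        path, get_image_files(path), valid_pct=0.2, seed=42,
        label_func=is_cat, item_tfms=Resize(224),
        bs=bs, num_workers=num_workers)


# Example usage inside the notebook (requires fastai and a GPU instance):
# from fastai.vision.all import *
# path = untar_data(URLs.PETS)/'images'
# dls = build_low_memory_dls(path)
# learn = cnn_learner(dls, resnet34, metrics=error_rate)
# learn.fine_tune(1)
```

Training will be slower with `num_workers=0`, so this is more of a diagnostic than a permanent setting.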

Hello, I want to use TeX fonts for my matplotlib plots on Gradient. How can I install them on the server? Right now I just get an error that latex is not found when I try to plot with TeX fonts.
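Matplotlib's `text.usetex` option needs a working LaTeX installation on the machine, which is what the "latex is not found" error is pointing at. How to install LaTeX inside the Gradient container is not covered in this thread, but a small guard can at least keep plots working in the meantime by only enabling TeX rendering when a `latex` executable is on the PATH. A minimal sketch; both helper names are made up for illustration.

```python
import shutil


def latex_available():
    """Return True if a `latex` executable can be found on the PATH."""
    return shutil.which("latex") is not None


def configure_tex_fonts():
    """Enable matplotlib's TeX text rendering only when LaTeX is present.

    Returns the value that was set for rcParams['text.usetex'].
    """
    import matplotlib as mpl

    use_tex = latex_available()
    mpl.rcParams["text.usetex"] = use_tex
    return use_tex


# Example usage before plotting:
# configure_tex_fonts()
```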

I’m on a paid Paperspace plan and using the fastaiv2 notebooks.

Following the 10_nlp.ipynb, when running

learn.save('1epoch') I get a “File system is read-only” error.

It seems that learn.path is Path('/storage/data/imdb'), which is read-only.

I can easily set learn.path to something else (e.g., Path('/storage/models/imdb')) to solve this immediate issue.

However, I am concerned that doing so might have other implications (for example, will that cause problems with loading data?).

What is the recommended approach to solve this issue?
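As far as I understand it, `learn.path` is where the learner writes models and exports, while the data itself is read through the DataLoaders, so pointing `learn.path` somewhere writable should not affect data loading. Treat that as an assumption rather than an official answer. A minimal sketch for picking a writable folder before saving; `first_writable_dir` is a made-up helper, and the second candidate path in the usage comment is hypothetical.

```python
import os
from pathlib import Path


def first_writable_dir(candidates):
    """Return the first candidate directory that can be created and written to.

    Returns None if no candidate is usable.
    """
    for candidate in candidates:
        path = Path(candidate)
        try:
            path.mkdir(parents=True, exist_ok=True)
        except OSError:
            # Read-only filesystem, permission problem, or invalid location.
            continue
        if os.access(path, os.W_OK):
            return path
    return None


# Example usage in the notebook (the second path is a hypothetical fallback):
# learn.path = first_writable_dir(["/storage/models/imdb", "/notebooks/models/imdb"])
# learn.save('1epoch')
```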

Thanks

Thanks for the tip re wandb, was running into this problem myself. I hope it’ll be implemented soon, but it’s been many months since your comment and still no tensorboard.