Hi,

I am trying to fit an RNN that predicts whether a user is going to buy a premium membership (or some other objective), given the customer's sequence of activity.

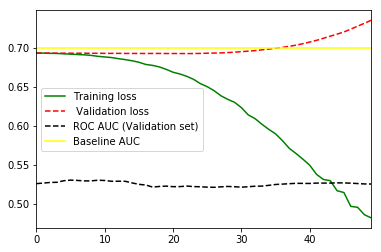

It looks like my model starts overfitting quickly, after a couple of epochs. I have tried a few things:

- Increased batch_size

- Decreased the size of the embedding and RNN layers (to reduce complexity)

- Changed the learning rate

- Removed bidirectionality in the RNN layer

- Added a Dropout layer [edited later]

What else can I try?

PS: I am using very basic Keras code to build this model.
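
A minimal sketch of the kind of model described above, for illustration only. It is not the exact code: the vocabulary size, sequence length, layer widths, dropout rate, learning rate, and batch size are assumed placeholder values, the LSTM cell is an assumed choice of RNN, and the data is random noise standing in for the real activity sequences.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Assumed placeholders, not values from the real dataset.
VOCAB_SIZE = 1000  # number of distinct activity types
MAX_LEN = 50       # padded length of each activity sequence


def build_model(embed_dim=16, rnn_units=16, dropout=0.3, learning_rate=1e-3):
    """Small unidirectional RNN classifier with a dropout layer."""
    model = keras.Sequential([
        keras.Input(shape=(MAX_LEN,)),
        layers.Embedding(VOCAB_SIZE, embed_dim),  # reduced embedding size
        layers.LSTM(rnn_units),                   # no Bidirectional wrapper
        layers.Dropout(dropout),                  # dropout before the output
        layers.Dense(1, activation="sigmoid"),    # buys premium: yes / no
    ])
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=learning_rate),
        loss="binary_crossentropy",
        metrics=["accuracy"],
    )
    return model


# Random stand-in data: 64 sequences of activity ids with binary labels.
rng = np.random.default_rng(0)
x = rng.integers(1, VOCAB_SIZE, size=(64, MAX_LEN))
y = rng.integers(0, 2, size=(64, 1))

model = build_model()
history = model.fit(
    x, y,
    batch_size=32,         # larger batches were one of the things tried
    epochs=1,
    validation_split=0.25, # held-out part used to watch for overfitting
    verbose=0,
)
```

Comparing `history.history["loss"]` with `history.history["val_loss"]` over more epochs is what shows the overfitting pattern: training loss keeps falling while validation loss turns upward.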