I have only one question: how do I get the outputs to pass to get_predictions?

You should be able to call get_preds and pass in a DataLoader. (Or just call get_preds without passing anything in and let it use the validation set.)

Thanks. I have an issue before calling your methods that I am investigating. When I call learn.get_preds(dl=dls.valid)

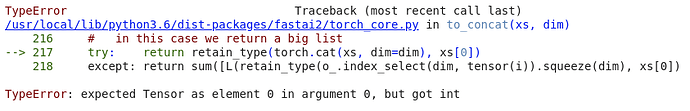

fastai throws an exception

No pressure on anybody, just posting for reference

This is due to the RetinaNet.forward method returning the box predictions, the class predictions, and also a list of scales. This caused a few issues for me as well (show_results etc. won’t work nicely). I could fix it by passing the scales to the constructor and not computing them dynamically. This is of course far from optimal, because the scales depend on the image input size and are also required in the loss function. Maybe a callback that computes the scales and stores them for later use in the loss function would be a good idea.

Does anyone have a better idea on how to handle this?

More generally I feel that for a model to really nicely integrate with all the fastai functionality the model should only output things like the “ys” of the training set, so that the decoding pipeline can handle this nicely.

Pinging @sgugger, what do you feel would be the best way for integrating this in? (Via a callback like @j.laute recommends?) or some external type-dispatching for the particular datatypes’ show method? Perhaps something similar to how the TensorPoints keep track of the original image input size

The model used should output things like the ys of the training set, as @j.laute said. Also note that your loss function can have a processing step to help with the show_results method: if you implement a decodes method in it, you can make your model output look the same as the ys. For instance, CrossEntropyLossFlat() applies an argmax in this method to make the outputs exactly like the targets.
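To make the decodes idea concrete, here is a minimal framework-free sketch. `ToyClassificationLoss` is a hypothetical stand-in written in plain Python, not fastai's implementation: the loss itself consumes raw per-class scores, while `decodes` applies an argmax so the output has the same form as the integer targets.

```python
import math


class ToyClassificationLoss:
    """Hypothetical stand-in for a loss with a `decodes` step (not fastai code)."""

    def __call__(self, scores, targets):
        # Mean cross-entropy over a batch of raw per-class scores (logits).
        total = 0.0
        for row, target in zip(scores, targets):
            log_sum_exp = math.log(sum(math.exp(s) for s in row))
            total += log_sum_exp - row[target]
        return total / len(targets)

    def decodes(self, scores):
        # Argmax per row, so the decoded output looks like the integer targets.
        return [max(range(len(row)), key=lambda i: row[i]) for row in scores]


loss_func = ToyClassificationLoss()
scores = [[0.1, 2.0, -1.0], [3.0, 0.2, 0.5]]  # raw model outputs
targets = [1, 0]                               # same form as the ys
decoded = loss_func.decodes(scores)            # argmax per row
```

The point of the split is that training still sees the full scores, and only display or evaluation code sees the decoded form.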

It’s not just the show method. For example, the to_fp16() callback will also fail, as it tries to call to_float on the predictions and doesn’t expect a list in there (only tensors). I feel that either fastai needs some global support for the model outputting things that are not part of the actual predictions (similar to how n_inp is used to tell the model what part of the data is input and what is target), or a callback should just store these things.

EDIT: I posted before seeing sgugger’s reply. I’ll check out the loss function decodes and see if I can get it to work this way.

@muellerzr I finally got a working mAP evaluation for the output of your RetinaNet using this library, so I can soon start experimenting with dilated xresnet.

As a solution to the problems above (model output not matching prediction target) I have 2 ideas which I will try and code up today:

sizes should be stored in the RetinaNet class in both cases, and can be accessed by the loss function via a reference passed to the constructor.

And then either:

1. have a callback do score thresholding and non-max suppression in the `after_loss` stage (modifying `learn.pred`), or

2. do score thresholding and non-max suppression directly in `model.forward`, store the `raw_predictions` in the model as well, and access them via the reference in the loss function.

I think 1. is better as it should work with the fp16 callback for example
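For reference, the score thresholding and non-max suppression step itself can be sketched in plain Python as below. This is only an illustration of the technique, not the callback: the function names and the default thresholds (0.3 and 0.5) are assumptions, and boxes are assumed to be `(x1, y1, x2, y2)` tuples. In practice the same logic would run on tensors, and torchvision ships an NMS operator for that.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def threshold_and_nms(boxes, scores, score_thresh=0.3, iou_thresh=0.5):
    """Return indices of the kept boxes, highest score first."""
    # Step 1: drop low-confidence boxes.
    candidates = [i for i, s in enumerate(scores) if s >= score_thresh]
    # Step 2: greedy NMS, visiting boxes from highest to lowest score.
    candidates.sort(key=lambda i: scores[i], reverse=True)
    keep = []
    for i in candidates:
        # Keep a box only if it does not overlap too much with a kept one.
        if all(iou(boxes[i], boxes[j]) <= iou_thresh for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30), (0, 0, 5, 5)]
scores = [0.9, 0.8, 0.7, 0.1]
kept = threshold_and_nms(boxes, scores)
```

Here the second box overlaps heavily with the first and is suppressed, and the last box falls below the score threshold.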

I’ll share a notebook here when I’m done

Any luck?

Quick update:

- got a non-max suppression callback working

- using the object detection metrics library above, I got fastai2 metrics for mean AP (Average Precision) and per-class AP working (unfortunately the evaluation can be really slow if there are a lot of box detections and images)

- testing with the dataset sample (2501 images total) in the notebook, after ca. 30 epochs I only get an mAP of around 12, which is pretty bad. I think this is due to the small dataset size

- fastai2’s `show_results` is also working (though it doesn’t visually distinguish between the predicted boxes and the actual ones)
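For anyone unfamiliar with the metric, here is a small plain-Python sketch of per-class AP and mean AP. It is not the code from the metrics library; it assumes all-point interpolation of the precision-recall curve and assumes that matching detections to ground-truth boxes (e.g. by an IoU threshold) has already been done, so each detection is just a `(score, is_true_positive)` pair. All names are hypothetical.

```python
def average_precision(detections, num_ground_truth):
    """All-point interpolated AP for one class.

    detections: list of (score, is_true_positive) pairs.
    num_ground_truth: number of ground-truth boxes of this class.
    """
    if num_ground_truth == 0:
        return 0.0
    ordered = sorted(detections, key=lambda d: d[0], reverse=True)
    precisions, recalls = [], []
    tp = fp = 0
    for _, is_tp in ordered:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)
    # Interpolate: each precision becomes the max precision to its right.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Sum rectangle areas under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class):
    """per_class: dict mapping class name -> (detections, num_ground_truth)."""
    aps = {c: average_precision(d, n) for c, (d, n) in per_class.items()}
    return sum(aps.values()) / len(aps), aps


per_class = {
    "cat": ([(0.9, True), (0.8, False), (0.7, True)], 2),
    "dog": ([(0.6, False)], 1),
}
mean_ap, class_aps = mean_average_precision(per_class)
```

The sorting and cumulative counting happen once per class over all images, which is part of why evaluation gets slow with many detections.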

I will be training on a bigger dataset (either COCO or Pascal VOC) overnight to see if it can reach reasonable results. Then I’ll share the notebook and make a PR to @muellerzr’s course GitHub project.

(The code is really hacky and awful in some places, and I hope we can make it concise and clear going forward.)

Hello. Just want to be notified about any progress in object detection inference.

Sorry for the delay, had a slow few days…

Here is the link to my fork with the notebook “06_Object_Detection_changed” being the modified notebook. I removed the setup/installation code as I run this locally. In the object_detection_metrics folder the code is copied from rafaelpadilla/Object-Detection-Metrics.

There are 3 modifications to @muellerzr’s code:

- the `RetinaNet` only returns the box and class predictions (in this order), and the dynamically computed sizes are stored in `self.sizes`

- `RetinaNetFocalLoss` takes a reference to the model, from which it gets the computed sizes

- `RetinaNetFocalLoss` has a `decodes` method that applies the argmax over the predicted scores over all classes. (We need the scores for the non-max suppression, but want the argmax for displaying the result.)

These changes make it possible to use show_results as the output of the model now is similar to the dependent variables of the dataloaders (boxes and classes).
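The overall pattern can be sketched without any framework as follows. `ToyDetector` and `ToyDetectionLoss` are hypothetical stand-ins, not the notebook's classes: the strides, the constant predictions and the placeholder loss value are all made up purely to show how the sizes travel from the model to the loss through a stored reference.

```python
class ToyDetector:
    """Hypothetical stand-in for the modified model (not the notebook's code)."""

    def __init__(self):
        self.sizes = None  # filled in on every forward pass

    def forward(self, image_hw):
        h, w = image_hw
        # Pretend feature maps at strides 8, 16 and 32; sizes depend on input.
        self.sizes = [(h // s, w // s) for s in (8, 16, 32)]
        n_anchors = sum(fh * fw for fh, fw in self.sizes)
        box_preds = [[0.0, 0.0, 0.0, 0.0] for _ in range(n_anchors)]
        class_preds = [[0.1, 0.7, 0.2] for _ in range(n_anchors)]
        # Only boxes and class scores are returned, mirroring the targets.
        return box_preds, class_preds


class ToyDetectionLoss:
    """Hypothetical loss that reads the sizes from the model it was given."""

    def __init__(self, model):
        self.model = model  # reference used to read the sizes later

    def __call__(self, preds, targets):
        box_preds, _ = preds
        # A real loss would build anchors from self.model.sizes here.
        n_anchors = sum(fh * fw for fh, fw in self.model.sizes)
        assert len(box_preds) == n_anchors
        return 0.0  # placeholder value; the focal loss itself is omitted

    def decodes(self, preds):
        box_preds, class_preds = preds
        # Argmax over class scores, used only for display.
        labels = [max(range(len(row)), key=lambda i: row[i]) for row in class_preds]
        return box_preds, labels


model = ToyDetector()
loss_func = ToyDetectionLoss(model)
preds = model.forward((64, 64))
loss_value = loss_func(preds, targets=None)
decoded_boxes, decoded_labels = loss_func.decodes(preds)
```

One thing to keep in mind with this design is that the loss always sees the sizes from the most recent forward pass, so the two must be called in lockstep.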

Then the notebook contains a non-max-suppression callback which uses the torchvision operator, and a mAP metric using the github repo linked above.

If you have any questions or ideas for improvement, or find any bugs, please share them.

DISCLAIMER: THIS IS NOT FINISHED CODE,

I don’t have much time to work on it at the moment and it is not getting results anywhere near good. I’m sharing this so others can have a look and improve the approach so we can get closer to good object detection in fastai2

@j.laute, nice notebook, but I still cannot use the trained model for inference. Your code looks good, but it might error out if the dataset is small or the initial model is bad. I made some small changes and am not sure they are correct.

    def accumulate(self, learn):
        # add predictions and ground truths
        #pdb.set_trace()
        pred_boxes, pred_scores = learn.pred
        # 0 means no prediction
        # when the model is bad, no predictions are generated
        if pred_scores.shape[1] == 0:
            return

in LookUpMetric:

    # return -1 if no predictions are generated
    @property
    def value(self):
        if self.reference_metric.res is None:
            _ = self.reference_metric.value
            return -1
        if self.lookup_idx < len(self.reference_metric.res):
            return self.reference_metric.res[self.lookup_idx]["AP"]
        else:
            return -1

One more issue: learn.export() does not work and I got this error:

PicklingError: Can't pickle <function <lambda> at 0x12a2ef050>: attribute lookup <lambda> on __main__ failed
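An error of this kind usually means that something attached to the object being pickled is a lambda or another function that pickle cannot look up by name. Assuming that is the cause here (I have not checked where in the notebook it comes from), the behaviour can be reproduced with the standard library alone. The helper `can_pickle` and the two example callables are made up for illustration.

```python
import functools
import operator
import pickle


def can_pickle(obj):
    """Return True if `obj` survives pickle.dumps, False otherwise."""
    try:
        pickle.dumps(obj)
        return True
    except (pickle.PicklingError, AttributeError, TypeError):
        return False


# An anonymous function: pickle stores functions by name and cannot find it.
double_lambda = lambda x: 2 * x

# The same behaviour built from importable parts, which pickle can reference.
double_partial = functools.partial(operator.mul, 2)

lambda_ok = can_pickle(double_lambda)
partial_ok = can_pickle(double_partial)
restored = pickle.loads(pickle.dumps(double_partial))
```

If a lambda is indeed the culprit, replacing it with a named function defined in an importable module (or a `functools.partial` as above) is the usual way around it.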

Hey @muellerzr,

If we only have one reference image, say a retail board of ‘Toysrus’, and want to detect it in an image of an area that has ‘Toysrus’ among other retailer boards, how would you proceed using fastai2?

We will not have many images of the board, i.e. it’s a standard board that needs to be detected.

Any approach will be helpful.

Thanks

Ganesh Bhat

Has anyone taken a look at the new End-to-End Object Detection with Transformers by Facebook? The advantage being having to do way less hyperparameter tuning as the architecture is more straightforward compared to other approaches.

Might be a candidate to port to fastai2…

I had a look at the paper, models are also available pretrained in pytorch

This video explains it very nicely if anyone is interested: https://www.youtube.com/watch?v=T35ba_VXkMY

We still need the metrics (Average Precision) to have nice object detection in fastai2, if I’m not wrong. I hope to work on that very soon (there is some preliminary work on the forums).

Has anybody managed to work out how to plot a confusion matrix in the RetinaNet multi-object detection notebook? I’ve been playing about with it but haven’t managed to get anything working.

DETR is fantastic (the end to end transformer). I’m working with it extensively now and it is way smarter than your usual detector b/c of the transformer.

No ports to fastai yet that I know of but I have a basic colab in progress here:

I have a PR in for making it a bit easier to train custom datasets (there is no num_classes as a param in DETR repo) but I will probably just proceed with showing the code changes to do your own training this weekend.

Nice Work!

Cheers mrfabulous1