Hi all,

I am trying to replicate 2018 lesson 8 using fastai v1.

The idea in 2018 lesson 8 is simple: use ResNet-34 as the backbone and predict 4 coordinate outputs.

(Simple network, no multi anchor box, no RetinaNet, no Focal loss needed at the moment)

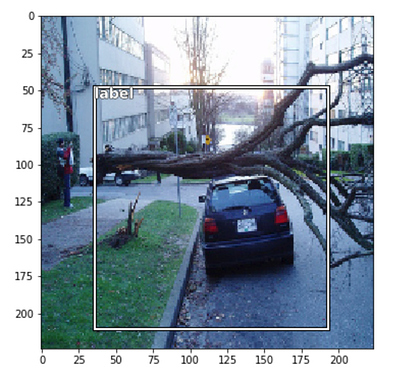

However, the model doesn’t predict very well: it always shows a big box right in the middle of the image, nowhere near the 2018 result.

At first I thought the FlowField handling might be the issue, causing my 4 predicted coordinates to be transformed back onto the image incorrectly when plotted.

Later I noticed that the L1Loss gets stuck at about 0.3. That makes me wonder: fastai v1 scales all bounding box coordinates to between -1 and 1, so if we use L1Loss() and take the mean of the loss, then when one coordinate is close to 0 there may be no way for the network to recognize which one is off, since we average over the 4 values. The loss gets spread evenly across the 4 coordinates, and the bounding box ends up always stuck in the center, which the network thinks is the optimal result. (This is just my guess.)

To explain what I mean, here is the ground truth and prediction

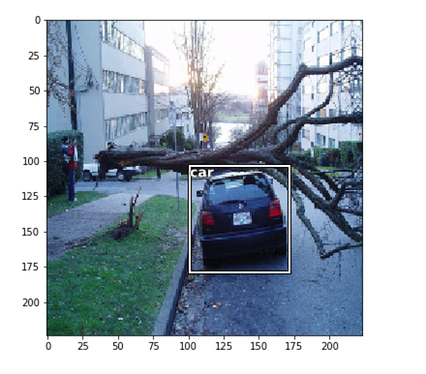

Ground Truth

Prediction

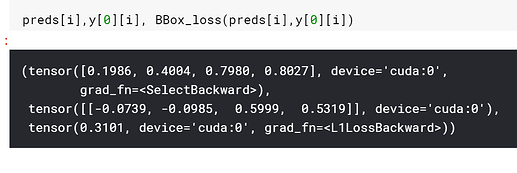

Here is the loss

My guess is that it is extremely hard for the network to figure out that the 0.4004 should actually be -0.0985, since with L1Loss the loss is spread across the 4 numbers.
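To make the numbers concrete, here is a minimal plain-Python sketch of what a mean-reduced L1 loss computes for one box. Only 0.4004 and -0.0985 come from my output above; the other three coordinates and the function names are made up for illustration.

```python
def l1_per_coordinate(pred, target):
    """Absolute error of each coordinate, before any averaging."""
    return [abs(p - t) for p, t in zip(pred, target)]


def l1_mean(pred, target):
    """Mean absolute error over all coordinates (what a mean-reduced L1 loss reports)."""
    errors = l1_per_coordinate(pred, target)
    return sum(errors) / len(errors)


# Only 0.4004 (prediction) and -0.0985 (ground truth) come from the post;
# the remaining three coordinates are hypothetical placeholders.
pred = [0.4004, -0.30, 0.50, 0.35]
target = [-0.0985, -0.45, 0.60, 0.55]

for name, err in zip(["c0", "c1", "c2", "c3"], l1_per_coordinate(pred, target)):
    print(f"{name}: |error| = {err:.4f}")
print(f"mean L1 = {l1_mean(pred, target):.4f}")
```

The mean is just the sum of the four per-coordinate terms divided by 4, so printing the individual terms next to the mean is a cheap way to check whether one coordinate really dominates the loss, and whether my averaging guess holds.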

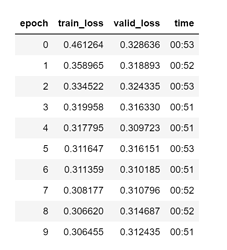

As you can see, after 10 epochs it starts to overfit, but the predictions are still far from ideal (and nowhere close to 2018 lesson 8).

One possible solution I can think of is to override the DataLoader so that the (-1, 1) system is turned back into regular coordinates. I don’t think the data block API currently allows this, since ObjectCategoryProcessor doesn’t let you specify scale=False (or is there a way to do so?)
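A minimal sketch of the conversion I have in mind, in plain Python. It assumes the box is ordered (top, left, bottom, right) and that -1 maps to pixel 0 and 1 maps to the full image height or width; both are my assumptions about the scaling, and `unit_to_pixel_bbox` is a hypothetical helper, not a fastai function.

```python
def unit_to_pixel_bbox(box, height, width):
    """Map a box from the (-1, 1) system back to pixel coordinates.

    Assumption: `box` is (top, left, bottom, right), with -1 at pixel 0
    and +1 at the full image size along that axis.
    """
    top, left, bottom, right = box
    return [
        (top + 1) * height / 2,     # y coordinates scale with the image height
        (left + 1) * width / 2,     # x coordinates scale with the image width
        (bottom + 1) * height / 2,
        (right + 1) * width / 2,
    ]


# Hypothetical box on a 224x224 image
print(unit_to_pixel_bbox([-0.5, -0.25, 0.5, 0.75], height=224, width=224))
# prints [56.0, 84.0, 168.0, 196.0]
```

If this mapping is right, it could be applied to both targets and predictions just before the loss or the plot, without touching the data pipeline itself.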

But this would take a lot of time, and I think there must be a reason that fastai scales to (-1, 1); my guess is that it lets data augmentation transform the coordinates correctly. But in that case, how should I define the L1Loss?

Thanks in advance, any help is appreciated