Hi gang. We’ve written a little Pytorch tutorial which, if approved, may become an official pytorch v1 tutorial once it’s released. So I want to make sure it’s pretty good!  Let me know if you have any thoughts about how to make this better:

Let me know if you have any thoughts about how to make this better:

This is fantastic @jeremy and team!

It is lucid, written with a beginner in mind and easy to follow.

You do describe DataLoaders here:

Pytorch's DataLoader is responsible for managing batches. You can create a DataLoader from any Dataset.

DataLoader makes it easier to iterate over batches. Rather than having to use train_ds[i*bs : i*bs+bs], the DataLoader gives us each minibatch automatically.

I’d love a quick mention on why automated minibatches are better e.g:

- Can this help train on datasets which don’t fit in memory?

- Can this improve GPU utilization? By having GPU not wait on CPU for I/O?

- Isn’t an array lookup O(1) - why should I use this instead?

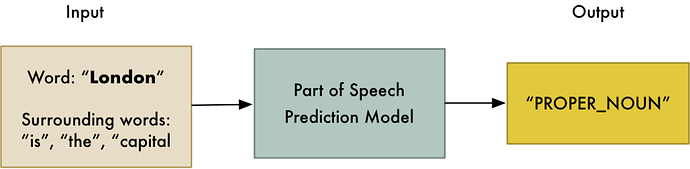

Maybe having a few images is quite helpful to mentally prepare on what’s next?

Example image:

This is from an Adam Geitgey tutorial on NLP.

Is there an environment.yml or requirements.txt for us to ensure we are using appropriate versions of the various python packages?

I just ran through this just before. Apart from a few wording typos the work is great. I am going to directly implement these tools in my work. Thank you so much for this.

This is great.

I don’t know if this is within the scope of what you’re envisioning for the document but I think it would be useful to show how to pull weights, gradients and activations out of the model.

This is an amazing tutorial. Nothing much to add except for a few minor points.

- We can remove

import mathand usenp.sqrtinstead. - In Mnist Logistic, have

self.lin = nn.Linear(784, 10, bias=True). to specifically show that bias is added. - In addition to

nn.Sequential, have a small section onnn.ModuleList.nn.ModuleListis pretty amazing when you want to repeat the same type of layer, but don’t want to define it multiple times. - I am not sure if I am understanding the

preprocesspart correctly. It seems that the preprocess stuff is making the image into (B x 1 x 28 x 28). However, if the image is of size say 56 x 56, it should make it B x 1 x 56 x 56 right? Currently it will make it (4B x 1 x 28 x 28). And I am not very sure if that is the intended. If it is, a little more explanation would be appreciated (I think).

Things I really loved:

- Lambda Module. While I never used it before, it was nice to see some examples.

- Wrapped Data Loader. Again found this pretty fantastic. There are cases where I had to resort to some adhoc methods, but having it in the Wrapped Data Loader itself, would be better.

- Simple definition of NLL loss. I would have probably written the same stuff with an additional 2-3 wasteful lines.

- The refactoring stuff and going bottom-up is a lovely idea.

MNIST data is a single 28*28=768 long vector. We convert it in to an image.

That was thanks to @radek

I’m not sure how Facebook is planning to host this - I’ll try to make sure that there’s something suitable.

It’s simply a refactoring which reduces the lines of code we have to write.

This looks really good. Covers all the basic essentials for building neural networks with pytorch in a very structured and thoughtful manner.

A few suggestions that might improve the understanding further:

- Can the various torch modules being used/explained in the notebook (torch.nn, torch.nn.functional, torch.autograd, torch.optim, torch.utils etc) be introduced initially with a brief overview in a separate section. This gives an overall idea of what imp modules are available in pytorch, what they do and what is being covered in this notebook etc.

- The one that @nirantk already mentioned about data loaders. To be more specific, can the usage of generator inside dataloader be explained as well since it helps with very imp things like reading large datasets

- This might be an overkill but let me put it anyway. Can we add how to handle variable sized inputs using a data loader. Knowing how this can be done comes in handy in certain scenarios, for e.g. during test time where we would like to predict on images with different sizes without cropping, doing pixel wise classification where scaling back on predictions is not desired.

A discussion thread on pytorch forum regarding the same

I love it! It came at a perfect time – I am trying reconstruct the recommendation systems from the lectures using only pytorch, and this notebook really helped me understand datasets/dataloaders.

One question:

- When including validation, you add

model.train()andmodel.eval()to the code. I looked at the documentation and it seems to turn things like dropout on/off. I don’t understand what it is doing in this context though.

Yup basically it’s just for batchnorm and dropout (or any other layer types that have different behaviour at train vs test time).

That’s a good idea. Maybe I’ll add a summary at the end.

I think it’s almost too compact – a tutorial reader would definitely benefit from a short comment of this snippet.

This tutorial is really excellent! I’ve looked at a few recently, and none even came close in terms of discussing the concepts step by step.

In this spirit, I think there should be a comment about the first uses of model.train() and .eval().

The only think I dislike about the tutorial is the loss_batch function, which to me makes the code less clean/readable, but that’s just personal taste.

If it were a DL tutorial, I’d certainly agree - but I’m trying to keep this to just being a pytorch tutorial, so really not explaining any implementation details like that.

OK I’ve added that now.

I’ve added a closing section with this summary now.

Excellent tutorial, just what I needed.

+1 to add a brief description of nn.ModuleList, just reading this comment helped me clarify some ideas.

It’s can be a useful class but I don’t think it belongs in this particular tutorial.

(BTW, I almost never use it any more - I find nn.Sequential(*list) nearly always better.)