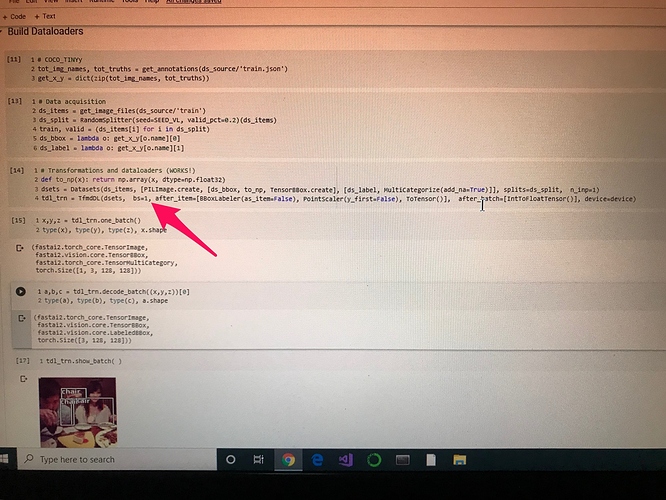

I am trying to use the mid-level fastai2 API, such as `Datasets`, to create dataloaders for object detection. After a long struggle, I have managed to build a working piece of code using the COCO_TINY dataset (it works with Pascal too); however, I have two serious unsolved problems. The code is as follows:

Problem One

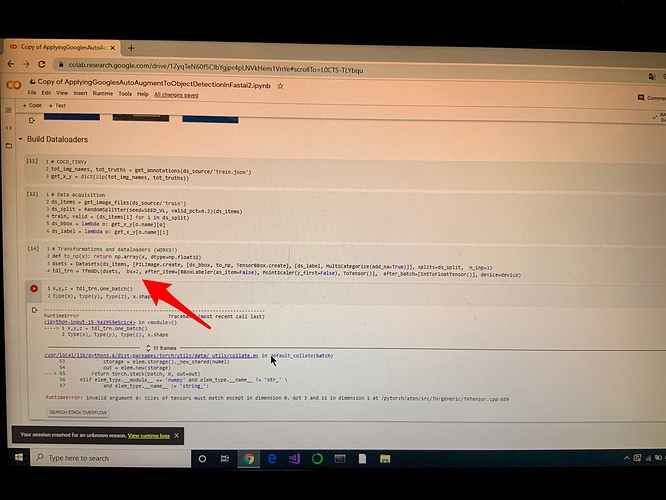

The code above works ONLY when the batch size is set to 1 (`bs=1`). Any value greater than 1 causes any subsequent command, such as `show_batch`, to crash. An example of the problem is shown below:

Problem Two

I need to resize both the image and the corresponding bounding box, and I cannot find any way of doing this. The documentation, in the Core vision section, states: “The safest way that will work across applications is to always use them as `tuple_tfms`. For instance, if you have points or bounding boxes as targets and use `Resize` as a single-item transform, when you get to `PointScaler` or `BBoxScaler` (which are tuple transforms) you won’t have the correct size of the image to properly scale your points.” I simply cannot find `tuple_tfms` anywhere. I tried adding `Resize` to `after_batch`, but it does not work. Furthermore, I assume that I also need to implement a `collate_fn` to batch the images and their boxes together. How can I accomplish this?
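To make the goal concrete, here is a minimal plain-Python sketch of the behaviour I am after. It is not fastai code: `scale_boxes` and `pad_boxes` are hypothetical helper names, and I am assuming boxes are stored as pixel coordinates `(x_min, y_min, x_max, y_max)` and sizes as `(width, height)`. The padding step reflects my guess that batching needs every image to carry the same number of boxes; I have not confirmed that this is what causes the crash in Problem One.

```python
from typing import List, Sequence, Tuple

# Assumed box layout (not taken from fastai): pixel coordinates.
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def scale_boxes(
    boxes: Sequence[Box],
    old_size: Tuple[int, int],
    new_size: Tuple[int, int],
) -> List[Box]:
    """Rescale boxes when an image goes from old_size to new_size (width, height)."""
    sx = new_size[0] / old_size[0]  # horizontal scale factor
    sy = new_size[1] / old_size[1]  # vertical scale factor
    return [(x0 * sx, y0 * sy, x1 * sx, y1 * sy) for x0, y0, x1, y1 in boxes]


def pad_boxes(
    batch: Sequence[Sequence[Box]],
    pad_box: Box = (0.0, 0.0, 0.0, 0.0),
) -> List[List[Box]]:
    """Pad each image's box list to the longest one so the batch is rectangular."""
    longest = max((len(boxes) for boxes in batch), default=0)
    return [list(boxes) + [pad_box] * (longest - len(boxes)) for boxes in batch]


# Made-up example: a 640x480 image with one box, resized to 320x240,
# batched together with an image that has no boxes at all.
resized = scale_boxes(
    [(64.0, 48.0, 320.0, 240.0)], old_size=(640, 480), new_size=(320, 240)
)
padded = pad_boxes([resized, []])
```

What I am looking for is the fastai2 way of getting the same effect, so that the resize is applied to the image and its boxes as a pair rather than to the image alone.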

Any guidance will be appreciated…