Hi,

I can’t get multi-label classification to train on tabular data.

I have 5 categorical targets for each row of data.

The y target columns all have dtype “CategoricalDtype”.

When using lr_find with the following code, I get “ValueError: Expected input batch_size (64) to match target batch_size (320).”

This is the code I used:

from fastai.tabular.all import *

to = TabularPandas(df, procs=[Categorify, Normalize],
                   cat_names=cat_cols,
                   cont_names=cont_cols,
                   y_names=y_names,  # list of the 5 categorical target columns
                   splits=splits)

dls = to.dataloaders(bs=64)

learn = tabular_learner(dls, metrics=[accuracy])

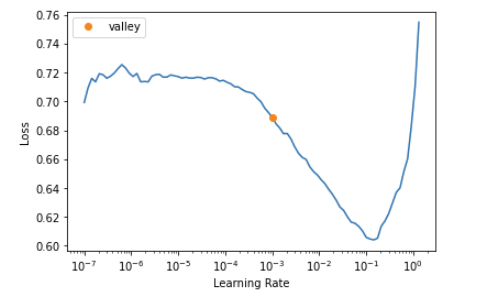

learn.lr_find()

This is the full traceback:

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

Input In [345], in <cell line: 1>()

----> 1 learn.lr_find()

File /opt/conda/lib/python3.9/site-packages/fastai/callback/schedule.py:289, in lr_find(self, start_lr, end_lr, num_it, stop_div, show_plot, suggest_funcs)

287 n_epoch = num_it//len(self.dls.train) + 1

288 cb=LRFinder(start_lr=start_lr, end_lr=end_lr, num_it=num_it, stop_div=stop_div)

--> 289 with self.no_logging(): self.fit(n_epoch, cbs=cb)

290 if suggest_funcs is not None:

291 lrs, losses = tensor(self.recorder.lrs[num_it//10:-5]), tensor(self.recorder.losses[num_it//10:-5])

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:222, in Learner.fit(self, n_epoch, lr, wd, cbs, reset_opt)

220 self.opt.set_hypers(lr=self.lr if lr is None else lr)

221 self.n_epoch = n_epoch

--> 222 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:164, in Learner._with_events(self, f, event_type, ex, final)

163 def _with_events(self, f, event_type, ex, final=noop):

--> 164 try: self(f'before_{event_type}'); f()

165 except ex: self(f'after_cancel_{event_type}')

166 self(f'after_{event_type}'); final()

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:213, in Learner._do_fit(self)

211 for epoch in range(self.n_epoch):

212 self.epoch=epoch

--> 213 self._with_events(self._do_epoch, 'epoch', CancelEpochException)

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:164, in Learner._with_events(self, f, event_type, ex, final)

163 def _with_events(self, f, event_type, ex, final=noop):

--> 164 try: self(f'before_{event_type}'); f()

165 except ex: self(f'after_cancel_{event_type}')

166 self(f'after_{event_type}'); final()

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:207, in Learner._do_epoch(self)

206 def _do_epoch(self):

--> 207 self._do_epoch_train()

208 self._do_epoch_validate()

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:199, in Learner._do_epoch_train(self)

197 def _do_epoch_train(self):

198 self.dl = self.dls.train

--> 199 self._with_events(self.all_batches, 'train', CancelTrainException)

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:164, in Learner._with_events(self, f, event_type, ex, final)

163 def _with_events(self, f, event_type, ex, final=noop):

--> 164 try: self(f'before_{event_type}'); f()

165 except ex: self(f'after_cancel_{event_type}')

166 self(f'after_{event_type}'); final()

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:170, in Learner.all_batches(self)

168 def all_batches(self):

169 self.n_iter = len(self.dl)

--> 170 for o in enumerate(self.dl): self.one_batch(*o)

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:195, in Learner.one_batch(self, i, b)

193 b = self._set_device(b)

194 self._split(b)

--> 195 self._with_events(self._do_one_batch, 'batch', CancelBatchException)

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:164, in Learner._with_events(self, f, event_type, ex, final)

163 def _with_events(self, f, event_type, ex, final=noop):

--> 164 try: self(f'before_{event_type}'); f()

165 except ex: self(f'after_cancel_{event_type}')

166 self(f'after_{event_type}'); final()

File /opt/conda/lib/python3.9/site-packages/fastai/learner.py:176, in Learner._do_one_batch(self)

174 self('after_pred')

175 if len(self.yb):

--> 176 self.loss_grad = self.loss_func(self.pred, *self.yb)

177 self.loss = self.loss_grad.clone()

178 self('after_loss')

File /opt/conda/lib/python3.9/site-packages/fastai/losses.py:35, in BaseLoss.__call__(self, inp, targ, **kwargs)

33 if targ.dtype in [torch.int8, torch.int16, torch.int32]: targ = targ.long()

34 if self.flatten: inp = inp.view(-1,inp.shape[-1]) if self.is_2d else inp.view(-1)

---> 35 return self.func.__call__(inp, targ.view(-1) if self.flatten else targ, **kwargs)

File /opt/conda/lib/python3.9/site-packages/torch/nn/modules/module.py:1110, in Module._call_impl(self, *input, **kwargs)

1106 # If we don't have any hooks, we want to skip the rest of the logic in

1107 # this function, and just call forward.

1108 if not (self._backward_hooks or self._forward_hooks or self._forward_pre_hooks or _global_backward_hooks

1109 or _global_forward_hooks or _global_forward_pre_hooks):

-> 1110 return forward_call(*input, **kwargs)

1111 # Do not call functions when jit is used

1112 full_backward_hooks, non_full_backward_hooks = [], []

File /opt/conda/lib/python3.9/site-packages/torch/nn/modules/loss.py:1163, in CrossEntropyLoss.forward(self, input, target)

1162 def forward(self, input: Tensor, target: Tensor) -> Tensor:

-> 1163 return F.cross_entropy(input, target, weight=self.weight,

1164 ignore_index=self.ignore_index, reduction=self.reduction,

1165 label_smoothing=self.label_smoothing)

File /opt/conda/lib/python3.9/site-packages/torch/nn/functional.py:2982, in cross_entropy(input, target, weight, size_average, ignore_index, reduce, reduction, label_smoothing)

2916 r"""This criterion computes the cross entropy loss between input and target.

2917

2918 See :class:`~torch.nn.CrossEntropyLoss` for details.

(...)

2979 >>> loss.backward()

2980 """

2981 if has_torch_function_variadic(input, target, weight):

-> 2982 return handle_torch_function(

2983 cross_entropy,

2984 (input, target, weight),

2985 input,

2986 target,

2987 weight=weight,

2988 size_average=size_average,

2989 ignore_index=ignore_index,

2990 reduce=reduce,

2991 reduction=reduction,

2992 label_smoothing=label_smoothing,

2993 )

2994 if size_average is not None or reduce is not None:

2995 reduction = _Reduction.legacy_get_string(size_average, reduce)

File /opt/conda/lib/python3.9/site-packages/torch/overrides.py:1394, in handle_torch_function(public_api, relevant_args, *args, **kwargs)

1388 warnings.warn("Defining your `__torch_function__ as a plain method is deprecated and "

1389 "will be an error in PyTorch 1.11, please define it as a classmethod.",

1390 DeprecationWarning)

1392 # Use `public_api` instead of `implementation` so __torch_function__

1393 # implementations can do equality/identity comparisons.

-> 1394 result = torch_func_method(public_api, types, args, kwargs)

1396 if result is not NotImplemented:

1397 return result

File /opt/conda/lib/python3.9/site-packages/fastai/torch_core.py:341, in TensorBase.__torch_function__(self, func, types, args, kwargs)

339 convert=False

340 if _torch_handled(args, self._opt, func): convert,types = type(self),(torch.Tensor,)

--> 341 res = super().__torch_function__(func, types, args=args, kwargs=kwargs)

342 if convert: res = convert(res)

343 if isinstance(res, TensorBase): res.set_meta(self, as_copy=True)

File /opt/conda/lib/python3.9/site-packages/torch/_tensor.py:1142, in Tensor.__torch_function__(cls, func, types, args, kwargs)

1139 return NotImplemented

1141 with _C.DisableTorchFunction():

-> 1142 ret = func(*args, **kwargs)

1143 if func in get_default_nowrap_functions():

1144 return ret

File /opt/conda/lib/python3.9/site-packages/torch/nn/functional.py:2996, in cross_entropy(input, target, weight, size_average, ignore_index, reduce, reduction, label_smoothing)

2994 if size_average is not None or reduce is not None:

2995 reduction = _Reduction.legacy_get_string(size_average, reduce)

-> 2996 return torch._C._nn.cross_entropy_loss(input, target, weight, _Reduction.get_enum(reduction), ignore_index, label_smoothing)

ValueError: Expected input batch_size (64) to match target batch_size (320).
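
For what it's worth, 320 = 64 × 5, so it looks like the five targets per row get flattened into one long vector while the predictions keep one row per sample. Below is a minimal sketch in plain PyTorch that reproduces the same mismatch. It assumes the model emits a single set of logits per row; the names `preds`, `targs` and `n_classes` (and the value 3) are made up for illustration and are not taken from my data or from fastai internals.

```python
import torch
import torch.nn.functional as F

# bs and n_targets mirror the post; n_classes is an arbitrary illustrative value.
bs, n_targets, n_classes = 64, 5, 3

preds = torch.randn(bs, n_classes)                     # one row of logits per sample
targs = torch.randint(0, n_classes, (bs, n_targets))   # five class indices per sample

# A flattening loss reshapes the targets to 1-D, as targ.view(-1) does in the traceback.
flat_targs = targs.view(-1)
print(preds.shape[0], flat_targs.shape[0])

# Cross entropy then sees 64 prediction rows against 320 target entries.
try:
    F.cross_entropy(preds, flat_targs)
    err = None
except (ValueError, RuntimeError) as e:
    err = str(e)
print(err)
```

If this is the right reading, the mismatch comes from the target shape (64, 5) rather than from the batch size setting itself.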

Thanks in advance!