Hi!

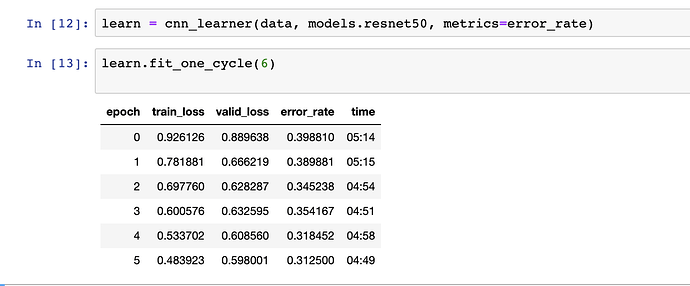

I’m playing with some simple vision CNNs and trying something similar to what someone tried between class1 and class2: categorizing similar-looking relatives. After 6 epochs I’m seeing something like the image below:

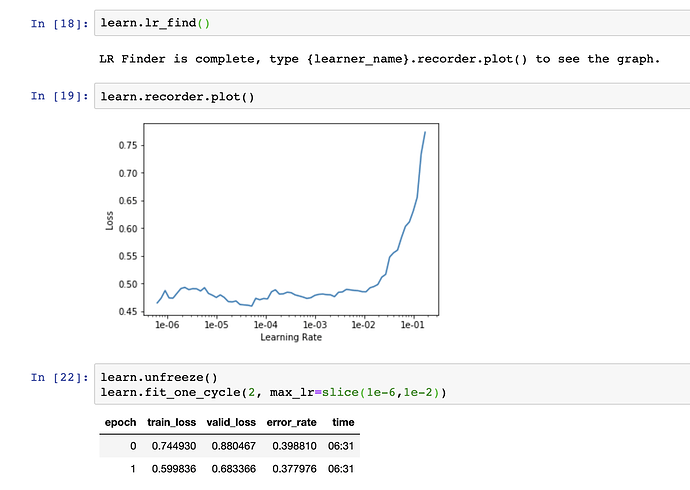

What is weird is that after adjusting the learning rate, the performance seems to get much worse.

This seems counterintuitive. I’m likely doing something wrong but I can’t figure out what.

Separately, which pretrained models are known to perform better for face recognition? I saw mentions of VGGFace2. Even if it’s overkill, it might be good practice to try using it with fast.ai.

Thank you!