Hi @zache,

Thanks for all the feedback (and excellent data chart) and very happy to hear that some of our techniques here were of benefit for you!

I definitely appreciate all the feedback, as it’s great to see what is and isn’t working on things beyond Woof/Nette.

There are more new ideas being tested, so I may have some new stuff for you to try soon as well.

Thanks again for the feedback!

Less

Wow, that’s very odd. I haven’t tried turning BN off - the only thing I’ve tested is the switch for order of BN vs Mish (i.e. Mish first, then BN).

I wonder if putting running batch norm in there might glean some better insight?

I will try that soon. I’m currently working on preparing a difficult keypoints dataset, as fastai lacks one (I believe), and I’m making it similar to imageWoof/nette (hopefully Jeremy likes the idea?)

A new paper came out yesterday that looks very compelling:

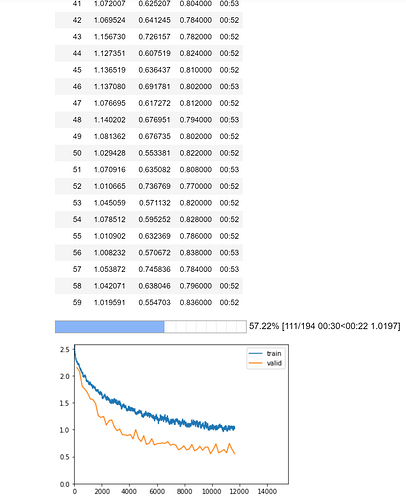

I’ve made a new beta drop of Ranger that uses it instead of RAdam and am testing now. First impressions are that it greatly stabilizes training in the mid-range and beyond where things get a lot more erratic on average without it.

I’m running an 80-epoch run right now with it, as they show that this calibration outperforms SGD+Momentum over the long run.

(use the Ranger913A.py file).

You should definitely make a blogpost about this summarizing your findings. Would be awesome. Do you have a repo on this?

@LessW2020 I am trying to use running batch norm according to this post: Running batch norm tweaks. When it runs update_stats I get the following:

    --> 24 s = x.sum(dims, keepdim=True)
        25 ss = (x*x).sum(dims, keepdim=True)
        26 c = self.count.new_tensor(x.numel()/nc)

    IndexError: Dimension out of range (expected to be in range of [-2, 1], but got 2)

I believe this has to do with how tabular tensors are passed in as two separate entities (cont and cat vars). Where should I go about fixing this?
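For what it’s worth, the error says the valid dims are [-2, 1], which means the tensor reaching that line is 2D (batch, features), while the sum asks for dim 2. Below is a minimal sketch of one possible workaround. It assumes `dims` was hard-coded for 4D image batches, e.g. `(0, 2, 3)`, which the traceback does not show, and the helper names `reduce_dims` and `batch_sums` are hypothetical. The idea is to reduce over every dim except the feature dim 1:

```python
import torch

def reduce_dims(x):
    """Return every dim except dim 1 (channels/features).

    Assumption: dim 0 is the batch and dim 1 holds channels or features.
    """
    return tuple(d for d in range(x.dim()) if d != 1)

def batch_sums(x):
    """Per-feature sum and sum of squares for 2D or 4D inputs."""
    dims = reduce_dims(x)
    s = x.sum(dims, keepdim=True)
    ss = (x * x).sum(dims, keepdim=True)
    return s, ss

# Tabular activations: (batch, features)
s_tab, ss_tab = batch_sums(torch.randn(64, 10))
print(s_tab.shape)

# Image activations: (batch, channels, height, width)
s_img, ss_img = batch_sums(torch.randn(8, 3, 4, 4))
print(s_img.shape)
```

If this is the cause, any buffers or parameters the layer keeps in a 4D shape such as `(1, nf, 1, 1)` would likely need a matching `(1, nf)` shape for tabular inputs as well. That part is not covered by this sketch.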

Super interesting thread and discussion!

If I understood the previous posts correctly, this has been the best model setup so far:

- Mish activation function

- change the order to Conv-Mish-BN (this order seems to be already included in the ConvLayer class of fastai v2 dev.)

- hyperparameters, optimizer, and training schedule as in the notebook
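To make the setup above concrete, here is a minimal PyTorch sketch of Mish, defined as x * tanh(softplus(x)), and a block in Conv-Mish-BN order. The names `Mish` and `conv_mish_bn` and the layer sizes are illustrative assumptions. This is not the fastai ConvLayer or the code from the notebook.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class Mish(nn.Module):
    """Mish activation: x * tanh(softplus(x))."""
    def forward(self, x):
        return x * torch.tanh(F.softplus(x))

def conv_mish_bn(in_ch, out_ch, ks=3, stride=1):
    """Conv -> Mish -> BatchNorm, i.e. BN applied after the activation."""
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, ks, stride=stride, padding=ks // 2),
        Mish(),
        nn.BatchNorm2d(out_ch),
    )

# Illustrative sizes only
block = conv_mish_bn(3, 16)
out = block(torch.randn(2, 3, 8, 8))
print(out.shape)
```

Swapping the last two entries of the `nn.Sequential` gives the more common Conv-BN-Mish order, which makes it easy to compare the two variants.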

I tried my Squeeze-Excite-XResNet implementation with AdaptiveConcatPool2d with Mish based on the fastai XResNet and got the following results after 5 runs:

0.638, 0.624, 0.668, 0.700, 0.686 --> average: 0.663

… so this was not really improving the SimpleSelfAttention approach from the notebook.

Then I combined the MXResNet from LessW2020 with the SE block to get the SEMXResNet and got the following results (+ Ranger + SimpleSelfAttention + Flatten Anneal):

0.748, 0.748, 0.746, 0.772, 0.718 --> average: 0.746

And with BN after the activation:

0.728, 0.768, 0.774, 0.738, 0.752 --> average: 0.752

And with AdaptiveConcatPool2d:

0.620, 0.628, 0.684, 0.714, 0.700 --> average: 0.669
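With only 5 runs per setup, it may help to look at the spread next to the mean when judging whether a gap between two setups is meaningful. Here is a small standard-library sketch over the accuracies listed above. The dictionary labels and the `summarize` helper are shorthand introduced for this example.

```python
from statistics import mean, stdev

# Accuracies copied from the runs reported above
runs = {
    "SE-XResNet + AdaptiveConcatPool2d + Mish": [0.638, 0.624, 0.668, 0.700, 0.686],
    "SEMXResNet": [0.748, 0.748, 0.746, 0.772, 0.718],
    "SEMXResNet, BN after activation": [0.728, 0.768, 0.774, 0.738, 0.752],
    "SEMXResNet + AdaptiveConcatPool2d": [0.620, 0.628, 0.684, 0.714, 0.700],
}

def summarize(accs):
    """Return (mean, sample standard deviation) for a list of accuracies."""
    return mean(accs), stdev(accs)

for name, accs in runs.items():
    m, s = summarize(accs)
    print(f"{name}: mean={m:.3f}, std={s:.3f}")
```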

With the increased parameter count (e.g., SE block and/or double the parameters from the FC head input stage after the AdaptiveConcatPool2d) the 5 epochs are very likely not enough to really compare it to the models with fewer parameters, as it will need more time to train (like mentioned above).
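The doubling mentioned above can be seen in a small sketch: concatenating adaptive max and average pooling along the channel dim gives the FC head twice as many input features. This is a minimal reimplementation for illustration, assumed to mirror what fastai’s AdaptiveConcatPool2d does, and the tensor sizes are made up.

```python
import torch
import torch.nn as nn

class AdaptiveConcatPool2d(nn.Module):
    """Concatenate adaptive max pooling and adaptive average pooling."""
    def __init__(self, size=1):
        super().__init__()
        self.ap = nn.AdaptiveAvgPool2d(size)
        self.mp = nn.AdaptiveMaxPool2d(size)

    def forward(self, x):
        # Output has 2x the input channels
        return torch.cat([self.mp(x), self.ap(x)], dim=1)

feats = torch.randn(2, 8, 4, 4)  # illustrative (batch, channels, h, w)
avg_only = nn.AdaptiveAvgPool2d(1)(feats)
concat = AdaptiveConcatPool2d()(feats)
print(avg_only.shape, concat.shape)
```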

I have also seen the thread from LessW2020 about Res2Net. Has anybody already tried it in a similar fashion and got some (preliminary) results?

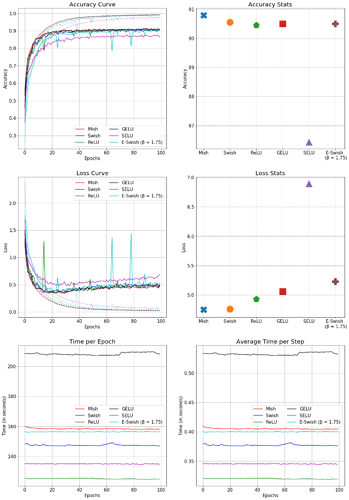

Just completed a thorough benchmark on SE-ResNet-50 on CIFAR-10.

Parameters:

epochs = 100

batch_size = 128

learning_rate = 0.001

Optimizer = Adam

Observation notes: Mish’s loss is extremely stable compared to the others, especially E-Swish and ReLU. Mish is faster than GELU. Mish is also the only activation function that crossed 91% in the 2 runs, while the other activation functions reached a maximum of 90.7%.

Mish’s highest test top-1 accuracy: 91.248%. SELU performed the worst throughout.

(Please click on the picture to view it in larger aspect)

I think you mixed up the axis labels on the accuracy curve and loss curve graphs. Epochs should be x, not y.

Corrected!

Not yet – I was going to wait until after I am finished with the project since this was just a small part of something much bigger. But I’ll definitely share it here when I do!

I just want to note that I reran my tabular models, and here is one thing I noticed. The increase in accuracy appeared specifically when I included information via time-based feature engineering. When the paper is published I will be able to share more details, but I definitely notice the change specifically there. In all other instances there was no radical difference. The moment that was introduced, accuracy shot from 92% to 97% with negligible error. This, I believe, is what was missing. I will note that this time-based feature engineering is not like Rossmann. I will try plugging in a Mish activation, as I believe it could shine here.

I would really like some more in depth on this. Are you working on a paper as per what you wrote? If yes, do send the arXiv link, once it’s up.

I am, hence why I can’t say too much on here right now! But I will definitely send the arXiv once it’s up.

Awesome. Do provide the Mish scores once you have them.

The reason I was hoping for Mish is that I have been thoroughly testing the new optimizer + Mish on a variety of datasets with no changes whatsoever. But with this dataset I saw direct changes using the new optimizer + scheduler. Sadly, in the end the only bit that did not change anything was Mish. My model was set up with two hidden layers of 1000 and 500. I ran it in three separate areas where I saw various improvements (with the optimizer and in general), but to no avail.

Sorry @Diganta! The tabular mystery is still a thing! (because this whole experiment has gotten me re-thinking tabular models as a whole)

I do agree that tabular models are a mystery of their own. But I would really like to see your progress.

I’ll send you a DM

I’ve been out of the loop here for a bit as I have a lot of consulting work in progress, but @muellerzr happy to see you might have a paper out soon!

Re: tabular data - here’s a recent paper and apparently open source code that may be of great interest:

I want to test it out but too swamped atm.

Also, I wanted to add that I’m having really good results with the Res2Net Plus architecture and the Ranger beta optimizer. I’ll have an article out soon on Res2Net Plus, and then one on the Ranger beta and the paper it’s based on. It trains really well: not fast enough for leaderboards, but excellent for production work.

Hope you guys are doing great!

@LessW2020 very good find! I’ll try it out and report back. Hope you are doing well too (and not too too swamped!)

Only bit that concerns me: “Our implementation is memory inefficient and may require a lot of GPU memory to converge”