I’ve watched Lecture 3 of the fastai course and am experimenting with the notebook "How does a neural network work?" from the lesson resources.

The notebook ends with a DIY prompt asking you to build a neural net from three ReLUs that approximates a quadratic function.
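For reference, a sum of three ReLUs is a piecewise-linear curve with up to three kinks. The sketch below is a NumPy stand-in, not the notebook's code: it assumes `rectified_linear(m, b, x)` behaves like the lesson's helper (the line `m*x + b` with negative values clipped to zero), while the notebook itself works with PyTorch tensors.

```python
import numpy as np

def rectified_linear(m, b, x):
    # Assumed equivalent of the lesson helper: a line m*x + b, clipped below at zero (a ReLU)
    return np.clip(m * x + b, 0.0, None)

def triple_relu(m1, b1, m2, b2, m3, b3, x):
    # Sum of three ReLUs: a piecewise-linear curve with up to three kinks
    return rectified_linear(m1, b1, x) + rectified_linear(m2, b2, x) + rectified_linear(m3, b3, x)

x = np.linspace(-2, 2, 5)  # [-2, -1, 0, 1, 2]
vals = triple_relu(-1.5, -1.5, 1.5, 1.5, 1.5, 1.5, x)  # the slider defaults used below
print(vals)  # expected values: 1.5, 0.0, 3.0, 6.0, 9.0
```

With these defaults the second and third ReLUs are identical, so only two distinct kinks appear; giving each ReLU different values lets the curve bend in three places.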

Here’s my code:

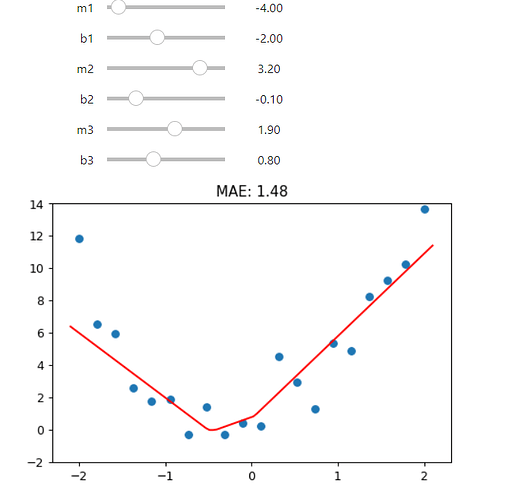

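```python
from functools import partial

import matplotlib.pyplot as plt
from ipywidgets import interact

# rectified_linear, mae, plot_function, x, y and f are defined in earlier cells of the notebook

def triple_relu(m1, b1, m2, b2, m3, b3, x):
    # Sum of three ReLUs, each with its own slope (m) and intercept (b)
    return rectified_linear(m1, b1, x) + rectified_linear(m2, b2, x) + rectified_linear(m3, b3, x)

@interact(m1=-1.5, b1=-1.5, m2=1.5, b2=1.5, m3=1.5, b3=1.5)
def plot_triple_relu(m1, b1, m2, b2, m3, b3):
    plt.scatter(x, y)
    loss = mae(f(x), y)
    plot_function(partial(triple_relu, m1, b1, m2, b2, m3, b3), ylim=(-2, 14), title=f"MAE: {loss:.2f}")
```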

The problem is that the MAE shown in the plot title doesn’t change when I move the sliders.

I want the plot to behave like the one Jeremy shows in the lecture, where the MAE updates as you move the sliders, so you can tell whether you’re moving in the right direction.
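One likely cause, offered tentatively: `loss = mae(f(x), y)` scores `f`, which (assuming it is still the notebook's target quadratic) never sees the slider values, so the loss is the same for every setting. The sketch below computes the loss from the three-ReLU prediction instead. It uses NumPy stand-ins for `rectified_linear` and `mae`, and a hypothetical noise-free target `3x^2 + 2x + 1`, rather than the notebook's own data.

```python
import numpy as np

def rectified_linear(m, b, x):
    # Assumed stand-in for the lesson helper: m*x + b clipped below at zero
    return np.clip(m * x + b, 0.0, None)

def triple_relu(m1, b1, m2, b2, m3, b3, x):
    return rectified_linear(m1, b1, x) + rectified_linear(m2, b2, x) + rectified_linear(m3, b3, x)

def mae(preds, acts):
    # Mean absolute error between predictions and targets
    return np.abs(preds - acts).mean()

x = np.linspace(-2, 2, 20)
y = 3 * x**2 + 2 * x + 1  # hypothetical target quadratic, no noise

def loss_for(m1, b1, m2, b2, m3, b3):
    # Score the model's own predictions, so the loss depends on the parameters
    preds = triple_relu(m1, b1, m2, b2, m3, b3, x)
    return mae(preds, y)

loss_a = loss_for(-1.5, -1.5, 1.5, 1.5, 1.5, 1.5)
loss_b = loss_for(-3.0, -1.5, 3.0, 1.5, 3.0, 1.5)
print(f"MAE: {loss_a:.2f} vs MAE: {loss_b:.2f}")
```

In the interactive version, the corresponding line inside `plot_triple_relu` would presumably become `loss = mae(triple_relu(m1, b1, m2, b2, m3, b3, x), y)`.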

Thanks for the help!