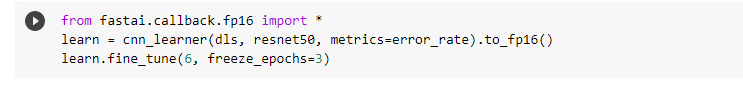

`fine_tune` has default values (see the documentation):

`Learner.fine_tune(epochs, base_lr=0.002, freeze_epochs=1, lr_mult=100, pct_start=0.3, div=5.0, lr_max=None, div_final=100000.0, wd=None, moms=None, cbs=None, reset_opt=False)`

If you do not specify a learning rate, it uses `base_lr=0.002`. That may not be optimal for your model and data. You are better off running `lr_find()` first and then choosing a learning rate based on its result.
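To make those defaults concrete, here is a small pure-Python sketch of the per-layer-group learning rates `fine_tune` ends up using. It is modeled on my reading of the fastai v2 source (train frozen with `slice(base_lr)`, then halve `base_lr`, unfreeze and train with `slice(base_lr/lr_mult, base_lr)`), so treat it as an approximation. The three parameter groups are an assumption (the real count depends on the model's splitter), the helper names are hypothetical, and the values are the peaks of the one-cycle schedule. Nothing here calls fastai.

```python
def even_mults(start, stop, n):
    """Geometrically spaced values from start to stop in n steps (modeled on fastai's helper)."""
    if n == 1:
        return [stop]
    step = (stop / start) ** (1 / (n - 1))
    return [start * step**i for i in range(n)]


def lrs_for_slice(lr, n_groups):
    """Expand a slice into one learning rate per parameter group (assumed behavior)."""
    if lr.start:
        # slice(lo, hi): spread geometrically from lo (earliest layers) to hi (head)
        return even_mults(lr.start, lr.stop, n_groups)
    # slice(hi): every group except the last gets hi/10, the head gets hi
    return [lr.stop / 10] * (n_groups - 1) + [lr.stop]


def fine_tune_lrs(base_lr=0.002, lr_mult=100, n_groups=3):
    """Peak per-group learning rates for the two phases of fine_tune (sketch).

    n_groups=3 is an assumption; the real number depends on the model.
    """
    # Phase 1: body frozen, so mainly the head's rate matters here
    frozen = lrs_for_slice(slice(base_lr), n_groups)
    # Phase 2: base_lr is halved, the model is unfrozen, discriminative rates apply
    base_lr /= 2
    unfrozen = lrs_for_slice(slice(base_lr / lr_mult, base_lr), n_groups)
    return frozen, unfrozen


frozen, unfrozen = fine_tune_lrs()
print("frozen phase:  ", [f"{lr:.0e}" for lr in frozen])
print("unfrozen phase:", [f"{lr:.0e}" for lr in unfrozen])
```

With the defaults this gives 2e-04, 2e-04, 2e-03 in the frozen phase and 1e-05, 1e-04, 1e-03 after unfreezing, so the earliest layers train `lr_mult` (100×) slower than the head. If `base_lr` itself is badly chosen, every one of these rates is off by the same factor, which is why picking it with `lr_find()` matters.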


I tried another dataset, and my error_rate when using discriminative learning rates is higher than my error_rate without them. What is happening with my model, and how can I fix it?

This may depend on many things:

- the learning rate you use (if it is not well suited to the specific network and dataset, training will do poorly)

- other hyperparameters

- the number of epochs

Also, you have both a training error and a validation error: how do they evolve over the epochs?
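One rough way to read those curves, sketched in plain Python: find the epoch with the best validation value, then check whether validation has stayed worse since then while training kept improving. The function names, the `patience` threshold and the values in the example are hypothetical; this is a heuristic, not fastai's or fastbook's definition of overfitting. It works on any per-epoch metric where lower is better, such as the loss or `error_rate`.

```python
def best_epoch(valid):
    """Index of the epoch with the lowest validation value."""
    return min(range(len(valid)), key=valid.__getitem__)


def looks_overfit(train, valid, patience=2):
    """Heuristic overfitting check on per-epoch values (lower is better).

    Flags overfitting when at least `patience` epochs have passed since the
    best validation value and the training value has still improved since then.
    """
    best = best_epoch(valid)
    epochs_since_best = len(valid) - 1 - best
    train_still_improving = train[-1] < train[best]
    return epochs_since_best >= patience and train_still_improving


# Hypothetical per-epoch losses, for illustration only
train = [0.9, 0.6, 0.4, 0.3, 0.2, 0.15]
valid = [0.8, 0.6, 0.5, 0.55, 0.6, 0.7]
print(looks_overfit(train, valid))  # True: validation bottomed out at epoch 2
```

In practice you would stop training around the best validation epoch, or train for fewer epochs, rather than keep going once this pattern shows up.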

Refer to fastbook to see whether you are overfitting and how to pick the right learning rate, …
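For intuition about what `lr_find()` does, here is a toy learning-rate range test on a one-parameter least-squares problem: the learning rate grows geometrically at every step, the loss is recorded, and the run stops once the loss exceeds 4× the best value seen. This is a simplified sketch, not fastai's implementation (fastai's `LRFinder` also smooths the loss and restores the model weights afterwards). The data is made up, and `suggest_lr` applies fastbook's rule of thumb: the learning rate at the minimum loss, divided by 10.

```python
def lr_range_test(xs, ys, start_lr=1e-4, end_lr=10.0, num_it=100, stop_factor=4.0):
    """Toy LR range test for y ~ w * x with full-batch gradient descent (sketch).

    Returns a list of (lr, loss) pairs, one per step, stopping early once the
    loss exceeds stop_factor times the best loss seen so far.
    """
    w = 0.0
    n = len(xs)
    mult = (end_lr / start_lr) ** (1 / (num_it - 1))  # geometric growth per step
    history, best = [], float("inf")
    for i in range(num_it):
        lr = start_lr * mult**i
        # Gradient of the mean squared error with respect to w
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= lr * grad
        loss = sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / n
        history.append((lr, loss))
        best = min(best, loss)
        if loss > stop_factor * best:  # loss is blowing up: stop the test
            break
    return history


def suggest_lr(history):
    """fastbook rule of thumb: learning rate at the minimum loss, divided by 10."""
    lr_at_min, _ = min(history, key=lambda pair: pair[1])
    return lr_at_min / 10


xs = [i / 10 for i in range(1, 21)]  # hypothetical toy inputs
ys = [3.0 * x for x in xs]           # exact targets, true weight w = 3
hist = lr_range_test(xs, ys)
print(f"stopped after {len(hist)} steps; suggested lr ~ {suggest_lr(hist):.3g}")
```

On this toy problem the loss falls steadily until the learning rate gets close to the point where gradient descent becomes unstable, then climbs quickly; dividing by 10 backs off into the stable region. A real network's loss curve is noisier, which is why fastai smooths it.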

It's hard to give a definitive answer without more details, sorry.


Thanks a lot. I think my data is bad; I created it with the Bing Search API.