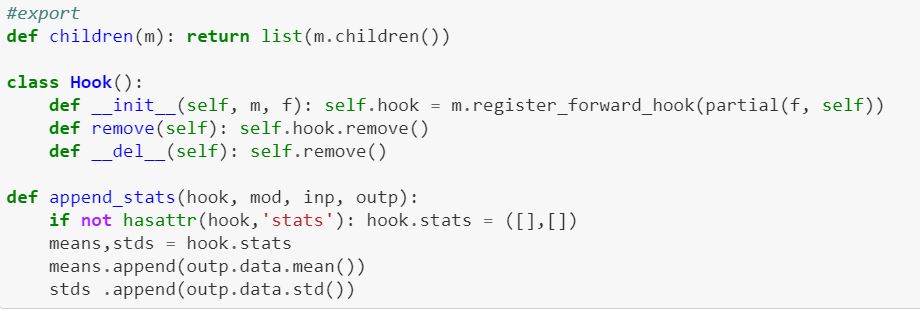

In the lesson 10 notebook, Jeremy introduces the concept of hooks and applies them to capture activation statistics (mean and standard deviation) across different layers.
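For anyone unfamiliar with hooks, here is a minimal sketch of the idea as I understand it. It is not the notebook's own hook class: `StatsHook`, the toy two-layer model, and the random input batches are all assumptions made up for illustration. The only real API relied on is PyTorch's `register_forward_hook`.

```python
import torch
import torch.nn as nn


class StatsHook:
    """Hypothetical helper: records the mean and std of a layer's output."""

    def __init__(self, module):
        self.means, self.stds = [], []
        # The callback runs after every forward pass through `module`.
        self.handle = module.register_forward_hook(self._record)

    def _record(self, module, inputs, output):
        out = output.detach()
        self.means.append(out.mean().item())
        self.stds.append(out.std().item())

    def remove(self):
        # Detach the hook so it stops recording.
        self.handle.remove()


torch.manual_seed(0)

# Toy conv net (an assumption, not the architecture used in the lesson).
model = nn.Sequential(
    nn.Conv2d(1, 8, kernel_size=3, padding=1), nn.ReLU(),
    nn.Conv2d(8, 16, kernel_size=3, padding=1), nn.ReLU(),
)

# One hook per conv layer.
hooks = [StatsHook(layer) for layer in model if isinstance(layer, nn.Conv2d)]

# Three forward passes on random batches stand in for training iterations.
for _ in range(3):
    model(torch.randn(4, 1, 28, 28))

for h in hooks:
    h.remove()
```

After this runs, each hook holds one mean and one std per forward pass, and plotting `h.means` against the iteration index gives the kind of curve discussed in this post.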

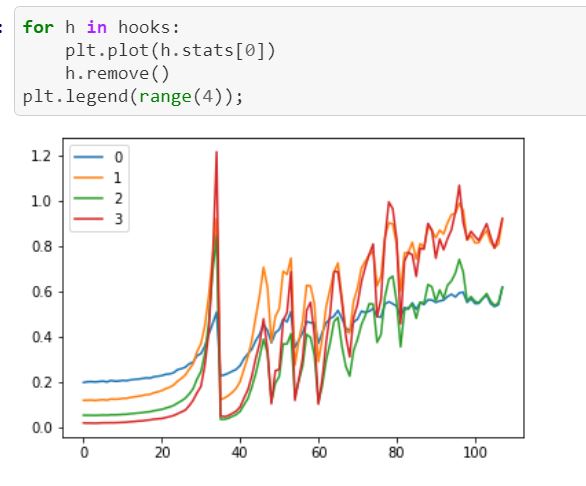

Below is what is shown when a conv net is trained without proper initialization. (I have also attached the related hook class snippet for reference.)

As shown, the activation means fluctuate noticeably (with abrupt jumps) over the iterations. I know Jeremy mentioned this phenomenon, but I am still not clear on why the fluctuation occurs and why it is bad.

Is there an intuitive explanation for this?