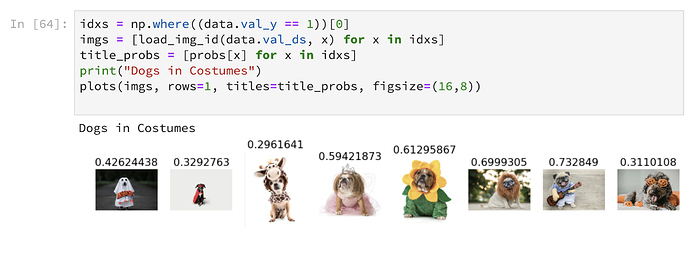

I ended up replacing the “valid/dogs” images with pictures of dogs in costumes to see how the existing dog/cat classifier would do & which features it might be relying on most heavily for classification. I think there’s something about the dogs’ eyes that makes them an important part of the classification, but I’d need to do more tests to be sure. The tongue on the dog wearing the pumpkin glasses didn’t seem to be a strong enough feature (although for me that’s one of the FIRST things I think of when I think about dogs!).

Nice idea!

As for the question of what features are important for the classification, I’m gonna recommend that article again:

Really a nice way of visualizing what was important for the classification. The visualization technique is called CAM (class activation mapping). An improved version, grad-CAM, works on any CNN model. I tested this with a generic PyTorch VGG model and it worked great. Unfortunately I didn’t have the time to test it with a ResNet. While the technique works for any kind of CNN, it requires a bit of setup…

Anyway, here are the original grad-CAM paper and the example implementation I used. Maybe you can apply it to your classification problem?
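To give a rough idea of what grad-CAM computes, here is a minimal NumPy sketch of just the combination step. It is not the linked implementation: the function name `grad_cam` and the toy arrays are made up for illustration, and it assumes you have already captured the feature maps of one conv layer and the gradient of the class score with respect to them (e.g. via forward/backward hooks in your framework).

```python
import numpy as np

def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Combine feature maps and their gradients into a grad-CAM heatmap.

    activations: (K, H, W) feature maps of the chosen conv layer
    gradients:   (K, H, W) gradient of the class score w.r.t. those maps
    """
    # Channel weights: average each channel's gradient over the spatial dims.
    weights = gradients.mean(axis=(1, 2))                      # shape (K,)
    # Weighted sum of the feature maps, then ReLU to keep positive evidence.
    cam = np.maximum(np.tensordot(weights, activations, axes=1), 0.0)  # (H, W)
    # Scale to [0, 1] for display; guard against an all-zero map.
    peak = cam.max()
    return cam / peak if peak > 0 else cam

# Toy stand-ins for what the hooks would capture (hypothetical values).
rng = np.random.default_rng(0)
acts = rng.random((4, 7, 7))
grads = rng.standard_normal((4, 7, 7))
heatmap = grad_cam(acts, grads)  # (7, 7) map, to be upsampled onto the image
```

In practice the heatmap is resized to the input image size and overlaid on the picture, which is where the “bit of setup” comes in.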

Greetings,

Oliver

Watch out: a deep neural network’s “understanding” of objects like dogs is quite naive. Your last statement, “for me that’s one of the FIRST things I think of when I think about”, could mislead you. Current DNNs neither think about nor interpret things. After long training phases, NNs such as CNNs memorize patterns in the input signal and end up correctly labeling a small subset of the entire reality; they then struggle with new observations, which are often out-of-distribution, namely not drawn from the training distribution.

Thanks so much for these resources! I’m constantly looking for ways to engage non-programmers with AI & see something like this simple activity as a way to encourage people to think more critically about “recognition”.

Last year I was working on some tutorial materials for Keras/TensorFlow in R (https://github.com/IBM/using-tensorflow-with-r). I had been experimenting with embedding the heatmap transformations used as examples in the Deep Learning with R book, so it’s good to see another view of this in Python.

This was an article Ali Zaidi shared with me at an R conference I attended in late 2017 when I was first wrapping my head around all of this stuff. It might be a little dated now, but I like this perspective: https://azure.github.io/learnAnalytics-DeepLearning-Azure/fooling-images.html

I thought this was also a nicely applied example in a similar vein that shows the potential impacts (face recognition as login): https://blogs.msdn.microsoft.com/uk_faculty_connection/2017/03/07/hijacking-faces-with-deep-learning-techniques-and-microsoft-azure/

I’m really glad you hit on that comment, I was hoping someone would. I mention it more as a comparison of modes of understanding. To you & me, humans who possess episodic memory & commonsense reasoning, “a deep neural network “understanding” of objects like dogs is quite naive”. Rather than treating these modes of understanding as separate, unrelated buckets, I see them as different lenses on the same thing (a “dog” or a “cat”). So, the algorithm’s “understanding” of a dog is a dot product & a prediction. My understanding is memories & sensory experiences. The collection of these multifaceted perspectives is what gives us “understanding” & I think this is the value of AI. As humans we use metaphors to integrate new experiences into our contexts… we are innate dot connectors. So rather than thinking of the algorithm’s definition as separate from my own, I think of it as another perspective that I can integrate into my own.

Paintings, poems, stories, & even rational narratives are ways of connecting that human experience of sense & memory to our understanding of cats & dogs. How could you metaphorically integrate a dot-product/prediction perspective into someone’s understanding of a dog or a cat?

Another question (& the focus of my own research as an anthropologist in this space) is how do I integrate my experiential perspective into the dot-product/prediction perspective? How can I express my own feelings, memories, & experiences about dogs in a compatible form (e.g., as a dataset)?

Hi, glad you liked my comment! Reading your reply I’m a bit confused by your way of using metaphors.

Let me clarify this point. As far as I know, a metaphor is a figure of speech that works at the level of thought, since it is used as a “meaning carrier”, namely abstracting away from cognitive aspects. You, instead, describe metaphors as acting at a lower level too: cognitive stimuli. Seems cool, but I have never read anything about it. Is there any study you can point me to?

About your last question: well, you’d need a kind of magic language (more or less formal, I have no idea!!) in order to express and write down your feelings, memories, and experiences. It is a tricky question, btw. It has no easy answers. Moreover, it seems to me that it is outside the scope of this forum.

A first step would be for deep neural nets to understand concepts. In lesson 4, embeddings are introduced. They are a way to express abstract categories (like days of the week, colors, …) as vectors (something that a neural net can work with). This is absolutely fascinating; I’m sure you’ll like this lesson very much.
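To make the idea concrete, here is a small sketch (not the lesson’s code) of an embedding as a lookup table, using NumPy. The embedding size and the random values are assumptions for illustration only; in a real model the rows would be learned during training.

```python
import numpy as np

days = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]
day_to_index = {day: i for i, day in enumerate(days)}

embedding_dim = 3  # hypothetical size, chosen only for illustration
rng = np.random.default_rng(42)
# One row per category; random here, learned by the network in practice.
embedding_matrix = rng.standard_normal((len(days), embedding_dim))

def embed(day: str) -> np.ndarray:
    """Look up the vector for a category (a row of the embedding matrix)."""
    return embedding_matrix[day_to_index[day]]

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Compare two embedding vectors by the angle between them."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# The lookup is equivalent to multiplying a one-hot vector by the matrix.
one_hot_sat = np.zeros(len(days))
one_hot_sat[day_to_index["Sat"]] = 1.0
same_vector = np.allclose(one_hot_sat @ embedding_matrix, embed("Sat"))

similarity = cosine_similarity(embed("Sat"), embed("Sun"))
```

After training, you would hope that related categories (say, the two weekend days) end up with similar vectors; with the random values above, the similarity is of course meaningless.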

The last question is an open-ended research question

Just an example of language used to transcribe conversations in social science:

PETRI

Claus

Leonore

PETRI DIETMAR

Minna

DIETMAR DIETMAR

(1.1)

u:h (.) <yes but> yeah (.) if you think about (the character)

[( )

[((flash from wide screen, everyone but Bert turn gaze to screen))

[((Bruno and Minna frown, shake heads; Bruno and Leonore turn heads to left; Bruno whispers to Hannu))

((Hannu leans forward, gaze directed at laptop screen)) H---->*

((Leonore and Claus giggle quietly, Herman sneers))

((Bruno whispers to Marja, leans back, smiles at people sitting opposite)) ((Minna leans forward, Hannu straightens posture)) H---->*

((Samantha raises hand on pursed lips))

[no- now it’s clear

[((Claus turns gaze to Leonore, raises right hand holding up index finger, smiles)) ((Minna, Samantha, Leonore, Sarah and Herman turn gaze to Claus))

£↑a(h)h£ ((Leonore raises left hand holding up index finger))

((laughter among local participants))

()

thank you very much I can (.) fully agree on that one that sounds like a prominent thing I totally get your point (0.2) fully agreed

°I don’t understand°

((Minna turns gaze to Leonore, leans back))

[((Leonore shakes head, Hannu opens right palm))

[uhm (.) Ricardo

((Hannu leans back))

any chip from you

((Hannu, Minna and Claus turn gazes to screen one after the other; Bruno and Marja gaze to each other, smile))

Hi, I don’t think it is right to call it a language: the conversation has been transcribed following some annotation protocol, that’s all. I have been working on and researching natural language and knowledge representation for almost 10 years now, and I am quite confident that annotations of any kind are not enough on their own to convey our mental states. That is why I said “magic language” in my previous reply. You, working at IBM, are supposed to have access to limitless hardware resources, so you can easily try different paths. Just use your imagination.