I have been digging into the fastai library to find where the loss function is defined for the learner in Lesson 1. The optimizer defaults to Adam and is defined in the Learner class, which ConvLearner inherits from. But I cannot find where the loss function (which happens to be F.cross_entropy) is defined. Any help is appreciated.
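Once you have the loss function object, Python's standard inspect module can tell you which module and source file define it. In fastai v1 the learner should expose it as learn.loss_func, as far as I know (check your version). Below is a minimal sketch that uses json.dumps as a stand-in so it runs without fastai or PyTorch installed. where_defined is a hypothetical helper name.

```python
import inspect
import json


def where_defined(obj):
    """Return (module name, source file) for a callable.

    Functions implemented in C have no Python source file, so the path
    is None for them.
    """
    module = inspect.getmodule(obj)
    module_name = module.__name__ if module is not None else None
    try:
        path = inspect.getsourcefile(obj)
    except TypeError:
        # Raised for built-ins such as len, which have no Python source.
        path = None
    return module_name, path


# json.dumps stands in for a loss function such as F.cross_entropy.
print(where_defined(json.dumps))
print(where_defined(len))  # ('builtins', None)
```

With fastai installed, where_defined(learn.loss_func) should point into torch/nn/functional.py if the loss is F.cross_entropy. If the loss is wrapped in functools.partial, pass its .func attribute instead, because the partial object itself reports functools as its module.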

Thanks. Maybe there is nothing better to do than what you and Michael suggested; I was just hoping there was a way to reduce the human effort. I guess that, in addition to checking the validation set, one could train and validate on different subsets and gradually clean up the mislabeled data across the whole dataset. I'd love it if there were any additional automation to reduce the effort in such tasks.
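The "train and validate on different subsets" idea can be sketched as a k-fold scheme. Every item gets scored by a model that never trained on it, and then items are reviewed in order of that held-out loss. This is plain Python under my own assumptions, not something fastai provides. train_and_score is a hypothetical callback you would implement with your own training code.

```python
import random


def kfold_splits(n_items, k=5, seed=0):
    """Yield (train_idx, valid_idx) pairs so every item is validated exactly once."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, valid_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, valid_idx


def rank_by_held_out_loss(n_items, train_and_score, k=5, seed=0):
    """Return (item_index, loss) pairs, highest held-out loss first.

    train_and_score(train_idx, valid_idx) is a hypothetical callback: it should
    train a fresh model on train_idx and return {item_index: loss} for valid_idx.
    """
    losses = {}
    for train_idx, valid_idx in kfold_splits(n_items, k, seed):
        losses.update(train_and_score(train_idx, valid_idx))
    # Items the held-out model finds hardest are the first candidates to re-check.
    return sorted(losses.items(), key=lambda pair: pair[1], reverse=True)


# Toy usage: a fake scorer that pretends items 3 and 7 look mislabeled.
def fake_scorer(train_idx, valid_idx):
    return {i: (5.0 if i in (3, 7) else 0.1) for i in valid_idx}


ranking = rank_by_held_out_loss(10, fake_scorer, k=5)
print(ranking[:2])
```

After relabeling or removing the top candidates, you can rerun the ranking on the cleaned data. This gives the gradual clean-up described above, at the cost of training k models per round.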

Hi,

I got this resolved. The issue was caused by the .tar.gz extension: the untar_data function was not treating .tar.gz as a gzip file, even though .tar.gz means the same as .tgz. I managed to find the dataset URL with the .tgz extension, and using that URL without the extension solved my issue.
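.tar.gz and .tgz are two names for the same format: a tar archive compressed with gzip. A tool that is told the format explicitly handles both the same way. The stdlib sketch below is not fastai's untar_data. It writes the same archive under both names and opens each one with tarfile in explicit 'r:gz' mode, and both extract identically. make_demo_archive and extract_gzip_tar are hypothetical helper names.

```python
import io
import os
import tarfile
import tempfile


def make_demo_archive(path):
    """Write a tiny gzip-compressed tar archive containing one text file."""
    payload = b"hello"
    with tarfile.open(path, mode="w:gz") as tar:
        info = tarfile.TarInfo(name="demo/hello.txt")
        info.size = len(payload)
        tar.addfile(info, io.BytesIO(payload))


def extract_gzip_tar(path, dest):
    """Open as a gzip-compressed tar ('r:gz'); the file extension is never consulted."""
    with tarfile.open(path, mode="r:gz") as tar:
        if hasattr(tarfile, "data_filter"):
            # Python versions with extraction filters: use the safe 'data' filter.
            tar.extractall(dest, filter="data")
        else:
            tar.extractall(dest)
        return tar.getnames()


results = {}
with tempfile.TemporaryDirectory() as tmp:
    for name in ("data.tar.gz", "data.tgz"):
        archive = os.path.join(tmp, name)
        make_demo_archive(archive)
        out_dir = os.path.join(tmp, name + "_out")
        members = extract_gzip_tar(archive, out_dir)
        extracted = os.path.isfile(os.path.join(out_dir, "demo", "hello.txt"))
        results[name] = (members, extracted)

print(results)
```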

@rameshsingh - Thank you so much

Hi all,

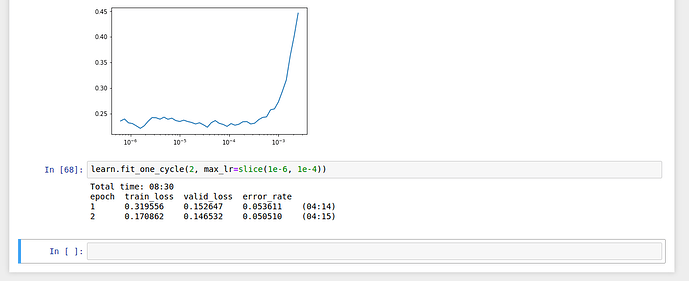

Over the weekend, I tried training a model on a different dataset, and I'm here to share my results.

I used the CIFAR-10 dataset to train my model.

The CIFAR-10 dataset consists of 60,000 images in 10 classes, with 6,000 images per class.

A 2010 research paper (Convolutional Deep Belief Networks on CIFAR-10) reported an error rate of 21.1% on CIFAR-10.

Using the fastai library as taught in Lesson 1, I achieved an error rate of 5% on CIFAR-10.
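For reference, error rate is the fraction of predictions that do not match the true label, which is 1 minus accuracy. As far as I know, fastai's error_rate metric is defined this way. Here is a minimal plain-Python sketch using made-up labels.

```python
def error_rate(predictions, targets):
    """Fraction of positions where the predicted class differs from the target."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("need two non-empty sequences of equal length")
    wrong = sum(p != t for p, t in zip(predictions, targets))
    return wrong / len(targets)


# Made-up class ids for 8 images: two predictions are wrong.
preds = [0, 1, 2, 3, 4, 5, 6, 7]
truth = [0, 1, 2, 3, 4, 5, 9, 8]
print(error_rate(preds, truth))  # 0.25
```

CIFAR-10's standard test split has 10,000 images. On that split, 21.1% and 5% would correspond to roughly 2,110 and 500 misclassified images. The comparison only holds if the 5% was measured on that same test split.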

Happy to have tried this!

@marcmuc Nice! You tagged the wrong guy, though. It's @raghavab1992.

Also, I still prefer something like the code below because it's simpler, but is there any disadvantage to using it (speed, etc.)?

```python
from collections import Counter

num_train = len(data.train_ds)

# Collect the label of every training item, then count each class.
trainClasses = []
for i in range(num_train):
    trainClasses.append(data.train_ds.ds[i][1])

Counter(trainClasses)
```

@jeremy Sorry to bother you, but I don't know how to get email notifications, and I suspect the same thing is happening to others without them knowing. I have it set to Watching in several places but still don't get email notifications. Am I missing something obvious? Thanks.

Sorry, I don't get it. Can you explain a bit more?

It would certainly be useful to have some automation, but it would still need human intervention: maybe iterate through the highest-loss images with a Skip, Delete, or Move option for each. That beats scrolling through the images, but I haven't had time to write it.
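A review loop like this can be sketched with the standard library alone. It assumes you already have (file_path, loss) pairs computed elsewhere, for example from your model's per-image losses. review_top_losses, ask_user, and the "to_relabel" folder name are hypothetical, and this is not a fastai feature.

```python
import os
import shutil
import tempfile


def review_top_losses(items, ask, move_dir, n=20):
    """Step through the n highest-loss files and skip, delete or move each one.

    items: iterable of (file_path, loss) pairs, computed elsewhere.
    ask: callable(file_path, loss) returning 's' (skip), 'd' (delete) or 'm' (move).
    move_dir: folder that receives files marked 'm'.
    Returns a dict mapping each action to the paths it was applied to.
    """
    os.makedirs(move_dir, exist_ok=True)
    done = {"s": [], "d": [], "m": []}
    worst_first = sorted(items, key=lambda pair: pair[1], reverse=True)[:n]
    for path, loss in worst_first:
        choice = ask(path, loss)
        if choice == "d":
            os.remove(path)
        elif choice == "m":
            shutil.move(path, os.path.join(move_dir, os.path.basename(path)))
        else:
            choice = "s"  # anything unrecognised is treated as skip
        done[choice].append(path)
    return done


def ask_user(path, loss):
    """Interactive prompt for use in a notebook or terminal."""
    return input(f"{path} (loss {loss:.3f}) [s]kip/[d]elete/[m]ove? ").strip().lower()


# Non-interactive demo: three empty files and scripted answers instead of input().
with tempfile.TemporaryDirectory() as tmp:
    losses = {"a.jpg": 0.5, "b.jpg": 2.0, "c.jpg": 1.0}
    pairs = []
    for name, loss in losses.items():
        path = os.path.join(tmp, name)
        open(path, "w").close()
        pairs.append((path, loss))
    answers = {"a.jpg": "s", "b.jpg": "d", "c.jpg": "m"}
    done = review_top_losses(pairs, lambda path, loss: answers[os.path.basename(path)],
                             move_dir=os.path.join(tmp, "to_relabel"))
    summary = {action: [os.path.basename(p) for p in paths] for action, paths in done.items()}
    remaining = sorted(os.listdir(tmp))
    moved = sorted(os.listdir(os.path.join(tmp, "to_relabel")))

print(summary)    # {'s': ['a.jpg'], 'd': ['b.jpg'], 'm': ['c.jpg']}
print(remaining)  # ['a.jpg', 'to_relabel']
```

For real use, call review_top_losses(pairs, ask_user, "to_relabel"). Moving files instead of deleting them keeps the decision reversible.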

Thanks for pointing that out; I corrected the tag in my post.

Well, there is nothing wrong with your code, but the disadvantage is speed. You may not notice it much on small datasets, but with something like millions of examples it can mean the difference between waiting a few seconds and waiting a few minutes. The reason is that pandas uses optimized C code and vectorized implementations under the hood, whereas your version runs a for loop in Python, which is much slower. Also, if you want to use a loop for some reason, you can avoid building the full list. If you only need the number of distinct classes, add the labels to a Python set instead of a list and take len() of it. Note that a set does not count occurrences, so it cannot replace Counter for per-class counts. If you need per-class counts, use a dict with key=class and value=count, incremented inside the loop.
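The loop-based alternatives can be sketched in plain Python with made-up labels. In the original code the labels would come from data.train_ds.ds[i][1]. With pandas, the vectorized one-liner would be pd.Series(labels).value_counts().

```python
from collections import Counter


def count_with_dict(labels):
    """Loop version: one dict entry per class, incremented in place (no big list)."""
    counts = {}
    for label in labels:
        counts[label] = counts.get(label, 0) + 1
    return counts


def count_with_counter(labels):
    """Counter consumes any iterable directly; in CPython its counting loop runs in C."""
    return Counter(labels)


labels = ["cat", "dog", "cat", "bird", "cat", "dog"]
print(count_with_dict(labels))     # {'cat': 3, 'dog': 2, 'bird': 1}
print(count_with_counter(labels))  # Counter({'cat': 3, 'dog': 2, 'bird': 1})
print(len(set(labels)))            # 3 distinct classes, but no per-class counts
```

Passing a generator straight to Counter, for example Counter(data.train_ds.ds[i][1] for i in range(num_train)), also avoids the intermediate list.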

I didn't get that error, so I'm not sure about the context. Someone might be able to help if you post the full error along with your code (e.g. a screenshot).

I reduced the image size to 224 and the batch size (bs) to 20 to run resnet50, and it runs on a GCP P100.

What is the purpose of data.normalize(imagenet_stats)?

I could not find where imagenet_stats is defined.
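data.normalize(imagenet_stats) rescales each colour channel as (x - mean) / std, using the per-channel mean and standard deviation of ImageNet. This gives inputs to a pretrained model the same statistics as the images it was trained on. In fastai v1, imagenet_stats should be a (mean, std) pair holding the standard ImageNet values below. I believe it lives in fastai/vision/data.py, but verify that in your install. Below is a plain-Python sketch of the per-pixel arithmetic. The helper names are hypothetical.

```python
# Standard ImageNet per-channel (R, G, B) statistics for pixel values in [0, 1].
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb, mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Apply (x - mean) / std to each channel of one RGB pixel."""
    return tuple((x - m) / s for x, m, s in zip(rgb, mean, std))


def denormalize_pixel(z, mean=IMAGENET_MEAN, std=IMAGENET_STD):
    """Undo normalize_pixel, e.g. before displaying an image."""
    return tuple(v * s + m for v, m, s in zip(z, mean, std))


print(normalize_pixel(IMAGENET_MEAN))    # (0.0, 0.0, 0.0): the average pixel maps to zero
print(normalize_pixel((1.0, 1.0, 1.0)))  # white maps to roughly 2.2 to 2.6 per channel
```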

In the Python debugger (pdb), you can use r to continue until the current method or function returns.

Alternatively, put a breakpoint in the file you need, then press c to continue until the breakpoint is reached.
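The same single-letter commands work at the (Pdb) prompt, including ipdb in Jupyter. Below is a sketch of a short session. To keep it runnable without a keyboard, the commands are fed to pdb.Pdb from a string, whereas normally you would type them at the prompt. inner and outer are hypothetical example functions.

```python
import io
import pdb


def inner(x):
    y = x * 2
    return y + 1


def outer(x):
    return inner(x) + 10


# The commands you would type at the (Pdb) prompt:
#   b inner  set a breakpoint at the first line of inner()
#   c        continue until a breakpoint is hit
#   r        continue until the current function (inner) is about to return
#   c        continue to the end
commands = io.StringIO("b inner\nc\nr\nc\n")
transcript = io.StringIO()
debugger = pdb.Pdb(stdin=commands, stdout=transcript, nosigint=True, readrc=False)
result = debugger.runcall(outer, 5)  # the prompt appears as soon as outer() is entered

print(result)  # 21
print(transcript.getvalue())
```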

Can anyone paste the link to the Lesson 1 class discussion chat?

Hi,

I'm also having the same issue: padding_mode needs to be 'zeros' or 'border', but got reflection.

Please suggest how to fix this issue.

I am running the code on Windows 10 with:

- torchvision-nightly-0.2.1
- torchvision 0.2.1
- torch 0.4.1
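The versions listed include torch 0.4.1. My understanding, which is worth checking against the PyTorch release notes, is that 'reflection' padding for torch.nn.functional.grid_sample only arrived in PyTorch 1.0. fastai v1 was built against 1.0, so an older torch would explain this message. If so, upgrading torch (and torchvision) to a 1.0 build should help. Below is a small stdlib sketch for checking a version string. version_tuple and at_least are hypothetical helper names, and in practice you would pass torch.__version__.

```python
import re


def version_tuple(version):
    """Turn '0.4.1' or '1.0.0.dev20181002' into (0, 4, 1) or (1, 0, 0)."""
    parts = []
    for piece in version.split(".")[:3]:
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def at_least(version, minimum=(1, 0)):
    """True if the version's leading numbers are >= minimum."""
    return version_tuple(version) >= minimum


# With PyTorch installed you would call: at_least(torch.__version__)
print(at_least("0.4.1"))              # False
print(at_least("1.0.0.dev20181002"))  # True
```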

Thanks,

Ritika

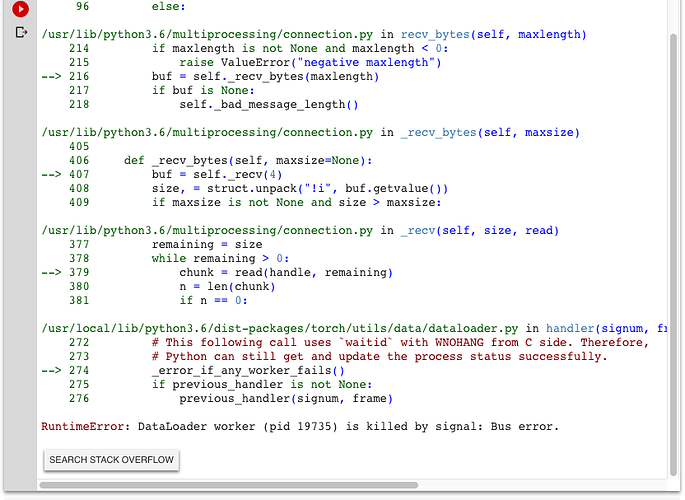

Hello,

I keep getting this error when I run learn.fit_one_cycle(4).

I’m not sure if it has to do with the images I’m using or if it could be something else I’m not aware of. Any help is appreciated.

Thanks