Thanks a lot @hiromi too. And they keep getting better with every course.

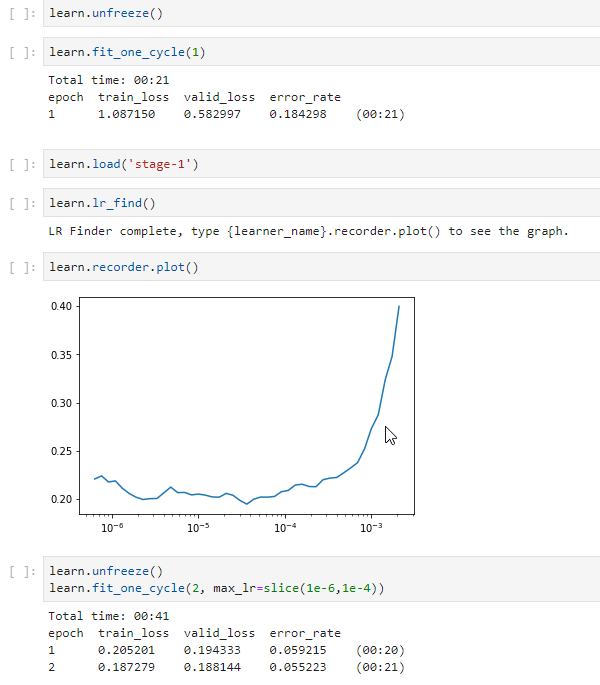

@kofi yes, we need to run learn.unfreeze() twice, with lr_find() in between the two unfreeze() calls. The first unfreeze tells us how helpful the ResNet weights are for classifying this dataset. lr_find then helps us find the best learning rate, which we use when we unfreeze the model again. I hope this helps :) (Sorry for the late reply.)

Hey, never mind. I saw that ImageDataBunch does a random split into training and validation sets. That makes sense now.
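A random hold-out split like this can be sketched in a few lines of plain Python. The `random_split` helper in the sketch that follows is hypothetical and only illustrates the idea; it is not fastai's implementation. Passing a fixed seed makes the split reproducible, so the validation set stays the same between runs.

```python
import random


def random_split(items, valid_pct=0.2, seed=None):
    """Shuffle a copy of `items` and hold out `valid_pct` of them for validation.

    Hypothetical helper for illustration; not fastai's own code.
    """
    rng = random.Random(seed)   # private RNG, so the global random state is untouched
    shuffled = list(items)      # copy, so the caller's sequence is not reordered
    rng.shuffle(shuffled)
    n_valid = int(len(shuffled) * valid_pct)
    train, valid = shuffled[n_valid:], shuffled[:n_valid]
    return train, valid


if __name__ == "__main__":
    train, valid = random_split(range(10), valid_pct=0.2, seed=42)
    print(len(train), len(valid))  # 8 2
```

With the same seed, calling `random_split` again returns exactly the same training and validation lists.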

thanks!

Hey, I’m sorry about that. Could you try with:

```
pip install fastai --upgrade
```

Please let me know if it works.

Thank you @brismith. I ran “conda install nb_conda_kernels” and “conda install ipykernel”, and now I can see all the kernels.

Can anyone please help me enable nbextensions for Jupyter Notebook on my GCP setup? With them, I think creating Gists and HTML pages would be really easy.

Thanks Francisco. I ran that; the first line returned was:

```
Requirement already up-to-date: fastai in /home/ubuntu/anaconda3/lib/python3.6/site-packages (1.0.15)
```

…which tells me it’s still installing into the anaconda3 folder, where the Salamander notebooks don’t seem to look for fastai. And fastai.show_install(0) in the notebook still shows 1.0.11.
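One way to confirm a mismatch like this is to ask the notebook kernel itself which Python interpreter it is running and which fastai version it can see. The sketch that follows uses a hypothetical `env_report` helper built only on the standard library (`importlib.metadata` needs Python 3.8 or newer). If the reported interpreter path is not under /home/ubuntu/anaconda3, then pip upgraded a different environment than the one the notebook uses.

```python
import sys
import importlib.util
from importlib import metadata


def env_report(package="fastai"):
    """Report which interpreter is running and where/which version of `package` it sees.

    Hypothetical helper for debugging environment mix-ups.
    """
    spec = importlib.util.find_spec(package)  # None if the package is not importable here
    try:
        version = metadata.version(package)   # version of the installed distribution
    except metadata.PackageNotFoundError:
        version = None
    return {
        "python": sys.executable,                          # interpreter behind this kernel
        "package_location": spec.origin if spec else None, # file that `import package` would load
        "version": version,
    }


if __name__ == "__main__":
    print(env_report())
```

Running the upgrade with that same interpreter (the path in `"python"`) should target the environment the notebook actually imports from.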

Support for deeper models? Which model (deeper than ResNet50) has been proven to work for large-scale image classification (approx. 35M images)? I was thinking of ResNeXt or Inception but haven’t played around with them much.

@mayanksatnalika while it is true that an unfrozen model has more parameters to train than a frozen one, the number of parameters in the last fully connected layers is very high compared to the early (unfrozen) layers. So once the FC layers are included, the relative difference in trainable parameters might not be that large. This might be one reason why the difference in training time is small.
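To check this argument for a particular architecture, you can count parameters layer by layer: a fully connected layer has in × out weights plus out biases, and a k × k convolution has in × out × k² weights plus out biases. The helpers in this sketch are hypothetical, and the layer sizes to plug in depend on the model you are using.

```python
def linear_params(in_features, out_features, bias=True):
    """Parameters in a fully connected layer: a weight matrix plus an optional bias vector."""
    return in_features * out_features + (out_features if bias else 0)


def conv2d_params(in_channels, out_channels, kernel_size, bias=True):
    """Parameters in a square k x k 2-D convolution (no grouping): weights plus optional bias."""
    weights = in_channels * out_channels * kernel_size * kernel_size
    return weights + (out_channels if bias else 0)


def trainable_fraction(frozen_counts, trainable_counts):
    """Share of all parameters that are actually updated while the body is frozen."""
    frozen, trainable = sum(frozen_counts), sum(trainable_counts)
    return trainable / (frozen + trainable)
```

For instance, `conv2d_params(3, 64, 7, bias=False)` gives 9,408, the size of the first 7 × 7 convolution in torchvision's ResNets. Summing such counts for the frozen body and the trainable head and passing them to `trainable_fraction` shows how much of the network is actually being updated in each phase.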

Hi.

I have NVIDIA driver 398.26 and I’m on Windows.

Running `conda install -c pytorch pytorch-nightly cuda92` throws the following error:

```
PackagesNotFoundError: The following packages are not available from current channels:

  - pytorch-nightly

  - https://conda.anaconda.org/pytorch/win-64
  - https://conda.anaconda.org/pytorch/noarch
  - https://repo.anaconda.com/pkgs/main/win-64
  - https://repo.anaconda.com/pkgs/main/noarch
  - https://repo.anaconda.com/pkgs/free/win-64
  - https://repo.anaconda.com/pkgs/free/noarch
  - https://repo.anaconda.com/pkgs/r/win-64
  - https://repo.anaconda.com/pkgs/r/noarch
  - https://repo.anaconda.com/pkgs/pro/win-64
  - https://repo.anaconda.com/pkgs/pro/noarch
  - https://repo.anaconda.com/pkgs/msys2/win-64
  - https://repo.anaconda.com/pkgs/msys2/noarch
```

Also, running `pip install torch_nightly -f https://download.pytorch.org/whl/nightly/cu92/torch_nightly.html` throws this error:

```
Collecting torch_nightly
Could not find a version that satisfies the requirement torch_nightly (from versions: )
No matching distribution found for torch_nightly
```

By pure intuition, I can see why it is easy to tell a grizzly and a polar bear apart: one is brown and the other is white. Another possible reason is that ImageNet includes animal categories, even bear categories, so a ResNet pretrained on it is already good at these categories.

Here is a very rough explanation. When you are learning a move in a sports lesson, you don’t simply watch the coach do the move one time and practice on your own forever. Almost always, it is instructive to repeatedly watch the move. What’s more, when you get more familiar with the move yourself, you will learn more from watching the coach.

Thanks for the explanation. I never knew such a mapping existed. Really handy tool.


@skottapa If you check the notebooks here

you will see that the learn model was unfrozen and then fit for one cycle. This was just to check whether the model we used (resnet34) has a significant impact on our dataset. Then we load the saved frozen model and use lr_find to find the learning rate at which the loss minimum can be reached as early as possible. Finally, we unfreeze the model and train again with this new learning rate, so that we end up at, or close to, the actual loss minimum. I hope this helps. Please comment if there is anything wrong with my understanding.

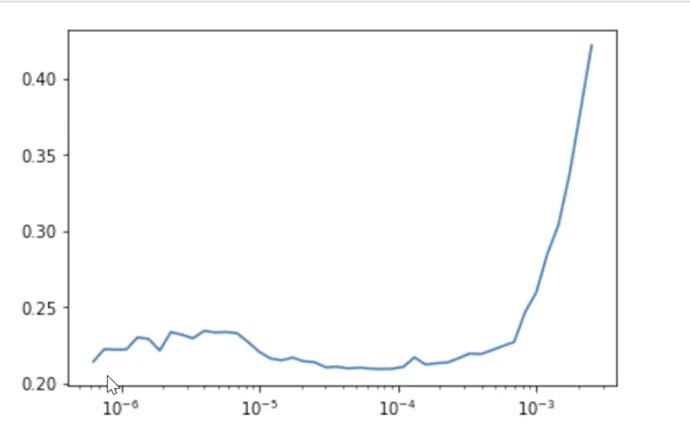

In the lecture, Jeremy picked 1e-6 to 1e-4 because the loss remained similarly low for learning rates in this range. Here is the image as a reminder.

In your case, however, the loss is clearly much lower between 1e-5 and 1e-3, and even lower between 1e-4 and 1e-3. Maybe it would help to use one of these two ranges instead? Just my guess.
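For reference, as far as I understand fastai v1, passing `max_lr=slice(start, stop)` to `fit_one_cycle` gives the earliest layer group the `start` rate, the last group the `stop` rate, and spaces the groups in between evenly on a log scale. The hypothetical `spread_lrs` helper in this sketch mimics that idea so you can see which rate each group would get for a candidate range; it is not fastai's code.

```python
def spread_lrs(start, stop, n_groups):
    """Learning rates spaced evenly on a log scale, from `start` (earliest layers) to `stop` (head).

    Hypothetical helper that mimics the idea behind max_lr=slice(start, stop).
    """
    if n_groups < 2:
        raise ValueError("need at least two layer groups to spread learning rates")
    ratio = (stop / start) ** (1 / (n_groups - 1))  # constant multiplier between neighbouring groups
    return [start * ratio ** i for i in range(n_groups)]


if __name__ == "__main__":
    print(spread_lrs(1e-6, 1e-4, 3))  # approximately [1e-06, 1e-05, 1e-04]
    print(spread_lrs(1e-4, 1e-3, 3))  # approximately [1e-04, 3.16e-04, 1e-03]
```

Assuming three layer groups, the lecture's range would train the earliest layers at about 1e-6 and the head at 1e-4, while the 1e-4 to 1e-3 range would shift every group up by roughly an order of magnitude.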

Check the information printed in the terminal after you start the Jupyter Notebook/Lab server. Make sure that the environment used to start Jupyter is the one where the fastai library is installed.

What could have happened is that Jupyter was started from another environment, which of course does not contain the fastai library.

Well, looking at the output of most_confused and checking those images by hand sounds quite systematic to me.
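Conceptually, most_confused counts the (actual, predicted) pairs where the two labels disagree and sorts them by frequency; as far as I know, fastai's version reads these counts off the confusion matrix. A plain-Python sketch of the same idea, using a hypothetical `most_confused_pairs` helper, makes it clear which image groups are worth checking by hand first.

```python
from collections import Counter


def most_confused_pairs(actual, predicted, min_val=1):
    """Return (actual, predicted, count) for misclassified pairs, most frequent first.

    Hypothetical helper illustrating the idea behind most_confused.
    """
    # Count only the off-diagonal cases, i.e. where the prediction is wrong.
    counts = Counter((a, p) for a, p in zip(actual, predicted) if a != p)
    pairs = [(a, p, n) for (a, p), n in counts.items() if n >= min_val]
    return sorted(pairs, key=lambda t: t[2], reverse=True)


if __name__ == "__main__":
    actual = ["grizzly", "grizzly", "black", "teddy", "black"]
    predicted = ["black", "grizzly", "grizzly", "teddy", "grizzly"]
    print(most_confused_pairs(actual, predicted))
    # [('black', 'grizzly', 2), ('grizzly', 'black', 1)]
```

Raising `min_val` hides rare mix-ups, so only the systematic confusions remain to inspect.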

Got that working, thanks. The reinstall worked.

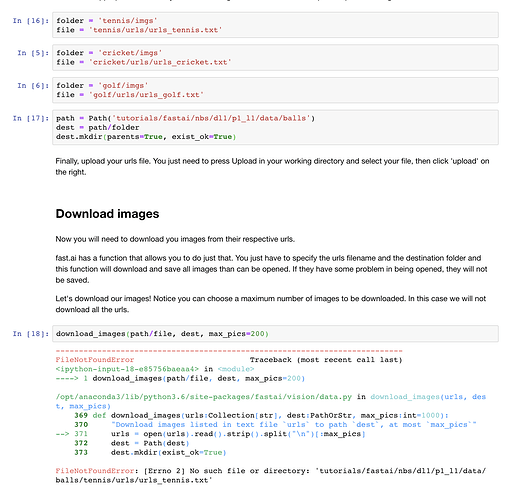

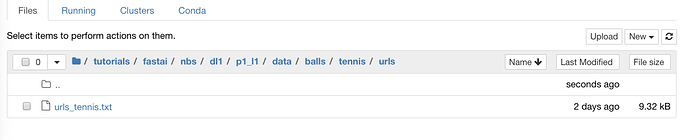

I’m now getting a strange error about not being able to find a file/directory that I’m certain exists, as shown in the screenshots. Am I missing something patently obvious?

I’ve executed the folder/file cell for tennis and also the path and dest cells without error, but then download_images fails.

This is the screen grab of my file structure:

What is the glaring error I’m not seeing?
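One quick check is to ask Python, from inside the notebook, what it actually sees at the exact path you pass to download_images. A relative path is resolved against the notebook's current working directory, which may not be the folder you expect. The `describe_path` helper in this sketch is hypothetical and only uses `pathlib` from the standard library.

```python
from pathlib import Path


def describe_path(p):
    """Report what Python sees at `p`, resolved against the current working directory.

    Hypothetical debugging helper.
    """
    p = Path(p)
    return {
        "given": str(p),
        "resolved": str(p.resolve()),  # absolute path Python will actually use
        "exists": p.exists(),
        "is_dir": p.is_dir(),
        "is_file": p.is_file(),
        "cwd": str(Path.cwd()),        # where relative paths are resolved from
    }


if __name__ == "__main__":
    print(describe_path("."))
```

If `"exists"` is False while the file browser shows the file, comparing `"resolved"` and `"cwd"` with the folder in the screenshot usually reveals a typo, a missing subfolder, or a different working directory.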