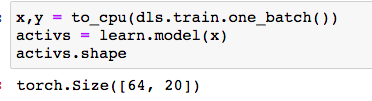

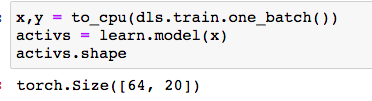

In Lesson 06_multicat, at the start of developing the Learner code, a to_cpu function was used:

May I ask for the rationale for this please? I’m a DL beginner, coming from a non-CS domain.

Thank you!

Maria

Hi @yrodriguezmd,

The to_cpu function just moves the x and y tensors to live on the CPU. PyTorch tensors can live on the CPU or the GPU. PyTorch models can also be either on the CPU or GPU. Both the model and the tensors you’re calling it on have to be on the same device for it to work.
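To make the "same device" rule concrete, here is a minimal PyTorch sketch. The batch_to_cpu helper and the tiny nn.Linear model are hypothetical stand-ins (not fastai's to_cpu and not the book's CNN), and the fake batch plays the role of dls.train.one_batch().

```python
import torch
import torch.nn as nn

# Use the GPU if PyTorch can see one, otherwise stay on the CPU
device = "cuda" if torch.cuda.is_available() else "cpu"

def batch_to_cpu(batch):
    """Hypothetical helper: move each tensor in an (x, y) batch to the CPU.
    It mimics the effect of to_cpu on a batch; it is not fastai's code."""
    return tuple(t.cpu() for t in batch)

# A fake batch created on `device`, standing in for dls.train.one_batch()
batch = (torch.randn(8, 4, device=device),
         torch.randint(0, 2, (8,), device=device))

x, y = batch_to_cpu(batch)   # both tensors now live on the CPU
model = nn.Linear(4, 2)      # a newly created nn.Module has its parameters on the CPU
activs = model(x)            # OK: the model and x are on the same device
print(x.device, y.device, activs.shape)
```

On a GPU runtime the fake batch starts out on cuda; moving it to the CPU is what lets the CPU-side model accept it.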

We can check whether a tensor is on the CPU or the GPU by looking at its device attribute: a device of cpu means it's on the CPU, and cuda means it's on the GPU.
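A quick way to see this for yourself in plain PyTorch (a small sketch; the GPU part only runs if PyTorch actually sees a GPU):

```python
import torch

t = torch.zeros(3)
print(t.device)        # → cpu
print(t.device.type)   # → cpu
print(t.is_cuda)       # → False

if torch.cuda.is_available():
    t_gpu = t.cuda()               # same effect as t.to("cuda")
    print(t_gpu.device)            # typically cuda:0 on a single-GPU machine
    print(t_gpu.cpu().device)      # .cpu() brings it back: cpu
```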

Here are some screenshots breaking down the book example and explaining things more:

Brandon

Dear Brandon,

Thank you for the explanation, I have a better idea now on device implications!

Follow-up questions:

I do not see any other code that pertains to the location of the model. Does the model always default to the CPU?

How is this cpu vs. cuda location affected if I change my Colab runtime type from CPU to GPU (i.e., set the hardware accelerator to GPU)?

Thank you!

Maria

Hi Maria,

I’m not too sure about these ones haha.

.cuda() would return an error or just not work. I'm not sure. I encourage you to try these things out and see what happens. That's what I did to solidify my knowledge of the .cpu() and .cuda() methods when answering your earlier question.
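One way to run that experiment safely is to wrap the .cuda() call so a CPU-only runtime reports what happened instead of crashing. This is a sketch; try_cuda is a hypothetical helper, and catching AssertionError/RuntimeError is an assumption, since the exact exception type and message depend on how PyTorch was built and whether a GPU driver is present.

```python
import torch

def try_cuda(t):
    """Hypothetical helper: try to move a tensor to the GPU.
    Assumption: a CPU-only setup raises AssertionError (PyTorch built without CUDA)
    or RuntimeError (CUDA build but no usable GPU/driver)."""
    try:
        return t.cuda(), "moved to GPU"
    except (AssertionError, RuntimeError) as err:
        return t, f"stayed on CPU: {err}"

t = torch.ones(2)
t2, msg = try_cuda(t)
print(torch.cuda.is_available(), t2.device, msg)
```

Running this once on a CPU runtime and once on a GPU runtime in Colab should show the difference directly.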

Brandon

Thank you!

Found the answer to follow-up question 1:

Lesson 13 (Convolutions) says that fastai puts data on the GPU by default when using the DataBlock API.
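Roughly what "puts data on the GPU by default" means can be sketched in plain PyTorch. DeviceLoader is a hypothetical wrapper made up for illustration, not fastai's implementation; it assumes "default" means "use CUDA whenever torch.cuda.is_available() is True", which is the case after switching a Colab runtime to GPU. If batches land on the GPU while a model is still on the CPU, you get the device mismatch described above, which may be one reason for the to_cpu call.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

class DeviceLoader:
    """Hypothetical wrapper (not fastai's code): moves every (x, y) batch to a device,
    defaulting to the GPU when PyTorch can see one."""
    def __init__(self, dl, device=None):
        self.dl = dl
        self.device = device or ("cuda" if torch.cuda.is_available() else "cpu")

    def __iter__(self):
        for xb, yb in self.dl:
            yield xb.to(self.device), yb.to(self.device)

    def one_batch(self):
        return next(iter(self))

# 20 fake samples with 4 features each and binary labels
ds = TensorDataset(torch.randn(20, 4), torch.randint(0, 2, (20,)))
dls = DeviceLoader(DataLoader(ds, batch_size=8))
xb, yb = dls.one_batch()
print(dls.device, xb.device)   # cuda-based on a GPU runtime, cpu otherwise
```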