Basic attempts with Italian in two weeks time here!

Hello folks does anyone have any advice on where I can host a pre-trained language model for the community without it costing me a bunch of money? I was thinking of hosting it on Google Cloud Bucket but it charges me for every download. Does anyone have any advice?

I’m happy to host any language models people create (with credit, of course).

Jeremy setup a new website for NLP (http://nlp.fast.ai/). There is a place holder for Model Zoo. Keep working on it.

I’m going to give Danish (https://da.wikipedia.org) and Norwegian (https://no.wikipedia.org) a shot

I tried to run @binga data preparation for Telugu, but I’d like to know the exact format used for wikipedia text. The wikipedia data is not on github, and it seems the IMDB’s data format was used, 'cause the code is similar to the one used in the notebook for lesson 10.

Thanks in advance

I’m trying out the LM on BPE (from sentencepiece) input with 30k subword units, and I’m getting ridiculously low perplexity (3, but still not finished training) and high accuracy (81% so far).

My guess is that the LM has an easy time on sentencepiece input, because a lot of the time the “tokens” are just a single character, so maybe the LSTM is just learning to remember the word vocabulary rather than any meaningful semantics.

So my question: anyone else running experiments using sentencepiece input? What kinds of perplexities and accuracies are you seeing? And any results on downstream classification tasks already? I would be very interested in seeing a comparison between sentencepiece-tokenized and word-tokenized input for classification.

Hi @monilouise,

Thank you for going through my work.

The objective of the language model in Telugu was to take the wikipedia articles in Telugu language and build a LM on top of it.

The prepare_data.sh file pretty much contains all that you need.

- It obtains the all the telugu wikipedia articles of the telugu language from wikimedia dumps (where all the language wiki dumps are hosted). These are html files

- The WikiExtractor.py parses the html files and converts them into json articles.

- The notebook in the same repo reads the json files, extracts the body of the wikipedia article, converts all the data into one single dataframe and from there you should be good to go.

Jeremy prefers to convert all the source datasets into a common format so that the downstream code doesn’t have to be changed and it fits the fastai framework - way of doing this. We prefer the same approach and hence you see the IMDB way of doing things. The data preparation section in my notebook essentially does that.

I hope this helps. Feel free to ask any questions.

Also, thanks for pointing this out. I fixed a bug in my code while writing this answer.

Thank you very much, @binga!

I saw that there are multiple kinds of Wikipedia dumps (at least for Portuguese), and I suspect these different formats lead to different results when we run the notebook, despite using WikiExtractor.

I’ll give German a shot over the next couple of weeks. I was thinking about using Wikipedia or maybe Projekt Gutenberg-DE. I’ll start with a literature review since there have to be language models and classifiers of all sorts already. Hopefully the state of the art can be improved upon

Hi Kristian

Great! I was surprised that noone was looking at de when I look yesterday. I’ll keep you updated, but I’ll also be very interested to hear what you are doing.

Best regards

Thomas

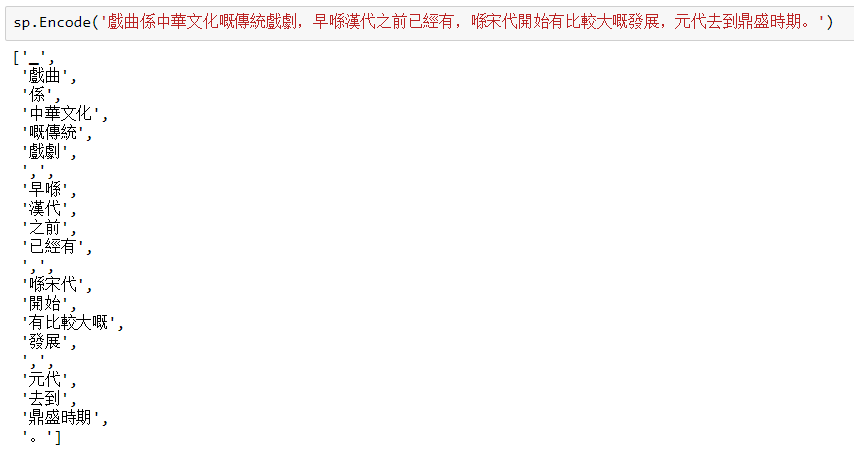

I found similar problem. Due to limited documentation from SentencePiece (SP) official website, here are the observations from my experiment (for Chinese Transitional*). It seems that we need to set the “right” vocab_size to get meaningful semantics:

-

When I set

vocab_sizejust right (exact numbers of words plus 4 (automatically added by SP) or rounded up to the nearest thousand, the tokenization is restricted to each word. -

When I set

vocab_sizetoo high, say 103M, I got an error message to adjust to “soft limit”

-

When I used

--hard_vocab_size = False(ie soft limit), SP appliedvocab_size = 8000(ie just right limit) for me. You can usesp.GetPieceSize()to check yourvocab_size. -

Then, I played with different

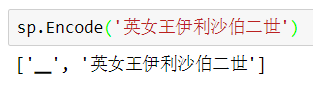

vocab_sizeover and over again and usedsp.Encode()to test a sample sentence. I found 200k tokens (around 27 times ofalphabet size) work well for me. Here is the example:

Now, I need to do the LM with the new set of tokens before answering the rest of your question. I just found more ways which did not work!

Note: *word = character for Chinese Tranditional

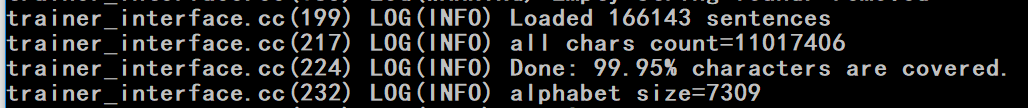

My dataset’s basic information (including alphabet size)

@t-v Perfect, I was looking for your name because I saw it in the wiki but didn’t find it in the thread. I’ll keep you updated. Also interested in hearing what you do

Hi,

Does the 100 milion limit refer to the total number of words in all the documents?

You probably don’t want this to get much higher that 30,000 or you’ll have memory and speed problems when training the LM, FYI.

Thanks for the advice. I am planning to try with high vocab_size to capture more interesting phases. Then, I will increase the minimum frequency for each word when training LM. My concern is:

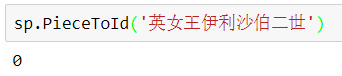

Using the Queen Elizabeth II of United Kingdom (英女王伊利沙伯二世) as an example:

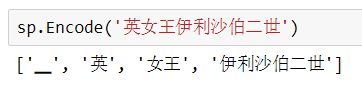

When vocab_size = 30K, sp.PieceId = 0 which means out of range. It was encoded as ‘英’ + ‘女王’ + ‘伊利沙伯二世’.

However, using bigger vocab_size (ie 200k in this case), sp returned it as one token.

I am expecting the same embedding matrix for ‘英女王伊利沙伯二世’ will be updated during forward and backward training. But, I am not sure the embedding for ‘英’ (multiple meanings) -> ‘女王’ (Queen) -> ‘伊利沙伯二世’ (name) vs ‘伊利沙伯二世’ (name) -> ‘女王’ (Queen) -> ‘英’ (as surname here?) will generate the same embedding. Is my concern valid?

Re: the sentencepiece discussion

The official sp repo has two example data files here: botchan.txt and wagahaiwa_nekodearu.txt

In botchan, a “sentence” is just the text line-wrapped at 80 or so characters. There is no significance given to actual semantic sentences, and line breaks occur basically randomly across the text. In wagahaiwa_nekodearu, a “sentence” is more like a paragraph, consisting of multiple semantic sentences.

@Moody, how did you approach sentence tokenization to feed to sentencepiece?

In the SP website, there is a Python wrapper link under the pip install instruction. Then, follow the instructions to tokenise a wiki file (with minimal preprocessing). You can specify the number of output tokens accordingly.

I am using the python wrapper, have downloaded Korean wiki, and have no problems getting SP to run in general (and output “Pieces”).

The problem is it’s kind of unclear what the right “minimal preprocessing” is, to derive sentences.

I can: