I am having the same issue

I finally figured it out. There are two cats vs dogs datasets on Kaggle. Make sure you log in to Kaggle and accept the terms and conditions for the cats vs dogs kernels edition (https://www.kaggle.com/c/dogs-vs-cats-redux-kernels-edition).

However, all this effort is moot because in the Lesson 1 notes (http://wiki.fast.ai/index.php/Lesson_1_Notes), under Necessary Files, Jeremy provides a direct link to the cats vs dogs dataset (http://files.fast.ai/data/dogscats.zip). You should download this zipped file because he’s set up the folder structure to support VGG. That is, he’s separated the cats and dogs into separate folders and created a validation folder as well. You’ll need this folder structure to run VGG.
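If you want to confirm the unzipped data has the layout described above, here is a minimal Python sketch. The folder names (`train`, `valid`, `cats`, `dogs`) and the helper name `missing_dirs` are my assumptions for illustration; check them against what the zip actually contains.

```python
from pathlib import Path


def missing_dirs(root, splits=("train", "valid"), classes=("cats", "dogs")):
    """Return the expected split/class folders that do not exist under root.

    The default split and class names are assumptions, not taken from the zip.
    """
    root = Path(root)
    return [
        str(Path(split) / cls)
        for split in splits
        for cls in classes
        if not (root / split / cls).is_dir()
    ]
```

An empty list from `missing_dirs("dogscats")` would mean every expected class folder is present for both the training and validation splits.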

Thank you!

Hey everyone,

I just took some time out and made another (unofficial) kaggle command line interface called kli.

It was mainly to learn how kaggle-cli worked, but I started using it as my day-to-day kaggle data downloader. I thought I’d share it as I found it quite useful after a while.

To tell you more about it,

At the time I started the project, kaggle-cli didn’t have many of the features that it has today, so kli looks like a stripped down version of kaggle-cli now.

- The README in the Github page is quite comprehensive, and should show you how to use it.

- It is recommended that you install it via pip, within your virtualenv, through

pip install kli

- I’ve not tested its install by cloning the GitHub repo, so please don’t try it.

- It is only tested on python3 so I cannot guarantee its usefulness if you have a python2 setup.

- It does not submit, as I found Jeremy’s method (mentioned in the tutorials) much better.

I’ve been using it for a few weeks now, and ironed out many issues so far.

As it’s quite a small project, I did not write any tests for it (but it does perform some checks for the user at runtime, which makes up for quite a bit of the testing).

If you do find any issues, please let me know, or open a pull request on GitHub.

This seems to be the only thread to talk about this, do let me know if you find another thread that I can move this endorsement to.

Thanks for the tip!

Hey! I’m running a notebook on a fast.ai AWS EC2 image.

When I run this code in a notebook:

!kg download -u <username> -p <password> -c dogs-vs-cats-redux-kernels-edition

I get the following:

downloading https://www.kaggle.com/c/dogs-vs-cats-redux-kernels-edition/download/test.zip

test.zip N/A% | | ETA: --:--:-- 0.0 s/B

Warning: download url for file test.zip resolves to an html document rather than a downloadable file.

Is it possible you have not accepted the competition's rules on the kaggle website?

I have accepted the rules, and sure enough when I click the link the data starts downloading to my local machine.

Any ideas why this is happening?

(I realize that the data is provided by fast.ai, but I would like to have the Kaggle CLI working.)

EDIT: Yeah, like @asthagarg, this was a username issue. I reset my Kaggle account in order to be able to use the Kaggle CLI. However, it turns out that I need to enter in my email as my username in order for Kaggle CLI to work.
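For anyone unsure whether a download actually succeeded, here is a rough Python sketch that checks whether a saved file is a real zip archive or an HTML page (which is what the warning above describes). The helper names `looks_like_html` and `check_download` are hypothetical, and the HTML check is only a heuristic on the first bytes of the file.

```python
import zipfile


def looks_like_html(path, probe_bytes=512):
    """Heuristic: does the file start like an HTML document?"""
    with open(path, "rb") as f:
        head = f.read(probe_bytes).lstrip().lower()
    return head.startswith(b"<!doctype html") or head.startswith(b"<html")


def check_download(path):
    """Classify a downloaded file as 'zip', 'html', or 'unknown'."""
    if zipfile.is_zipfile(path):
        return "zip"
    if looks_like_html(path):
        return "html"
    return "unknown"
```

If `check_download("test.zip")` returns `"html"`, the CLI most likely saved a login or rules page instead of the data, which points back at the credentials or the rules acceptance.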

(deleting my CurlWget comment in case it’s distracting from the main issue)

Hey thomzi, I am running into the same issue as you using kaggle-cli. I accepted the rules of the competition, but bash keeps asking me if I accepted the rules.

downloading https://www.kaggle.com/c/state-farm-distracted-driver-detection/download/sample_submission.csv.zip

sample_submission.csv.zip N/A% | | ETA: --:--:-- 0.0 s/B

Warning: download url for file sample_submission.csv.zip resolves to an html document rather than a downloadable file.

Is it possible you have not accepted the competition’s rules on the kaggle website?

Can you shed some more light or direct me to a website which explains how to use CurlWget to download the data?

Try using the CurlWget Chrome extension…

I couldn’t figure out how to use the CurlWget extension, but finally managed to download the data through kaggle-cli. I found that my username was wrong. I fixed that and was able to use kg download after that.

This happened to me too, after I added an SMS number to be able to download the State Farm dataset. Like with @asthagarg and @thomzi12, using my email instead of my username fixed the issue.

Do we have to use the next edition? i.e. kernel edition? I can’t seem to get this working with the first version

I was getting the same error. Nothing seemed to work, no issue for this on github…

Attempted one last thing - installed directly from github (on version 0.13 now) still didn’t work… deleted configs in .kaggle-cli, ran again… works now.
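If you would rather not delete the config outright, a small Python sketch like the one below moves it aside so kaggle-cli starts from a clean state but you can still restore the old file. The function name `set_aside_config` is hypothetical, and the config path `~/.kaggle-cli/config` is the one reported elsewhere in this thread; I have not verified it against the kaggle-cli source.

```python
from pathlib import Path


def set_aside_config(config_path):
    """Rename a config file to <name>.bak; return the backup path, or None if absent."""
    config_path = Path(config_path).expanduser()
    if not config_path.exists():
        return None
    backup = config_path.with_name(config_path.name + ".bak")
    # replace() overwrites an existing backup file of the same name
    config_path.replace(backup)
    return backup
```

Calling `set_aside_config("~/.kaggle-cli/config")` and then re-running the download should have the same effect as deleting the stored settings.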

'NoneType' object has no attribute 'find_all'

is the error I am getting, even after running

pip install --upgrade kaggle-cli

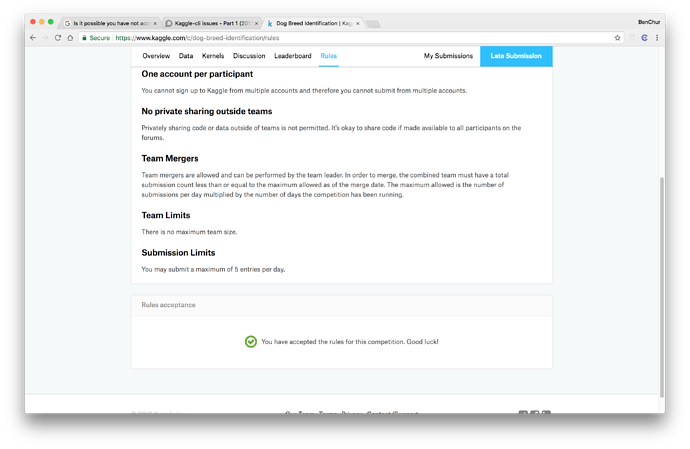

I had the same issue even after upgrading pip and kaggle-cli. Other reasons why the issue may persist:

i) You have not accepted the terms and conditions of the competition

ii) You might not have written the competition’s name in all lowercase (e.g. dog-breed-identification)

This worked for me

Thanks, I ended up getting it to work!

As of a totally fresh install of kaggle-cli today on a Paperspace Ubuntu instance, I am unable to download the dog-breed-identification dataset either.

- I’ve tried using both my email address and my username

- I’ve deleted the ~/.kaggle-cli/config file

- I’ve logged into the kaggle website as both my username and my email address and made sure I accepted their disclaimer.

Does anyone know of any other possible solutions?

Greetings!

This is my first post on Discourse. Please let me know if I need to make changes.

I’m attempting to use the kaggle-cli tool on my AWS account. Everything appears to work correctly, except that when I attempt to download the files from a past competition (which is now closed) using:

kg download -u username -p password -c sberbank-russian-housing-market -f train.csv.zip

I get the error:

train.csv.zip already downloaded!

But I see nothing in my directory that corresponds to the above file. I find this very confusing. I had in the past used the ‘kg’ command to download the data for the dog-breed-identification competition, and it worked fine. Does anyone have any thoughts on this?

Thank you for your time!

I ended up switching to the command-line-interface supported by Kaggle. This was accomplished by downloading the kaggle.json file to my home computer, changing directory to the .ssh directory, and then using:

scp -i awskey.pem /home/user/kaggle.json ubuntu@ipaddress:~/

to send the file to my active amazon instance. Running the command kaggle causes a .kaggle directory to be made, so I just moved the kaggle.json file into that. Then, all I had to do was type:

kaggle competitions download -c competition-name

and all of the files downloaded into ~/.kaggle/competitions/competition-name.

Don’t forget to secure your credentials, see: How to download data for Lesson 2 from Kaggle for Planet Competition
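As one way to do that, here is a minimal Python sketch that copies kaggle.json into the .kaggle directory and restricts it to owner read/write, the equivalent of `chmod 600`. The function name `install_kaggle_credentials` and its parameters are hypothetical, and it assumes a POSIX system such as the Ubuntu instance above.

```python
import os
import shutil
from pathlib import Path


def install_kaggle_credentials(src, kaggle_dir="~/.kaggle"):
    """Copy kaggle.json into kaggle_dir and make it readable by the owner only."""
    src = Path(src).expanduser()
    dest_dir = Path(kaggle_dir).expanduser()
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / "kaggle.json"
    shutil.copyfile(src, dest)
    # 0o600: owner may read and write; group and others get no access
    os.chmod(dest, 0o600)
    return dest
```

For example, `install_kaggle_credentials("~/kaggle.json")` would place the file at `~/.kaggle/kaggle.json` with tightened permissions.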