Would be nice to know as folks prep for next week.

You may check this tweet: https://mobile.twitter.com/jeremyphoward/status/1104096530103885825

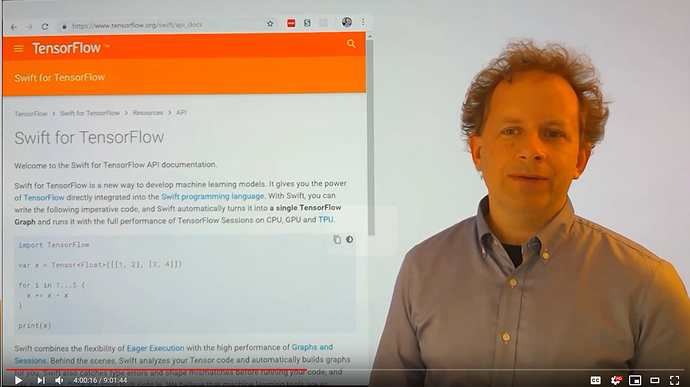

I also read somewhere (cannot find the source) that Swift for TensorFlow will take up 2 lessons in part 2.

Yes, I think it was announced at the TF Dev Summit that Chris Lattner (creator of the Swift language) will be teaching the last 2 lessons, which will cover Swift for TensorFlow.

And some more was revealed by Jeremy here: https://twitter.com/jeremyphoward/status/1105916794978484224

Wow great progress on JIT from the @PyTorch team - I just managed to get a BatchNorm layer that I wrote from scratch running nearly as fast as cudnn’s! Still rough edges, but the foundations are there and looking great. For details, join our next course!

We’ll basically be trying to write as much of fastai from scratch as we can. First in Python (5 weeks) then in Swift (2 weeks). We’ll be using the process to learn about the papers that are implemented there, how and why things are implemented as they are - and even rebuilding much of pytorch from scratch too (hence the above tweet).

Sounds intense! (and like a lot of fun!)

YES !!!

Sounds fun.

Are we going to be covering things like seq2seq models/data/training as part of this?

In previous years, part 2 has always focused on more advanced topics such as seq2seq, object detection, and GANs, so I wonder if and where these are going to fit into part 2 this time.

Also as an aside, is there a reason why the TF folks chose Swift over a more established language like C#?

Thanks

The team has written a rather detailed document answering this question here:

- https://github.com/tensorflow/swift/blob/master/docs/WhySwiftForTensorFlow.md

- https://github.com/tensorflow/swift/blob/master/docs/WhySwiftForTensorFlow.md#evaluating-swift-against-our-language-properties

Also see the recent talk at the TF Dev Summit: https://www.youtube.com/watch?v=s65BigoMV_I

(Kinda bummed that Rust didn’t make the cut because of usability concerns, but Swift is a very nice, modern language too, and it allows for a gradual ramp-up while learning. Also, extremely happy that the team didn’t settle on Go.)

So excited for this!

Yes, sequence to sequence and object detection will be included. We already covered GANs in part 1.

Wow, that's exciting.

It would also be great to spend a bit of time on deployment and scaling questions, like ImageNet training, distributed computation, etc. I know there are a few repositories under the fastai organization devoted to these topics, but I would like to discuss them during lectures as well.

From a podcast with Adam Paszke, one of the PyTorch creators, I’ve learned that a lot of PyTorch development effort is going into the production side, including integration with the Caffe2 framework. Not sure if that work is ready though…

I’m certainly hoping to cover some of that.

Would be great if we could cover Transformers as well.

We will!

For version 3 part 1 I used Anaconda with Python 3.6.8. Is this fine for the part 2 non-Swift environment? I ask because nightly Swift installs on Ubuntu 18.04 require the native python3. Thanks
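
In case it helps anyone comparing the two setups, here is a rough sketch for checking which Python a notebook or script is actually running (Anaconda vs. the system python3). The function names `describe_interpreter` and `looks_like_conda` are made up for this example, and the `conda-meta` check is only a heuristic based on the folder conda normally keeps in an environment's root, not an official API.

```python
import os
import sys


def describe_interpreter():
    """Return (version string, executable path) for the running Python."""
    version = "{}.{}.{}".format(*sys.version_info[:3])
    return version, sys.executable


def looks_like_conda():
    """Heuristic (assumption): conda environments usually contain a
    'conda-meta' directory directly under sys.prefix."""
    return os.path.isdir(os.path.join(sys.prefix, "conda-meta"))


if __name__ == "__main__":
    version, executable = describe_interpreter()
    print("Python version:", version)
    print("Executable:", executable)
    print("Looks like a conda environment:", looks_like_conda())
```

Running this once from the Anaconda environment and once with the system python3 should show two different executable paths, which makes it easier to tell which one a given tool is picking up.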