Hello everyone, is it possible to run my classifier on my own computer without an internet connection?

Sure! For small demos and proof-of-concept tests, I got mine working by following the steps for Render and then running the server.py file locally on my own machine.

If you still can’t get it to work after a bit of digging, let me know and I’ll try to help. The basic steps are: export your model, modify the existing Render deployment files, and then run the app locally. As long as you have all the required libraries installed, it should work fine.
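A minimal sketch of the "no internet" part, assuming your server.py downloads the exported model from a URL at startup and then loads it with `load_learner(path, export_file_name)` (the call that appears in the traceback later in this thread). The helper `ensure_local_model`, the folder and the file name are hypothetical placeholders; the idea is simply to copy the file produced by `learn.export()` next to the app once, and skip the download whenever that file is already on disk.

```python
from pathlib import Path
from typing import Optional
import urllib.request


def ensure_local_model(model_dir: Path, file_name: str, url: Optional[str] = None) -> Path:
    """Return the path to the exported model, downloading it only if it is missing.

    model_dir, file_name and url are hypothetical placeholders: point them at
    wherever you copied the file written by learn.export().
    """
    dest = Path(model_dir) / file_name
    if dest.exists() and dest.stat().st_size > 0:
        # Offline case: the model is already on disk, so no network access is needed.
        return dest
    if url is None:
        raise FileNotFoundError(
            f"{dest} not found; copy your exported model there or pass a download URL"
        )
    dest.parent.mkdir(parents=True, exist_ok=True)
    # Online fallback: fetch the file once and keep it for later offline runs.
    urllib.request.urlretrieve(url, str(dest))
    return dest


# Usage (hypothetical paths), then load it the same way server.py does with fastai v1:
#   model_path = ensure_local_model(Path("app/models"), "export.pkl")
#   learn = load_learner(model_path.parent, model_path.name)
```

Keeping the download as an optional fallback means the same script still works on Render, while a local run with the file already in place never touches the network.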

Here’s a link to the documentation guide:

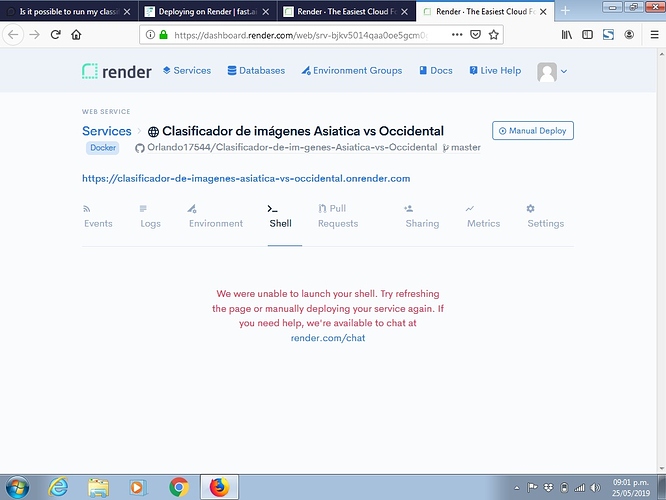

Thanks for the answer. When I try to access the terminal, I receive a message like the one in the photo:

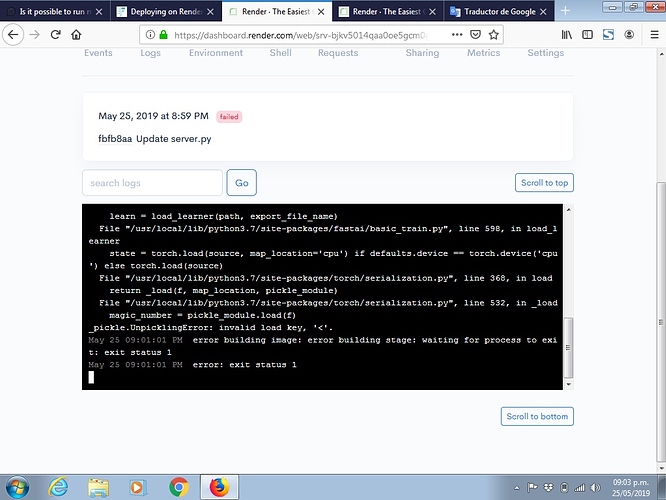

In “Manual deploy”, I got something like this:

This is the deploy log:

```
May 25 08:59:43 PM ==> Cloning from https://github.com/Orlando17544/Clasificador-de-im-genes-Asiatica-vs-Occidental…
May 25 08:59:43 PM ==> Checking out commit fbfb8aa819e094ab726d86afa6e359dadc7461c2 in branch master
May 25 08:59:45 PM INFO[0000] Downloading base image python:3.7-slim-stretch
May 25 08:59:46 PM INFO[0001] Downloading base image python:3.7-slim-stretch
May 25 08:59:55 PM INFO[0010] RUN apt-get update && apt-get install -y git python3-dev gcc && rm -rf /var/lib/apt/lists/*
May 25 09:00:11 PM INFO[0026] COPY requirements.txt .
May 25 09:00:11 PM INFO[0026] RUN pip install --upgrade pip
May 25 09:00:11 PM INFO[0026] RUN pip install --no-cache-dir --upgrade -r requirements.txt
May 25 09:00:55 PM INFO[0070] COPY app app/
May 25 09:00:55 PM INFO[0070] RUN python app/server.py
May 25 09:01:00 PM Traceback (most recent call last):
  File "app/server.py", line 45, in <module>
    learn = loop.run_until_complete(asyncio.gather(*tasks))[0]
  File "/usr/local/lib/python3.7/asyncio/base_events.py", line 584, in run_until_complete
    return future.result()
  File "app/server.py", line 33, in setup_learner
    learn = load_learner(path, export_file_name)
  File "/usr/local/lib/python3.7/site-packages/fastai/basic_train.py", line 598, in load_learner
    state = torch.load(source, map_location='cpu') if defaults.device == torch.device('cpu') else torch.load(source)
  File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 368, in load
    return _load(f, map_location, pickle_module)
  File "/usr/local/lib/python3.7/site-packages/torch/serialization.py", line 532, in _load
    magic_number = pickle_module.load(f)
_pickle.UnpicklingError: invalid load key, '<'.
May 25 09:01:01 PM error building image: error building stage: waiting for process to exit: exit status 1
May 25 09:01:01 PM error: exit status 1
```
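The last line of the traceback says the unpickler found `<` as the very first byte of the model file. Neither a legacy `torch.save` file (which starts with a pickle protocol byte) nor a newer zip-based one (which starts with `PK`) begins with `<`, so one common cause, though not the only possible one, is that the download link returned an HTML page (for example a sharing or sign-in page) instead of the exported model. A minimal stdlib-only check, where `looks_like_html` and `reproduce_error` are hypothetical helper names:

```python
import pickle
from pathlib import Path


def looks_like_html(path: Path, n_bytes: int = 512) -> bool:
    """Heuristic: True if the file starts with '<' after optional leading whitespace,
    as an HTML page does and a torch/pickle file never does."""
    with open(path, "rb") as f:
        head = f.read(n_bytes)
    return head.lstrip().startswith(b"<")


def reproduce_error() -> str:
    """Unpickle some HTML bytes to show they raise the same error as the deploy log."""
    try:
        pickle.loads(b"<!DOCTYPE html><html><body>Sign in</body></html>")
    except pickle.UnpicklingError as err:
        return str(err)
    return "no error"
```

If `looks_like_html` returns True for the downloaded file, opening it in a text editor should show which page was saved, and the URL needs to point directly at the `.pkl` file. For a purely offline run, copying the exported file into the app folder avoids the download altogether.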