Hello! I am getting this issue, and any help would be appreciated.

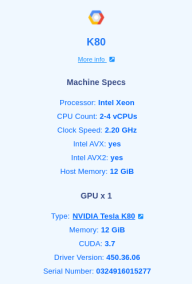

I have included the code, the stack trace, and all installed packages below. I am using Paperspace Gradient on a K80 VM.

The error is: RuntimeError: cuDNN error: CUDNN_STATUS_EXECUTION_FAILED

UPDATE: Adding `torch.backends.cudnn.enabled = False` above the code allowed it to run, but is this the right thing to do?
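One way to keep this workaround contained, sketched under assumptions: rather than turning cuDNN off for the whole session, the flag can be flipped only around the training call and restored afterwards. The helper name `cudnn_disabled` is hypothetical (it is not part of PyTorch or fastai); `torch.backends.cudnn.enabled` is the same flag mentioned in the update. With cuDNN off, PyTorch falls back to its own LSTM kernels, which usually run slower, so this is probably better viewed as a workaround than a fix.

```python
import contextlib

try:
    import torch
except ImportError:  # allows the sketch to be imported without PyTorch
    torch = None


@contextlib.contextmanager
def cudnn_disabled():
    """Hypothetical helper: turn cuDNN off inside the block, then restore it."""
    if torch is None:
        yield
        return
    previous = torch.backends.cudnn.enabled
    torch.backends.cudnn.enabled = False
    try:
        yield
    finally:
        # Restore whatever the setting was before entering the block.
        torch.backends.cudnn.enabled = previous


# Usage with the learner from this post (commented out because `learn`
# is only defined in the code further down):
# with cudnn_disabled():
#     learn.fine_tune(4, 1e-2)
```

Scoping the change this way leaves cuDNN enabled for everything else in the notebook, which makes it easier to tell which call actually needs the workaround.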

UPDATE 2: Switching to a P5000 machine worked.
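Since the same code runs on the second machine but fails on the first, it may help to compare what PyTorch reports on each one. The following is a minimal sketch that uses only standard PyTorch calls; `gpu_report` is a hypothetical helper name. The K80 is an older Kepler-generation card and the P5000 is Pascal, so one possibility (not confirmed here) is that the installed CUDA/cuDNN build does not fully support the older GPU.

```python
try:
    import torch
except ImportError:  # allows the sketch to run without PyTorch
    torch = None


def gpu_report():
    """Collect the versions and GPU details that matter for cuDNN errors."""
    report = {"torch": None, "cuda_available": False}
    if torch is None:
        return report
    report["torch"] = torch.__version__
    report["cuda_build"] = torch.version.cuda  # None on CPU-only builds
    report["cuda_available"] = torch.cuda.is_available()
    if report["cuda_available"]:
        report["cudnn"] = torch.backends.cudnn.version()
        report["device"] = torch.cuda.get_device_name(0)
        # (major, minor) compute capability of the first visible GPU
        report["capability"] = torch.cuda.get_device_capability(0)
    return report


print(gpu_report())
```

Running this on both machines and comparing the two dictionaries should show whether only the GPU differs or whether the CUDA and cuDNN versions differ as well.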

Code (I tried with bs=32 as well):

```python
from fastai.text.all import *

dls = TextDataLoaders.from_folder(untar_data(URLs.IMDB), valid='test', bs=16)
learn = text_classifier_learner(dls, AWD_LSTM, drop_mult=0.5, metrics=accuracy)

learn.fine_tune(4, 1e-2)
```

Output / Stacktrace:

```text
epoch train_loss valid_loss accuracy time
0 0.000000 00:00
---------------------------------------------------------------------------
RuntimeError Traceback (most recent call last)
<ipython-input-24-b19ad903618e> in <module>
5 learn = text_classifier_learner(dls, AWD_LSTM, drop_mult=0.5, metrics=accuracy)
6
----> 7 learn.fine_tune(4, 1e-2)

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/callback/schedule.py in fine_tune(self, epochs, base_lr, freeze_epochs, lr_mult, pct_start, div, **kwargs)
155 "Fine tune with `freeze` for `freeze_epochs` then with `unfreeze` from `epochs` using discriminative LR"
156 self.freeze()
--> 157 self.fit_one_cycle(freeze_epochs, slice(base_lr), pct_start=0.99, **kwargs)
158 base_lr /= 2
159 self.unfreeze()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/callback/schedule.py in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt)
110 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),
111 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}
--> 112 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd)
113
114 # Cell

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)
204 self.opt.set_hypers(lr=self.lr if lr is None else lr)
205 self.n_epoch = n_epoch
--> 206 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)
207
208 def _end_cleanup(self): self.dl,self.xb,self.yb,self.pred,self.loss = None,(None,),(None,),None,None

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
153
154 def _with_events(self, f, event_type, ex, final=noop):
--> 155 try: self(f'before_{event_type}') ;f()
156 except ex: self(f'after_cancel_{event_type}')
157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_fit(self)
195 for epoch in range(self.n_epoch):
196 self.epoch=epoch
--> 197 self._with_events(self._do_epoch, 'epoch', CancelEpochException)
198
199 def fit(self, n_epoch, lr=None, wd=None, cbs=None, reset_opt=False):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
153
154 def _with_events(self, f, event_type, ex, final=noop):
--> 155 try: self(f'before_{event_type}') ;f()
156 except ex: self(f'after_cancel_{event_type}')
157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_epoch(self)
189
190 def _do_epoch(self):
--> 191 self._do_epoch_train()
192 self._do_epoch_validate()
193

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_epoch_train(self)
181 def _do_epoch_train(self):
182 self.dl = self.dls.train
--> 183 self._with_events(self.all_batches, 'train', CancelTrainException)
184
185 def _do_epoch_validate(self, ds_idx=1, dl=None):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
153
154 def _with_events(self, f, event_type, ex, final=noop):
--> 155 try: self(f'before_{event_type}') ;f()
156 except ex: self(f'after_cancel_{event_type}')
157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in all_batches(self)
159 def all_batches(self):
160 self.n_iter = len(self.dl)
--> 161 for o in enumerate(self.dl): self.one_batch(*o)
162
163 def _do_one_batch(self):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in one_batch(self, i, b)
177 self.iter = i
178 self._split(b)
--> 179 self._with_events(self._do_one_batch, 'batch', CancelBatchException)
180
181 def _do_epoch_train(self):

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _with_events(self, f, event_type, ex, final)
153
154 def _with_events(self, f, event_type, ex, final=noop):
--> 155 try: self(f'before_{event_type}') ;f()
156 except ex: self(f'after_cancel_{event_type}')
157 finally: self(f'after_{event_type}') ;final()

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/learner.py in _do_one_batch(self)
162
163 def _do_one_batch(self):
--> 164 self.pred = self.model(*self.xb)
165 self('after_pred')
166 if len(self.yb): self.loss = self.loss_func(self.pred, *self.yb)

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)
725 result = self._slow_forward(*input, **kwargs)
726 else:
--> 727 result = self.forward(*input, **kwargs)
728 for hook in itertools.chain(
729 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/container.py in forward(self, input)
115 def forward(self, input):
116 for module in self:
--> 117 input = module(input)
118 return input
119

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)
725 result = self._slow_forward(*input, **kwargs)
726 else:
--> 727 result = self.forward(*input, **kwargs)
728 for hook in itertools.chain(
729 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/text/models/core.py in forward(self, input)
79 #Note: this expects that sequence really begins on a round multiple of bptt
80 real_bs = (input[:,i] != self.pad_idx).long().sum()
---> 81 o = self.module(input[:real_bs,i: min(i+self.bptt, sl)])
82 if self.max_len is None or sl-i <= self.max_len:
83 outs.append(o)

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)
725 result = self._slow_forward(*input, **kwargs)
726 else:
--> 727 result = self.forward(*input, **kwargs)
728 for hook in itertools.chain(
729 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/text/models/awdlstm.py in forward(self, inp, from_embeds)
104 new_hidden = []
105 for l, (rnn,hid_dp) in enumerate(zip(self.rnns, self.hidden_dps)):
--> 106 output, new_h = rnn(output, self.hidden[l])
107 new_hidden.append(new_h)
108 if l != self.n_layers - 1: output = hid_dp(output)

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)
725 result = self._slow_forward(*input, **kwargs)
726 else:
--> 727 result = self.forward(*input, **kwargs)
728 for hook in itertools.chain(
729 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/text/models/awdlstm.py in forward(self, *args)
51 # To avoid the warning that comes because the weights aren't flattened.
52 warnings.simplefilter("ignore", category=UserWarning)
---> 53 return self.module(*args)
54
55 def reset(self):

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/module.py in _call_impl(self, *input, **kwargs)
725 result = self._slow_forward(*input, **kwargs)
726 else:
--> 727 result = self.forward(*input, **kwargs)
728 for hook in itertools.chain(
729 _global_forward_hooks.values(),

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/nn/modules/rnn.py in forward(self, input, hx)
579 self.check_forward_args(input, hx, batch_sizes)
580 if batch_sizes is None:
--> 581 result = _VF.lstm(input, hx, self._flat_weights, self.bias, self.num_layers,
582 self.dropout, self.training, self.bidirectional, self.batch_first)
583 else:

/opt/conda/envs/fastai/lib/python3.8/site-packages/fastai/torch_core.py in __torch_function__(self, func, types, args, kwargs)
317 # if func.__name__[0]!='_': print(func, types, args, kwargs)
318 # with torch._C.DisableTorchFunction(): ret = _convert(func(*args, **(kwargs or {})), self.__class__)
--> 319 ret = super().__torch_function__(func, types, args=args, kwargs=kwargs)
320 if isinstance(ret, TensorBase): ret.set_meta(self, as_copy=True)
321 return ret

/opt/conda/envs/fastai/lib/python3.8/site-packages/torch/tensor.py in __torch_function__(cls, func, types, args, kwargs)
993
994 with _C.DisableTorchFunction():
--> 995 ret = func(*args, **kwargs)
996 return _convert(ret, cls)
997

RuntimeError: cuDNN error: CUDNN_STATUS_EXECUTION_FAILED
```
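The trace ends inside PyTorch's own LSTM call (`_VF.lstm` in `torch/nn/modules/rnn.py`), so one way to narrow things down is to run a bare `nn.LSTM` forward pass with no fastai code involved. This is only a sketch: `lstm_smoke_test` is a hypothetical helper, and the tensor sizes are arbitrary small values, not the ones `AWD_LSTM` uses.

```python
try:
    import torch
except ImportError:  # allows the sketch to run without PyTorch
    torch = None


def lstm_smoke_test(device=None):
    """Run one forward pass through a small nn.LSTM and return the output shape."""
    if torch is None:
        return None
    if device is None:
        device = "cuda" if torch.cuda.is_available() else "cpu"
    # Hypothetical sizes, chosen only to keep the test small.
    rnn = torch.nn.LSTM(input_size=8, hidden_size=16, batch_first=True).to(device)
    x = torch.randn(4, 10, 8, device=device)  # (batch, sequence, features)
    output, _ = rnn(x)  # on a GPU this goes through cuDNN unless it is disabled
    return tuple(output.shape)


print(lstm_smoke_test())
```

If this also raises `CUDNN_STATUS_EXECUTION_FAILED` on the K80 machine, the problem probably sits in the PyTorch/CUDA/cuDNN setup rather than in fastai or in the IMDB data.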

Packages:

```text
adal==1.2.5
argon2-cffi @ file:///home/conda/feedstock_root/build_artifacts/argon2-cffi_1605217004767/work
async-generator==1.10
attrs @ file:///home/conda/feedstock_root/build_artifacts/attrs_1605083924122/work
azure-cognitiveservices-search-imagesearch==2.0.0
azure-common==1.1.26
backcall @ file:///home/conda/feedstock_root/build_artifacts/backcall_1592338393461/work
backports.functools-lru-cache==1.6.1
bleach @ file:///home/conda/feedstock_root/build_artifacts/bleach_1600454382015/work
blis==0.4.1
brotlipy==0.7.0
catalogue @ file:///home/conda/feedstock_root/build_artifacts/catalogue_1605613584677/work
certifi==2020.11.8
cffi @ file:///home/conda/feedstock_root/build_artifacts/cffi_1602537222527/work
chardet @ file:///home/conda/feedstock_root/build_artifacts/chardet_1602255302199/work
cryptography @ file:///home/conda/feedstock_root/build_artifacts/cryptography_1604179079864/work
cycler==0.10.0
cymem @ file:///home/conda/feedstock_root/build_artifacts/cymem_1604919356432/work
dataclasses==0.6
decorator==4.4.2
defusedxml==0.6.0
entrypoints @ file:///home/conda/feedstock_root/build_artifacts/entrypoints_1605121927639/work/dist/entrypoints-0.3-py2.py3-none-any.whl
fastai==2.1.9
fastai2==0.0.30
fastbook==0.0.16
fastcore==1.3.12
fastprocess==2.0.0
fastprogress @ file:///home/conda/feedstock_root/build_artifacts/fastprogress_1597932925331/work
future==0.18.2
graphviz==0.15
idna @ file:///home/conda/feedstock_root/build_artifacts/idna_1593328102638/work
importlib-metadata @ file:///home/conda/feedstock_root/build_artifacts/importlib-metadata_1602263269022/work
install==1.3.4
ipykernel @ file:///home/conda/feedstock_root/build_artifacts/ipykernel_1605455374814/work/dist/ipykernel-5.3.4-py3-none-any.whl
ipython @ file:///home/conda/feedstock_root/build_artifacts/ipython_1604159561527/work
ipython-genutils==0.2.0
ipywidgets @ file:///home/conda/feedstock_root/build_artifacts/ipywidgets_1599554010055/work
isodate==0.6.0
jedi @ file:///home/conda/feedstock_root/build_artifacts/jedi_1605054524035/work
Jinja2==2.11.2
joblib @ file:///home/conda/feedstock_root/build_artifacts/joblib_1601671685479/work
jsonschema @ file:///home/conda/feedstock_root/build_artifacts/jsonschema_1602551949684/work
jupyter-client @ file:///home/conda/feedstock_root/build_artifacts/jupyter_client_1598486169312/work
jupyter-console @ file:///home/conda/feedstock_root/build_artifacts/jupyter_console_1598728807792/work
jupyter-core @ file:///home/conda/feedstock_root/build_artifacts/jupyter_core_1605735009305/work
jupyterlab-pygments @ file:///home/conda/feedstock_root/build_artifacts/jupyterlab_pygments_1601375948261/work
jupyterthemes==0.20.0
kiwisolver @ file:///home/conda/feedstock_root/build_artifacts/kiwisolver_1604322295622/work
lesscpy==0.14.0
MarkupSafe @ file:///home/conda/feedstock_root/build_artifacts/markupsafe_1602267312178/work
matplotlib @ file:///home/conda/feedstock_root/build_artifacts/matplotlib-suite_1605180228501/work
mistune @ file:///home/conda/feedstock_root/build_artifacts/mistune_1605115651871/work
msrest==0.6.19
msrestazure==0.6.4
murmurhash==1.0.4
nbclient @ file:///home/conda/feedstock_root/build_artifacts/nbclient_1602859080374/work
nbconvert==5.6.1
nbdev==1.1.5
nbformat @ file:///home/conda/feedstock_root/build_artifacts/nbformat_1602732862338/work
nest-asyncio @ file:///home/conda/feedstock_root/build_artifacts/nest-asyncio_1605195931949/work
notebook @ file:///home/conda/feedstock_root/build_artifacts/notebook_1605103633466/work
numpy @ file:///home/conda/feedstock_root/build_artifacts/numpy_1604945996350/work
nvidia-ml-py3==7.352.0
oauthlib==3.1.0
olefile @ file:///home/conda/feedstock_root/build_artifacts/olefile_1602866521163/work
packaging @ file:///home/conda/feedstock_root/build_artifacts/packaging_1589925210001/work
pandas==1.1.4
pandocfilters==1.4.2
parso @ file:///home/conda/feedstock_root/build_artifacts/parso_1595548966091/work
pexpect @ file:///home/conda/feedstock_root/build_artifacts/pexpect_1602535608087/work
pickleshare @ file:///home/conda/feedstock_root/build_artifacts/pickleshare_1602536217715/work
Pillow @ file:///home/conda/feedstock_root/build_artifacts/pillow_1604748700719/work
plac==0.9.6
ply==3.11
preshed @ file:///home/conda/feedstock_root/build_artifacts/preshed_1605166129992/work
prometheus-client @ file:///home/conda/feedstock_root/build_artifacts/prometheus_client_1605543085815/work
prompt-toolkit @ file:///home/conda/feedstock_root/build_artifacts/prompt-toolkit_1605053337398/work
ptyprocess==0.6.0
pycparser @ file:///home/conda/feedstock_root/build_artifacts/pycparser_1593275161868/work
Pygments @ file:///home/conda/feedstock_root/build_artifacts/pygments_1603558917696/work
PyJWT==1.7.1
pyOpenSSL==19.1.0
pyparsing==2.4.7
PyQt5==5.12.3
PyQt5-sip==4.19.18
PyQtChart==5.12
PyQtWebEngine==5.12.1
pyrsistent @ file:///home/conda/feedstock_root/build_artifacts/pyrsistent_1605115595652/work
PySocks @ file:///home/conda/feedstock_root/build_artifacts/pysocks_1602326928339/work
python-dateutil==2.8.1
pytz @ file:///home/conda/feedstock_root/build_artifacts/pytz_1604321279890/work
PyYAML==5.3.1
pyzmq==20.0.0
qtconsole @ file:///home/conda/feedstock_root/build_artifacts/qtconsole_1599147533948/work
QtPy==1.9.0
requests @ file:///home/conda/feedstock_root/build_artifacts/requests_1605186911681/work
requests-oauthlib==1.3.0
scikit-learn @ file:///home/conda/feedstock_root/build_artifacts/scikit-learn_1604232448678/work
scipy @ file:///home/conda/feedstock_root/build_artifacts/scipy_1604304779838/work
Send2Trash==1.5.0
sentencepiece==0.1.94
six @ file:///home/conda/feedstock_root/build_artifacts/six_1590081179328/work
spacy @ file:///home/conda/feedstock_root/build_artifacts/spacy_1605692171608/work
srsly @ file:///home/conda/feedstock_root/build_artifacts/srsly_1605085673973/work
terminado @ file:///home/conda/feedstock_root/build_artifacts/terminado_1602679586280/work
testpath==0.4.4
thinc @ file:///home/conda/feedstock_root/build_artifacts/thinc_1605620876750/work
threadpoolctl @ file:///tmp/tmp79xdzxkt/threadpoolctl-2.1.0-py3-none-any.whl
torch==1.7.0
torchvision==0.8.1
tornado @ file:///home/conda/feedstock_root/build_artifacts/tornado_1604105045397/work
tqdm @ file:///home/conda/feedstock_root/build_artifacts/tqdm_1605543106900/work
traitlets @ file:///home/conda/feedstock_root/build_artifacts/traitlets_1602771532708/work
typing-extensions @ file:///home/conda/feedstock_root/build_artifacts/typing_extensions_1602702424206/work
urllib3 @ file:///home/conda/feedstock_root/build_artifacts/urllib3_1603125704209/work
utils==1.0.1
wasabi @ file:///home/conda/feedstock_root/build_artifacts/wasabi_1600272362626/work
wcwidth @ file:///home/conda/feedstock_root/build_artifacts/wcwidth_1600965781394/work
webencodings==0.5.1
widgetsnbextension @ file:///home/conda/feedstock_root/build_artifacts/widgetsnbextension_1605475534911/work
zipp @ file:///home/conda/feedstock_root/build_artifacts/zipp_1603668650351/work
```

VM Specs: