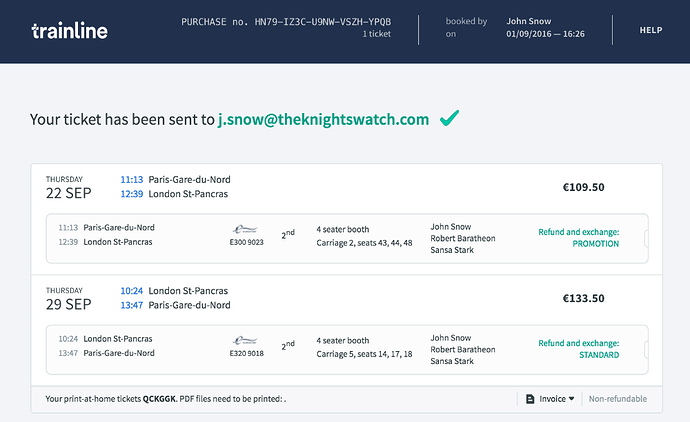

Hello all. I’m trying to design a pipeline to extract label-value pairs (e.g. DATE: 9-22-16) from screenshots of train ticket confirmation pages like this one:

The screenshots are user-submitted, so the precise position of each field varies from image to image. I understand that this is an OCR (text recognition) task, but simply running the whole image through Tesseract isn’t producing great results. I’m thinking of first identifying the regions that hold specific information, such as the departure date or the price of the departure trip, and then running OCR on each region individually to get the value associated with each label. Is this approach viable, and how would I go about identifying the regions?

I considered object recognition, but the position and format of a region matter more for identifying it than the specific characters it contains. For example, the only difference between the return and outgoing trips in the above image is their position (outgoing is above return). Are there approaches suited to this sort of task?
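
To make the position-based idea concrete, here is a rough sketch of the matching step I have in mind. It is not a working pipeline: it assumes some earlier step (for example Tesseract's word-level output) has already produced one bounding box per word, and the `Word` type, the function names, and the "nearest word to the right of the label" rule are all my own placeholders rather than anything a library provides.

```python
from typing import List, NamedTuple, Optional


class Word(NamedTuple):
    """One recognized word and its bounding box in pixels (hypothetical type)."""
    text: str
    left: int
    top: int
    width: int
    height: int


def value_right_of(label: Word, words: List[Word]) -> Optional[str]:
    """Return the text of the nearest word to the right of `label` on its line.

    A word counts as being on the same line when the label's vertical
    midpoint falls inside the word's bounding box.
    """
    mid_y = label.top + label.height / 2
    candidates = [
        w for w in words
        if w.left > label.left and w.top <= mid_y <= w.top + w.height
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda w: w.left).text


def extract_trips(words, label_text="DATE:", names=("outgoing", "return")):
    """Pair each occurrence of `label_text` with a trip name by vertical order.

    Assumption: the outgoing trip is printed above the return trip, so the
    topmost matching label belongs to the outgoing trip.
    """
    labels = sorted(
        (w for w in words if w.text.upper() == label_text),
        key=lambda w: w.top,
    )
    return {name: value_right_of(lbl, words) for name, lbl in zip(names, labels)}
```

Calling `extract_trips(words)` on the word boxes of one screenshot would return a dict keyed by `"outgoing"` and `"return"`. The rule only looks at relative position, so it should not care where on the page the block of fields sits, but it would break if a value spans several words or the layout puts values below their labels.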

Any information about similar tasks would also be helpful.

Thanks.