I was playing with dynamic sparse training techniques around the time I found nbdev, and the result is a tiny new “fastai-addon” library: fastsparse (docs).

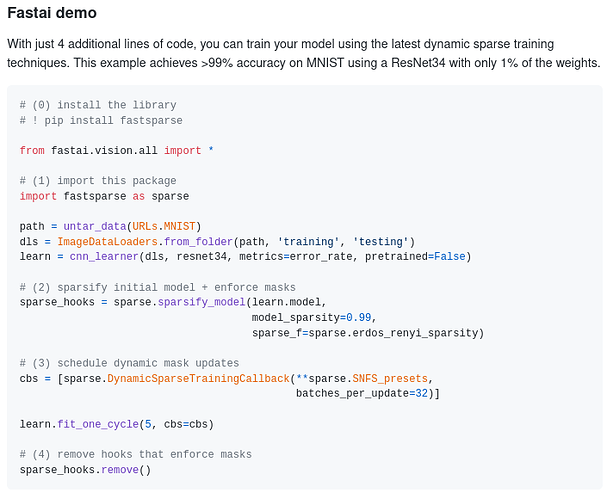

In only 4 lines of code (okay…5 if you count pip-installing the library), you can sparsify any PyTorch model and run SOTA sparse-training techniques, including Sparse Networks From Scratch (SNFS) and Rigging the Lottery (RigL). The addon also makes it easy to build your own custom sparse training techniques: you just define a new weight-scoring heuristic that tells the model which weights to drop and/or grow (see the docs for an example).
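For anyone curious what a drop/grow step driven by a scoring heuristic looks like, here is a minimal, framework-free sketch in plain Python. To be clear, this is not fastsparse's API: the names `drop_and_grow`, `magnitude_score`, and `gradient_score` are hypothetical and made up for illustration. It assumes a flat list of weights, drops the `k` active weights with the lowest drop score (weight magnitude by default), and grows the `k` previously inactive weights with the highest grow score (gradient magnitude by default, roughly in the spirit of RigL).

```python
from typing import Callable, List, Tuple

# A scoring heuristic maps (weight, gradient) to a number.
# Hypothetical type alias for this sketch only.
Score = Callable[[float, float], float]


def magnitude_score(weight: float, grad: float) -> float:
    """Score a connection by the absolute value of its weight."""
    return abs(weight)


def gradient_score(weight: float, grad: float) -> float:
    """Score a connection by the absolute value of its gradient."""
    return abs(grad)


def drop_and_grow(
    weights: List[float],
    grads: List[float],
    mask: List[bool],
    k: int,
    drop_score: Score = magnitude_score,
    grow_score: Score = gradient_score,
) -> Tuple[List[float], List[bool]]:
    """Return new (weights, mask) after one drop/grow step.

    The number of active connections is unchanged, so sparsity stays fixed.
    """
    active = [i for i, m in enumerate(mask) if m]
    inactive = [i for i, m in enumerate(mask) if not m]
    k = min(k, len(active), len(inactive))

    # Drop: the k active connections with the LOWEST drop score.
    to_drop = sorted(active, key=lambda i: drop_score(weights[i], grads[i]))[:k]
    # Grow: the k previously inactive connections with the HIGHEST grow score.
    to_grow = sorted(
        inactive, key=lambda i: grow_score(weights[i], grads[i]), reverse=True
    )[:k]

    new_weights, new_mask = list(weights), list(mask)
    for i in to_drop:
        new_mask[i] = False
        new_weights[i] = 0.0
    for i in to_grow:
        new_mask[i] = True
        new_weights[i] = 0.0  # assumption: regrown weights start at zero
    return new_weights, new_mask


weights = [0.9, 0.0, -0.05, 0.0, 0.4, 0.0]
grads = [0.1, 0.7, 0.2, 0.05, 0.3, 0.6]
mask = [True, False, True, False, True, False]

new_weights, new_mask = drop_and_grow(weights, grads, mask, k=1)
print(new_mask)  # [True, True, False, False, True, False]
```

Swapping in a different heuristic is just a matter of passing another function, e.g. `drop_score=lambda w, g: abs(w * g)`. In a real training loop the same idea would be applied per layer to tensors rather than to Python lists.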

I hope some folks here might find this fun to experiment with! And if you really, really like it, don’t forget to drop a star on the repo!

Oh, one more thing. Nbdev is amazing. Still kinda reeling that I actually went from messy exploratory code to a continuously integrated, pip-installable, fully documented library in just one day! (Okay, okay…plus a few more days to tidy up, but who’s counting? The work is never quite done.)