There’s a new paper out from Facebook Research on parallelizing SGD across multiple GPUs with large batches, which lets them train ResNet on the full ImageNet dataset in 1 hour. Pretty cool stuff!

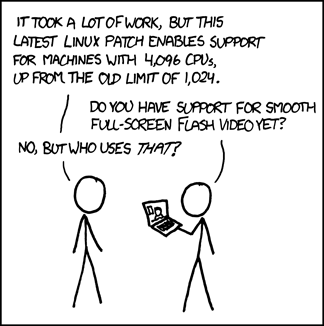

I suspect this xkcd comic will not be as funny in a few months: