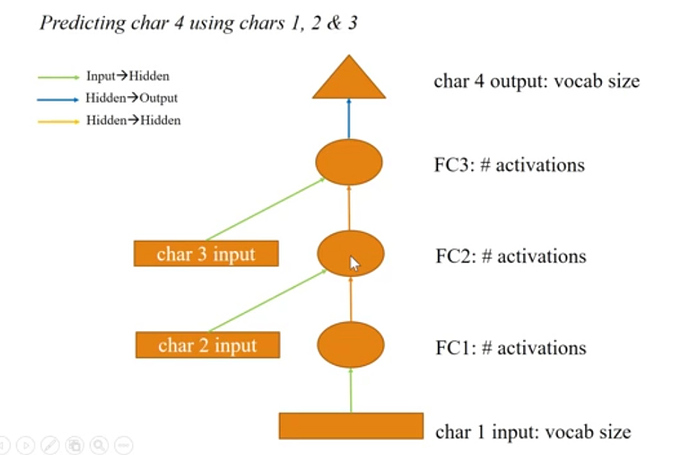

I found the neural network diagrams in lesson 6 a bit difficult to understand and to map to the given code. The comments in the code say the green arrows correspond to the input-to-hidden layer operation, while the orange arrows correspond to the hidden-to-hidden layer operation. But in the code we apply `l_hidden` three times, while the diagram only has two orange arrows.
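Writing the `forward` code below as a recurrence may make the comparison easier. The notation is my own: $e$ is the embedding `self.e`; $\ell_{\text{in}}$, $\ell_{\text{hidden}}$ and $\ell_{\text{out}}$ are the linear layers `l_in`, `l_hidden` and `l_out`; and $c_1, c_2, c_3$ are the three input characters.

$$
\begin{aligned}
x_t &= \operatorname{ReLU}\big(\ell_{\text{in}}(e(c_t))\big), \quad t = 1, 2, 3 \\
h_0 &= \mathbf{0} \\
h_t &= \tanh\big(\ell_{\text{hidden}}(h_{t-1} + x_t)\big), \quad t = 1, 2, 3 \\
\hat{y} &= \log \operatorname{softmax}\big(\ell_{\text{out}}(h_3)\big)
\end{aligned}
$$

Since $h_0 = \mathbf{0}$, the first update is simply $h_1 = \tanh\big(\ell_{\text{hidden}}(x_1)\big)$: the first of the three `l_hidden` calls has no earlier hidden state to combine with.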

Could anybody please clear up this confusion? The diagram is at the top of this post, and here's the code:

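```python
def forward(self, c1, c2, c3):
    # Input -> hidden (l_in): embed each character, then linear layer + ReLU
    in1 = F.relu(self.l_in(self.e(c1)))
    in2 = F.relu(self.l_in(self.e(c2)))
    in3 = F.relu(self.l_in(self.e(c3)))

    # Initial hidden state: all zeros, same shape as the input activations
    h = V(torch.zeros(in1.size()).cuda())

    # Hidden -> hidden (l_hidden): applied three times, once per input character
    h = F.tanh(self.l_hidden(h+in1))
    h = F.tanh(self.l_hidden(h+in2))
    h = F.tanh(self.l_hidden(h+in3))

    # Hidden -> output: l_out followed by log-softmax
    return F.log_softmax(self.l_out(h))
```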
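To check the mapping numerically, here is a minimal NumPy sketch. It assumes plain weight matrices can stand in for the lesson's `nn.Embedding` and `nn.Linear` layers; the sizes, the random weights and the helper names `unrolled` and `looped` are hypothetical, and `l_out`/`log_softmax` are left out because they don't affect the hidden-state question. It shows that with a zero initial `h` the first `l_hidden` call only ever sees `in1`, and that the three explicit lines are the same step repeated, so they can also be written as a loop.

```python
import numpy as np

# Hypothetical sizes and random weights, chosen only for illustration.
VOCAB, N_FAC, N_HIDDEN = 10, 4, 8
rng = np.random.default_rng(0)

# NumPy stand-ins (an assumption) for the lesson's embedding and linear layers.
emb = rng.normal(size=(VOCAB, N_FAC))
W_in, b_in = rng.normal(size=(N_HIDDEN, N_FAC)), rng.normal(size=N_HIDDEN)
W_h, b_h = rng.normal(size=(N_HIDDEN, N_HIDDEN)), rng.normal(size=N_HIDDEN)

def l_in(x):
    return W_in @ x + b_in  # input -> hidden

def l_hidden(x):
    return W_h @ x + b_h  # hidden -> hidden

def relu(x):
    return np.maximum(x, 0.0)

def unrolled(c1, c2, c3):
    """Mirrors the three explicit l_hidden lines of forward() (no l_out)."""
    in1, in2, in3 = (relu(l_in(emb[c])) for c in (c1, c2, c3))
    h = np.zeros(N_HIDDEN)          # initial hidden state is all zeros
    h = np.tanh(l_hidden(h + in1))  # h is zero here, so this sees only in1
    h = np.tanh(l_hidden(h + in2))
    h = np.tanh(l_hidden(h + in3))
    return h

def looped(cs):
    """The same recurrence written as a loop over the input characters."""
    h = np.zeros(N_HIDDEN)
    for c in cs:
        h = np.tanh(l_hidden(h + relu(l_in(emb[c]))))
    return h

h_a = unrolled(1, 2, 3)

# With h = 0, the first step is just tanh(l_hidden(in1)).
in1 = relu(l_in(emb[1]))
first_with_zero = np.tanh(l_hidden(np.zeros(N_HIDDEN) + in1))
first_without_h = np.tanh(l_hidden(in1))

print(np.allclose(first_with_zero, first_without_h))  # True
print(np.allclose(h_a, looped([1, 2, 3])))            # True
```

Under these assumptions both checks print `True`: the zero initial state makes the first hidden-to-hidden step depend on the first character alone, and unrolling three identical steps gives the same result as looping over the characters.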