Has anyone tried deploying a fastai tabular data solution with Flask?

If so, how did you make predictions with the model loaded into the .py file and output the results?

I have not done tabular specifically yet, but deployment is the same for all fastai models: export the learner, then load it when you want to predict. I'd recommend something along these lines: specify the fields people fill in, convert all of that into a pandas row in the background, and pass it to learn.predict(), since that's all we are doing for any model. Here is a GitHub repo where someone deployed an image classifier, and it should be pretty easy to restructure it for tabular models. If you have issues, let me know (I've deployed both NLP and image classification models at this point).
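A minimal sketch of that flow in Flask, assuming fastai v1 and a learner saved with `learn.export()` to `export.pkl`. The column names in `CAT_NAMES`/`CONT_NAMES` are hypothetical placeholders for whatever you trained on, and the model is passed in as a plain function so the web layer can be exercised without fastai installed.

```python
from flask import Flask, jsonify, request

# Hypothetical schema: replace with the cat_names / cont_names used in training.
CAT_NAMES = ["workclass", "education"]
CONT_NAMES = ["age", "hours-per-week"]


def form_to_row(form):
    """Turn submitted form fields into a dict: categoricals as str, continuous as float."""
    row = {name: form[name] for name in CAT_NAMES}
    row.update({name: float(form[name]) for name in CONT_NAMES})
    return row


def create_app(predict_fn):
    """Build a Flask app whose /predict route calls predict_fn(row) on one form submission."""
    app = Flask(__name__)

    @app.route("/predict", methods=["POST"])
    def predict():
        try:
            row = form_to_row(request.form)
        except (KeyError, ValueError) as exc:  # missing field or non-numeric value
            return jsonify(error=f"bad input: {exc}"), 400
        return jsonify(prediction=predict_fn(row))

    return app


def build_fastai_predictor(path=".", file="export.pkl"):
    """Assumes fastai v1: load an exported learner and wrap learn.predict for one row."""
    import pandas as pd
    from fastai.basic_train import load_learner

    learn = load_learner(path, file)

    def predict_fn(row):
        # Tabular learners predict one row at a time, given as a pandas Series.
        pred_class, _, probs = learn.predict(pd.Series(row))
        return {"class": str(pred_class), "probs": probs.tolist()}

    return predict_fn
```

To serve the real model, something like `create_app(build_fastai_predictor("path/to/export_dir")).run(port=5000)` should work; a form POST to `/predict` with one field per column then returns the prediction as JSON.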

Or let me step back: how much data are you hoping to process in one iteration, and how do you plan on getting the input data? If it's a CSV document or similar, you'd need to parse that.
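If the input arrives as CSV, one way to parse it before prediction is sketched below using only the standard library. `predict_fn` is a hypothetical per-row predictor (for example, a wrapper around `learn.predict`), and the `max_rows` cap is an assumption meant to stop a huge upload from being pushed through a row-by-row model.

```python
import csv
import io


def predict_csv(csv_text, predict_fn, cont_names, max_rows=1000):
    """Parse CSV text into row dicts and run predict_fn on each one.

    Columns listed in cont_names are converted to float; all other columns
    stay as strings. Raises ValueError once more than max_rows rows are seen.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    predictions = []
    for i, row in enumerate(reader):
        if i >= max_rows:
            raise ValueError(f"CSV has more than {max_rows} rows; send smaller batches")
        for name in cont_names:
            row[name] = float(row[name])
        predictions.append(predict_fn(row))
    return predictions
```

Row-by-row prediction keeps the logic simple, but its cost grows linearly with the file size, which is why the batch size question matters.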

Hi @spacecadet

We are working on an open source project to help solve this exact problem. It's called BentoML (github.com/bentoml/bentoml): from a model in a Jupyter notebook to a production ML service in 5 minutes. You can play around with the quick start notebook on Google Colab.

I am working on fast.ai support at the moment. It should be out this week or next. Stay tuned.

Here is a quick example of how it will work in your scenario:

After you have trained your learner with fastai, define your service in the next cell:

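```python
%%writefile my_fastai_model_service.py
from bentoml import api, env, BentoService, artifacts
from bentoml.artifact import FastaiModelArtifact
from bentoml.handlers import DataframeHandler

@env(conda_env=['fastai'])
@artifacts([FastaiModelArtifact('learner')])
class MyFastAIService(BentoService):

    @api(DataframeHandler)
    def predict(self, df):
        # fastai's learn.predict() takes a single row, so predict row by row
        # and return each predicted class as a string
        return [str(self.artifacts.learner.predict(row)[0])
                for _, row in df.iterrows()]
```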

In the next cell, we can pack our learner artifact into the service and save the service as an archive on the file system:

```python
from my_fastai_model_service import MyFastAIService

service = MyFastAIService.pack(learner=learner)
saved_path = service.save('/tmp/bento_archive')
```

Now we can serve it as a REST API endpoint without writing a single line of web server code! We can start the server from inside the Jupyter notebook or from the command line:

```
# inside the notebook
!bentoml serve {saved_path}
```

or

```bash
bentoml serve PATH/TO/ARCHIVE
```

BentoML will automatically handle parsing an uploaded CSV file into a DataFrame for us.
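On the client side, a request to the running server could look like the sketch below, using only the standard library. The host, port (`5000`) and path (`/predict`, after the `predict` API function) are assumptions, as is the handler accepting a `text/csv` body; check the address `bentoml serve` prints on startup.

```python
import urllib.request


def build_predict_request(csv_text, url="http://localhost:5000/predict"):
    """Build a POST request carrying CSV rows to the (assumed) prediction endpoint."""
    return urllib.request.Request(
        url,
        data=csv_text.encode("utf-8"),
        headers={"Content-Type": "text/csv"},
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and return the response body as text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

For example, `send(build_predict_request(open("small_test.csv").read()))` posts a small CSV file; keeping the file small avoids long waits with a row-by-row predictor.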

I will ping you guys once we bring fast.ai model support online!

Cheers

Bo

I’m having a hard time just using the tabular module in fastai. Have you got any examples where fastai does better than a random forest (RF) or k-NN?

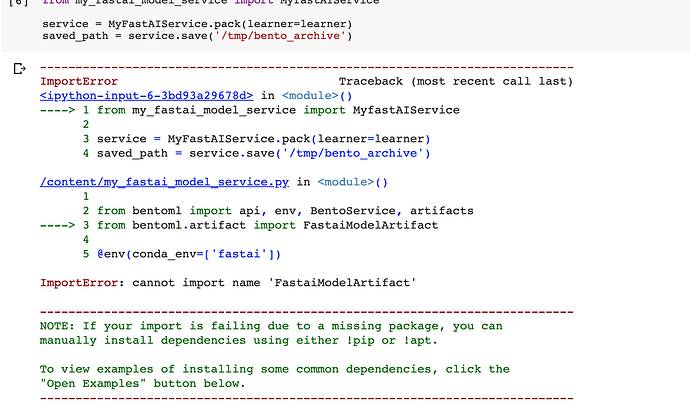

@yubozhao thanks a lot for the tip. I tried it out, but I kept getting stuck on "ImportError: cannot import name 'FastaiModelArtifact'". I tried going through the docs, but I still haven't been able to figure out the issue.

Does anyone have any ideas on this?

Oh, I'm guessing it's because fastai model support isn't online yet?

Yes, fastai model support is not online yet. You can follow the work on it in this PR (https://github.com/bentoml/BentoML/pull/198). It should be merged and published this week or next. We want to do more testing before sharing it with the community at large.

What you saw was a preview of it.

If you are at a very early stage, perhaps the following post I wrote might help.

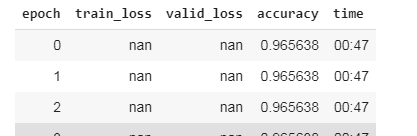

Hey, I’m doing something wrong and getting NaN for both train_loss and test_loss.

Thanks for sharing! I will have a go at this.

The optimization has diverged. You would need to change the hyperparameters; for example, your learning rate may be too large.
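Why a too-large learning rate ends in NaN can be seen on a toy problem rather than a fastai model: plain gradient descent on f(x) = x², whose gradient is 2x. This is an illustrative sketch only; each step multiplies x by (1 − 2·lr), so it converges for 0 < lr < 1 and blows up otherwise, until the values overflow to infinity and then become NaN.

```python
import math


def train_toy(lr, steps=2000, x0=1.0):
    """Gradient descent on the toy loss f(x) = x**2 (gradient 2x).

    Returns (steps_run, final_loss). Stops early if the loss becomes NaN,
    which is what a diverged run looks like in a training log.
    """
    x = x0
    for step in range(steps):
        x = x - lr * 2 * x  # same as x *= (1 - 2 * lr)
        loss = x * x        # multiplication overflows to inf instead of raising
        if math.isnan(loss):
            return step, loss
    return steps, x * x
```

`train_toy(0.1)` shrinks the loss towards zero, while `train_toy(1.5)` doubles |x| every step until it overflows and the loss turns into NaN. In fastai v1 the usual remedy is to run `learn.lr_find()`, inspect `learn.recorder.plot()`, and pass a smaller `max_lr` to `learn.fit_one_cycle()`.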

I’ll try it out and give an update. Thanks!

@spacecadet

We just released fastai support in BentoML 0.3. Try it out! The example notebooks are in this directory: https://github.com/bentoml/gallery/tree/master/fast-ai. We use both lesson 1 (pet classification) and lesson 4 (tabular CSV) as examples.

Let me know what you think!

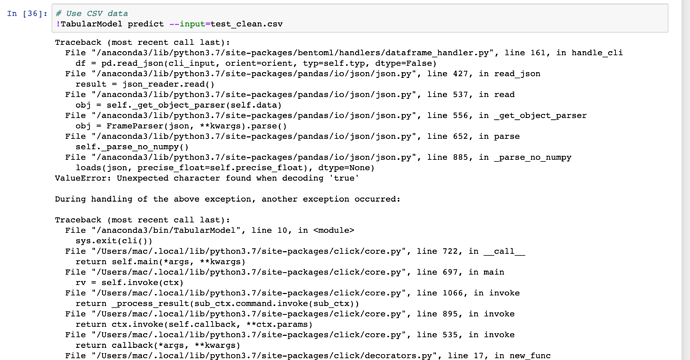

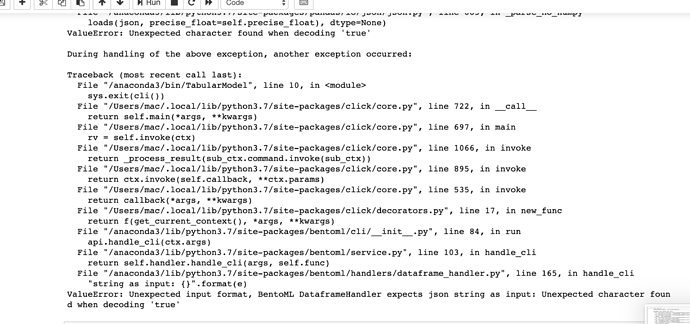

@yubozhao I tried the BentoML fastai tabular example and it worked fine.

I later wanted to try it with a different dataset (the Kaggle Rossmann data), but I kept getting errors while trying to use the BentoML archive as a CLI tool.

I have yet to figure out the issue, but once I do I will post an update.

Any ideas would be highly appreciated.

To get a clearer picture of the model, you can find it here.

Hi @spacecadet.

I cloned and tried out your notebook. You can find it here (https://github.com/yubozhao/RossmanSales/blob/master/rossman_sales_bentoml.ipynb)

I didn’t encounter the issue you posted, though I did use a smaller test dataset; the 19 MB one took too long for me.

```python
# this is what I used to generate the testing data csv
c = df.iloc[0:4]  # first four rows of the test DataFrame
print(c)
c.to_csv('./small_test.csv')
```
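If the full test file is too large to load comfortably, a small sample can also be cut straight from the CSV without reading all of it into memory. This stdlib-only sketch (`head_csv` is a hypothetical helper) keeps the header plus the first `n_rows` rows; pandas' `pd.read_csv(path, nrows=4)` is the DataFrame equivalent.

```python
import csv
import io
import itertools


def head_csv(lines, n_rows=4):
    """Return CSV text containing the header plus the first n_rows data rows.

    `lines` can be an open file or any iterable of CSV lines; rows are read
    lazily, so the rest of a large file is never loaded.
    """
    reader = csv.reader(lines)
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(next(reader))                       # header row
    writer.writerows(itertools.islice(reader, n_rows))  # first n_rows rows
    return out.getvalue()

# Example usage:
# with open("test.csv", newline="") as src, open("small_test.csv", "w", newline="") as dst:
#     dst.write(head_csv(src, n_rows=4))
```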

If you can, go through that and let me know whether you encounter any issues. I'd love to help you figure out what happened and how we can solve it.

Bo

Hi @yubozhao, sorry I couldn’t get back to you earlier. I tried it again and it worked fine. The first issue in the screenshots was due to a typo in the predict function: I hadn't referenced the model artifact correctly. The second issue was that prediction was taking forever on the full test set, but it was quick once I used a smaller test set.

Thanks for the help!

I will try out a couple more solutions and ping you guys with the results.