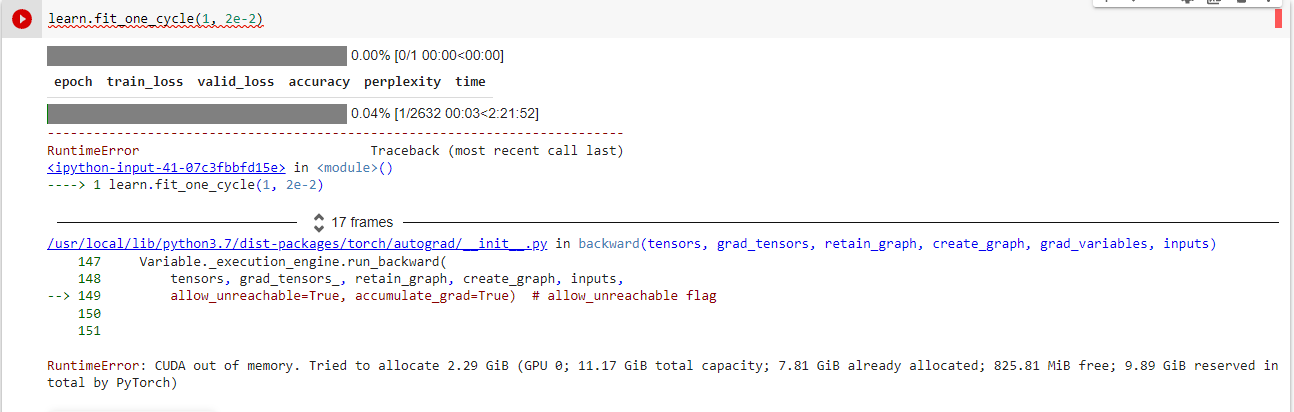

What is your batch size? If the batch size is large and the available GPU RAM is limited, a CUDA out of memory error may occur.

This happens with large models.

Try to reduce the batch size to 64 or 128.

Also, if you are using a Jupyter notebook, you might need to restart the kernel so that GPU memory still held by the earlier run is released.

How can I train the model faster? I changed to bs=32, but it is still slow.

Ideally, increasing the batch size makes training faster. If GPU RAM is the bottleneck, you will have to experiment to find the largest batch size you can use without hitting a CUDA out of memory error.
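
One way to run that experiment, as a rough sketch: start from a large batch size and halve it until a single training step fits. `find_largest_batch_size` and `try_step` are hypothetical names of my own, not part of fastai or PyTorch. `try_step(bs)` is assumed to be a function you write that runs one forward/backward pass at batch size `bs`. The sketch also assumes the out-of-memory failure surfaces as a `RuntimeError` whose message contains "out of memory", which is how PyTorch usually reports it.

```python
def find_largest_batch_size(try_step, start=512, minimum=1):
    """Halve the batch size until try_step(bs) runs without running out of memory.

    try_step is a hypothetical callable supplied by you; it should run one
    training step at the given batch size and raise on failure.
    """
    bs = start
    while bs >= minimum:
        try:
            try_step(bs)
            return bs  # this batch size fits
        except RuntimeError as err:
            # Only swallow out-of-memory failures; re-raise everything else.
            if "out of memory" not in str(err).lower():
                raise
            bs //= 2  # try half the batch size next
    raise RuntimeError(f"even batch size {minimum} does not fit in memory")
```

On a real GPU it may also help to call `torch.cuda.empty_cache()` between attempts, and the size you find is only a starting point, since memory use can vary between batches.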

The other thing is that while you are still experimenting with your model or data, it is better to work on a smaller subset of the data. Training is faster that way, so you can run more experiments.
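
A minimal sketch of picking such a subset, assuming your dataset can be indexed by position. `sample_indices`, the 10% default, and the fixed seed are my own illustrative choices, not anything from fastai.

```python
import random


def sample_indices(n_items, fraction=0.1, seed=42):
    """Return a sorted random sample of indices covering about `fraction` of the data."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, int(n_items * fraction))  # keep at least one item
    rng = random.Random(seed)  # fixed seed so every experiment sees the same subset
    return sorted(rng.sample(range(n_items), k))
```

With a PyTorch dataset, the resulting indices can be passed to `torch.utils.data.Subset(dataset, indices)`. Keeping the seed fixed makes runs comparable while you tune the model.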
