Hi @jeremy

Interesting issue here, and it should be easy to recreate. I was modifying the source a little, mainly because I have a dual-GPU machine and wanted to try using both GPUs. I understand this could be completely irrelevant for a lot of people, so it's probably not a high-priority issue.

Steps to recreate

-

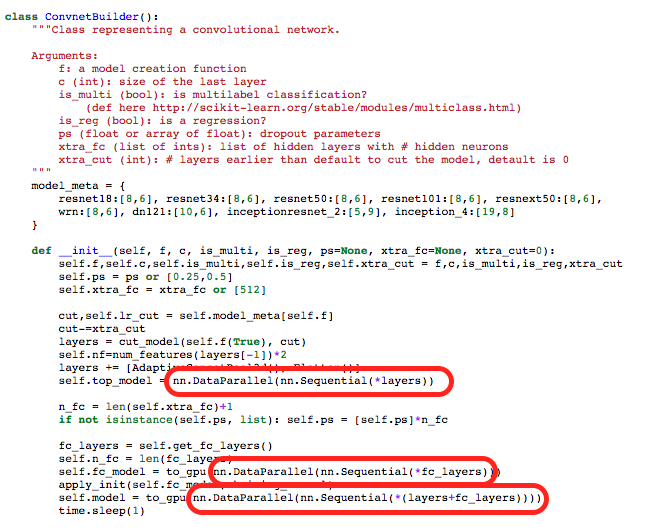

Modify ConvnetBuilder.py, so that you surround the instantiation of each “Sequential” model creation with nn.DataParallel(…)"

-

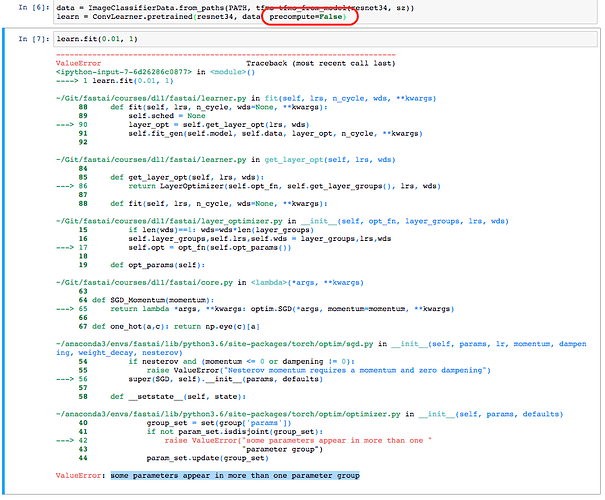

In lesson1.ipynb, attempt to create a learner with precompute=False.

-

Try running learn.fit(…)

-

A stack-exception will be thrown in the torch library.

Strangely enough, I don’t see this if precompute=True. Hence, I figured it might be an issue with the fastai library as well. I might also try contacting the PyTorch team directly.

My motivation for the code change above is to use multiple GPUs through PyTorch’s out-of-the-box nn.DataParallel module. In a simple experiment, I was able to follow the pattern above to run a model on multiple GPUs. Additionally, with precompute=True, the model still appears to run on both GPUs.
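For reference, here is a minimal sketch of the wrapping pattern described above, outside of fastai. The toy `head` model, the layer sizes, and the batch shape are all made up for illustration and are not taken from ConvnetBuilder.py. It should also run on a machine with one GPU or none, since nn.DataParallel falls back to calling the wrapped module directly in that case.

```python
import torch
import torch.nn as nn

# Toy stand-in for one of the Sequential models (layer sizes are arbitrary).
head = nn.Sequential(nn.Linear(8, 4), nn.ReLU(), nn.Linear(4, 2))

# DataParallel expects the parameters on the first GPU when GPUs are used.
if torch.cuda.is_available():
    head = head.cuda()

# Wrap the Sequential; each forward pass splits the batch across visible GPUs.
model = nn.DataParallel(head)

# Build a dummy batch on the same device as the parameters and run it through.
device = next(model.parameters()).device
x = torch.randn(16, 8, device=device)
out = model(x)
print(out.shape, torch.cuda.device_count())

# The original Sequential is still reachable through the wrapper.
inner = model.module
print(type(inner).__name__)
```

One thing to keep in mind with this pattern: the wrapper is not itself a Sequential, so anything that needs the original layers has to go through `model.module`.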

Let me know. I’ve attached screenshots: