I am running into an issue with inconsistent results after calling `net.eval()` in PyTorch. I've trained a model that has batchnorm and dropout layers, and when I evaluate it on the validation set I get a different result every time. Has anyone else faced a similar problem? I also shared the problem here: https://discuss.pytorch.org/t/model-eval-gives-incorrect-loss-for-model-with-batchnorm-layers/7561/3

I hope you can help me understand what is going on.

```python
import torch
import torch.nn as nn
from sklearn.metrics import confusion_matrix

# assumed: the criterion is cross-entropy, as the comment in the loop says
criterion = nn.CrossEntropyLoss()


def evaluate(net, valid_dl):
    net.eval()  # batchnorm uses running stats, nn.Dropout is disabled
    val_true = []
    val_pred = []

    with torch.no_grad():  # no gradients are needed for validation
        for inputs, labels in valid_dl:
            outputs = net(inputs.float())
            # collect labels and raw outputs (logits) batch by batch
            val_true.append(labels)
            val_pred.append(outputs)

    # concatenate the per-batch results
    val_pred_concat = torch.cat(val_pred)
    val_true_concat = torch.cat(val_true)

    # validation cross-entropy loss (.item() replaces the old .data[0])
    val_loss = criterion(val_pred_concat, val_true_concat).item()

    # validation accuracy as a float in [0, 1]
    _, class_pred = torch.max(val_pred_concat, 1)
    val_acc = (class_pred == val_true_concat).float().mean().item()

    # confusion matrix (tensors must be on the CPU for .numpy())
    cmat = confusion_matrix(val_true_concat.numpy(), class_pred.numpy())

    return val_loss, val_acc, cmat
```
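A quick way to narrow down this kind of problem (a debugging suggestion, not something from the original thread) is to feed the same batch through the network twice in eval mode and compare the outputs: if they differ, some layer is still behaving stochastically. This is a minimal sketch; `outputs_are_deterministic` and the toy model are hypothetical names for illustration, and the helper switches the model to eval mode as a side effect.

```python
import torch
import torch.nn as nn


def outputs_are_deterministic(net: nn.Module, inputs: torch.Tensor) -> bool:
    """Hypothetical helper: run the same batch twice in eval mode and compare."""
    net.eval()  # batchnorm uses running stats, nn.Dropout becomes a no-op
    with torch.no_grad():
        first = net(inputs)
        second = net(inputs)
    # any difference means some layer ignores eval mode
    return torch.equal(first, second)


if __name__ == "__main__":
    torch.manual_seed(0)
    # toy model with batchnorm and dropout, standing in for the real network
    net = nn.Sequential(
        nn.Linear(8, 16),
        nn.BatchNorm1d(16),
        nn.ReLU(),
        nn.Dropout(p=0.5),
        nn.Linear(16, 3),
    )
    print(outputs_are_deterministic(net, torch.randn(4, 8)))
```

If the check fails on the real model, the layers to inspect first are the ones that call functional ops with hard-coded flags, since those do not follow `net.eval()`.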

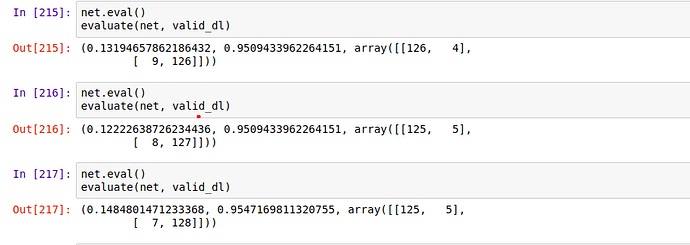

Without batchnorm and dropout:

Edit

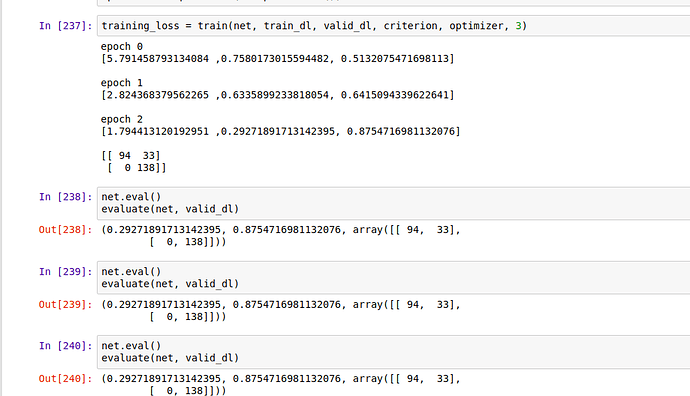

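OK, it's solved; it was a silly mistake. For others who might do the same thing: my dropout `training` argument was hard-coded to `True`. `F.dropout` is a plain function, so it does not know about the mode set by `net.eval()`; it only follows its `training` argument, which meant dropout stayed active during validation.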

The mistake:

```python
x = F.dropout(x, p=0.5, training=True)
```

Correct way:

```python
x = F.dropout(x, p=0.5, training=self.training)
```
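To make the fix concrete, here is a minimal sketch of a model that uses the corrected call. The `Net` class and its layer sizes are hypothetical stand-ins, not the poster's model. `net.eval()` is equivalent to `net.train(False)`, which sets `.training = False` on the module and all its submodules, so passing `self.training` lets the functional dropout follow the same mode as batchnorm.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    """Hypothetical stand-in for the poster's model (batchnorm + dropout)."""

    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(10, 32)
        self.bn1 = nn.BatchNorm1d(32)
        self.fc2 = nn.Linear(32, 2)

    def forward(self, x):
        x = F.relu(self.bn1(self.fc1(x)))
        # self.training is toggled by net.train() / net.eval(),
        # so dropout is only active during training
        x = F.dropout(x, p=0.5, training=self.training)
        return self.fc2(x)


torch.manual_seed(0)
net = Net()
x = torch.randn(8, 10)

net.eval()  # same as net.train(False): sets .training = False recursively
with torch.no_grad():
    print(torch.equal(net(x), net(x)))  # repeated eval passes match

net.train()  # dropout and batch statistics are used again
with torch.no_grad():
    print(torch.equal(net(x), net(x)))  # almost surely differ (random masks)
```

An alternative that avoids the flag entirely is to create an `nn.Dropout(p=0.5)` submodule in `__init__` and call it in `forward`; as a registered submodule it picks up `train()`/`eval()` automatically.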

Thanks!