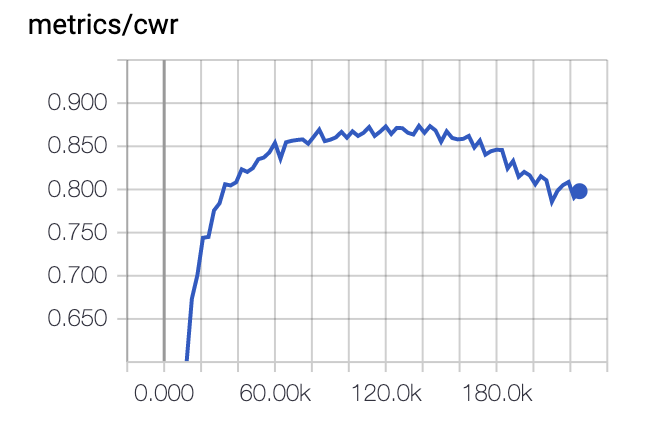

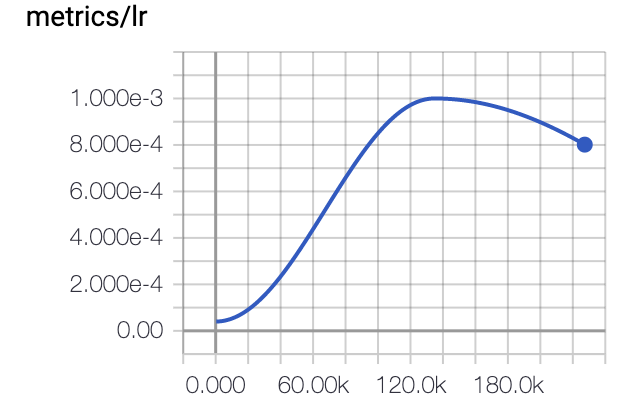

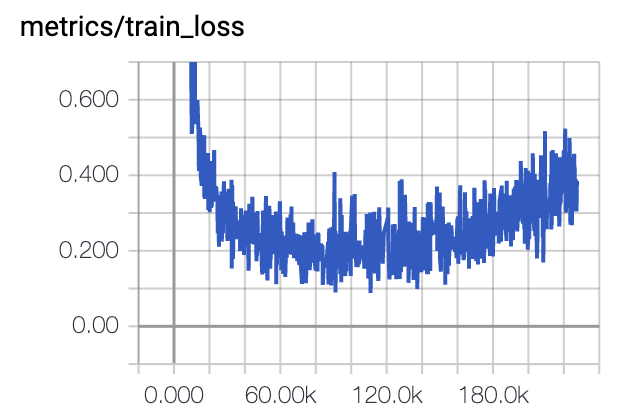

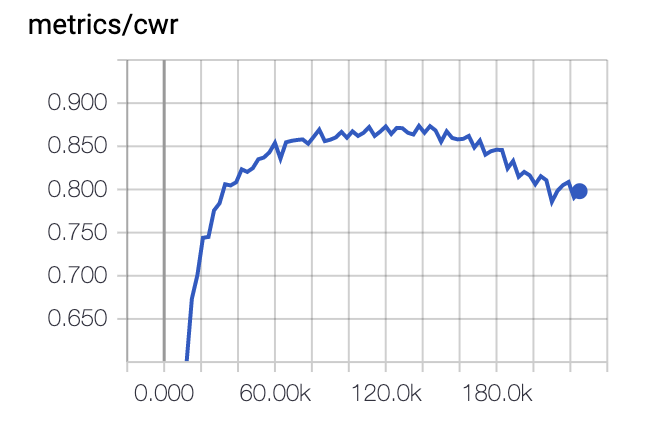

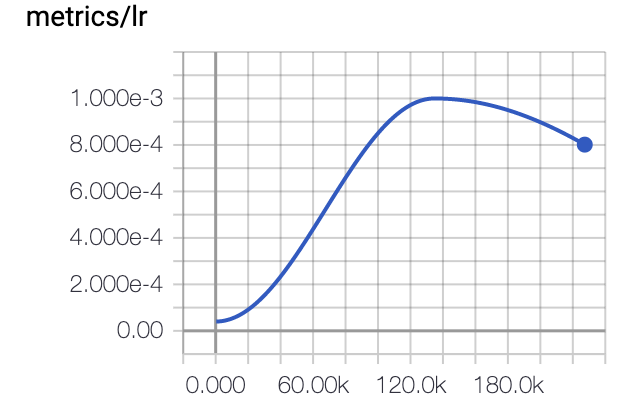

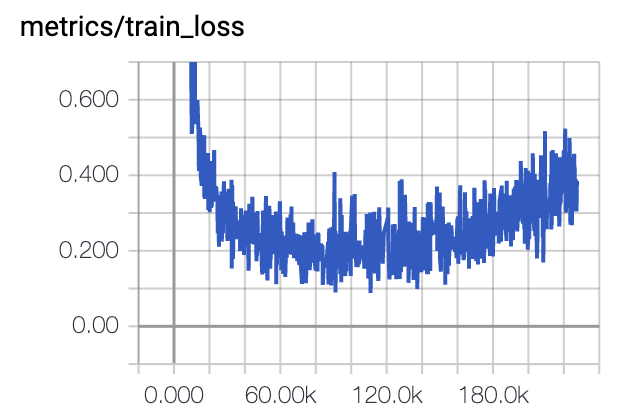

I use the one-cycle policy to train my model. It doesn't seem to be overfitting, since the training loss also decreases. Why does reducing the learning rate hurt performance?
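For context, here is a minimal sketch of what a one-cycle learning-rate schedule computes. The post doesn't say which implementation was used. This sketch assumes the cosine-annealed variant with PyTorch `OneCycleLR`-style defaults (`pct_start=0.3`, `div_factor=25`, `final_div_factor=1e4`). The helper `one_cycle_lr` is a hypothetical name for illustration, not a library function. It shows that "the learning rate" in one-cycle training usually means `max_lr`, and that every step of the schedule, from the warm-up start through the peak to the final value, is proportional to it.

```python
import math


def cosine_anneal(start: float, end: float, pct: float) -> float:
    """Cosine interpolation from `start` (pct=0) to `end` (pct=1)."""
    return end + (start - end) / 2.0 * (math.cos(math.pi * pct) + 1.0)


def one_cycle_lr(step: int, total_steps: int, max_lr: float,
                 pct_start: float = 0.3, div_factor: float = 25.0,
                 final_div_factor: float = 1e4) -> float:
    """Hypothetical helper: learning rate at `step` under a two-phase,
    cosine-annealed one-cycle schedule (assumed PyTorch-like defaults)."""
    initial_lr = max_lr / div_factor          # LR at step 0
    min_lr = initial_lr / final_div_factor    # LR at the last step
    warmup_end = pct_start * total_steps - 1  # step where the peak is reached
    last_step = total_steps - 1
    if warmup_end <= 0:
        raise ValueError("pct_start * total_steps must be greater than 1")
    if step <= warmup_end:
        # Phase 1: warm up from initial_lr to max_lr.
        return cosine_anneal(initial_lr, max_lr, step / warmup_end)
    # Phase 2: anneal from max_lr down to min_lr.
    pct = (step - warmup_end) / (last_step - warmup_end)
    return cosine_anneal(max_lr, min_lr, pct)


if __name__ == "__main__":
    total = 100
    for max_lr in (1e-2, 1e-3):
        peak = max(one_cycle_lr(s, total, max_lr) for s in range(total))
        print(f"max_lr={max_lr:g}: start={one_cycle_lr(0, total, max_lr):.2e}, "
              f"peak={peak:.2e}, end={one_cycle_lr(total - 1, total, max_lr):.2e}")
```

Under these assumptions, lowering `max_lr` by 10x scales the entire curve down by 10x, including the high-learning-rate middle of the cycle, rather than only shortening one phase.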