Hi all,

Kaggle has some competitions that use MAPK (mean average precision at k) as the evaluation metric (whale identification, doodle recognition, etc.), but the fastai metrics currently only support top-k accuracy. I am wondering whether a PR to add MAPK would be useful?

Following this post on Kaggle, I implemented MAPK and tested it with fastai on the CIFAR-10 dataset.

The idea is simple:

- Calculate the MAPK value of a single prediction. If preds has shape (m, classes) for a batch of m samples, then each single prediction is one row with classes entries, and its target is a single class label.
- Take the average of the single-prediction MAPK values over the batch. The fastai library takes care of the whole validation set.
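To make the two steps concrete, here is a small torch-free sketch that can be checked by hand. The names `single_map_py` and `mapk_py` are made up for this illustration, and the inputs are assumed to be class indices already sorted by descending score, one true label per sample.

```python
def single_map_py(ranked, label, k=5):
    """Reciprocal rank of `label` within the top k of `ranked`, else 0.0."""
    top_k = ranked[:k]
    if label not in top_k:
        return 0.0
    return 1.0 / (top_k.index(label) + 1)  # ranks are 1-based


def mapk_py(batch_ranked, labels, k=5):
    """Mean of the per-sample scores over one batch."""
    scores = [single_map_py(r, l, k) for r, l in zip(batch_ranked, labels)]
    return sum(scores) / len(scores)


# Three samples, each a list of class indices sorted by descending score.
batch_ranked = [[2, 0, 1, 3], [1, 3, 0, 2], [0, 1, 2, 3]]
labels = [2, 3, 3]  # true label sits at rank 1, rank 2, rank 4

print(mapk_py(batch_ranked, labels, k=3))  # (1 + 1/2 + 0) / 3 = 0.5
```

The third sample scores 0 because its true label is ranked fourth, outside the top 3.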

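Here is the implementation:

    import numpy as np
    import torch

    def single_map(pred, label, k=5):
        # pred: class indices sorted by descending score; label: true class index
        hits = (pred[:k] == label).nonzero()
        # No hit in the top k: check explicitly, because the exception raised by
        # .item() on an empty tensor differs between PyTorch versions
        if hits.numel() == 0:
            return 0.0
        # 1 / (1-based rank of the true label), scalar division
        return 1 / (hits[0].item() + 1)

    def mapk(preds, targs, k=5):
        # preds: (batch_size, classes) scores; targs: (batch_size,) labels
        batch_pred = preds.sort(descending=True)[1]  # batch_size * classes
        # Return a tensor instead of a NumPy scalar
        return torch.tensor(np.mean([single_map(p, l, k) for l, p in zip(targs, batch_pred)]))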

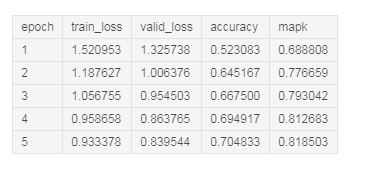

Here are some test results:

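By default, it calculates MAP5.

You can also use partial(mapk, k=3) (or any other k) to change it to the desired MAPk metric. Pass k by keyword; a positional partial(mapk, 3) would bind 3 to preds instead.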
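As a sketch of how `functools.partial` fixes k, the example below uses a torch-free stand-in called `mapk_py` (a hypothetical name, not part of fastai) that takes pre-sorted class indices. The same keyword-binding pattern would apply to the `mapk` function from this post.

```python
from functools import partial


def mapk_py(batch_ranked, labels, k=5):
    """Torch-free stand-in for mapk: mean reciprocal rank within the top k."""
    total = 0.0
    for ranked, label in zip(batch_ranked, labels):
        top_k = ranked[:k]
        if label in top_k:
            total += 1.0 / (top_k.index(label) + 1)
    return total / len(labels)


# Bind k by keyword so the two positional slots stay free for the data.
map3 = partial(mapk_py, k=3)
map1 = partial(mapk_py, k=1)

batch_ranked = [[2, 0, 1, 3], [1, 3, 0, 2]]
labels = [2, 3]

print(map3(batch_ranked, labels))  # (1 + 1/2) / 2 = 0.75
print(map1(batch_ranked, labels))  # (1 + 0) / 2 = 0.5
```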

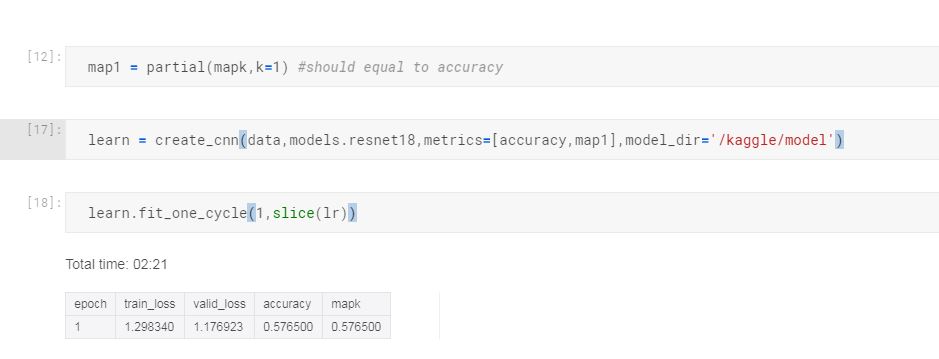

Here is an example of MAP1. It should match accuracy, because with k=1 a sample scores 1 only when the top-ranked class is the true label and 0 otherwise.
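The claim that MAP1 equals accuracy can also be checked on toy data. This is only a sketch with hypothetical helper names (`map_at_k`, `top1_accuracy`), assuming single-label targets and pre-sorted class indices; it is not the fastai accuracy metric itself.

```python
def map_at_k(batch_ranked, labels, k):
    """Mean reciprocal rank of the true label within the top k (0 if absent)."""
    scores = []
    for ranked, label in zip(batch_ranked, labels):
        top_k = ranked[:k]
        scores.append(1.0 / (top_k.index(label) + 1) if label in top_k else 0.0)
    return sum(scores) / len(scores)


def top1_accuracy(batch_ranked, labels):
    """Fraction of samples whose top-ranked class is the true label."""
    correct = sum(1 for ranked, label in zip(batch_ranked, labels) if ranked[0] == label)
    return correct / len(labels)


batch_ranked = [[2, 0, 1], [1, 2, 0], [0, 1, 2], [1, 0, 2]]
labels = [2, 2, 0, 0]

print(map_at_k(batch_ranked, labels, k=1))   # 0.5
print(top1_accuracy(batch_ranked, labels))   # 0.5
print(map_at_k(batch_ranked, labels, k=3))   # (1 + 1/2 + 1 + 1/2) / 4 = 0.75
```

For k greater than 1 the metric gives partial credit to near misses, so it is at least as large as accuracy.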