I was digging into the Adagrad paper to figure out how it works, and I got stuck on this part:

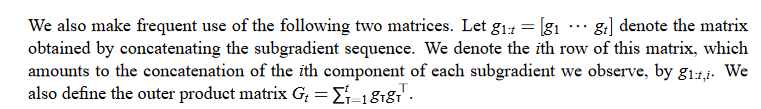

This is the most important part of the paper as far as I can tell, so it was important to get past my confusion. So let's just think about what g_1 is. It is the first gradient produced by our loss. What the second line is saying is just that, to make this vector, you concatenate the gradients of all of your model's parameters into one long vector.
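To make the "concatenate everything" idea concrete, here is a minimal sketch in plain Python. The layer names and gradient values are made up for illustration, and `flatten` is a hypothetical helper, not something from the paper.

```python
# Hypothetical per-parameter gradients for a tiny model (values are made up).
grads_by_layer = {
    "layer1.weight": [[0.1, -0.2], [0.3, 0.4]],
    "layer1.bias": [0.05, -0.05],
}


def flatten(values):
    """Recursively flatten nested lists into one flat list of numbers."""
    flat = []
    for v in values:
        if isinstance(v, list):
            flat.extend(flatten(v))
        else:
            flat.append(v)
    return flat


# g_1 is every gradient entry laid end to end in one vector.
g_1 = []
for name, grad in grads_by_layer.items():
    g_1.extend(flatten(grad))

print(len(g_1), g_1)
```

So a model with a 2x2 weight and a 2-element bias gives a g_1 with 6 entries, one per parameter.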

Next, let's consider what G_t = \sum_{\tau=1}^{t} g_\tau g_\tau^\intercal is saying. Basically it says to take each gradient vector from the first time step up to the current one, form its outer product with itself (g_\tau g_\tau^\intercal, which is a d x d matrix when there are d parameters), and add all of those matrices up. The full matrix later gets ruled out as computationally infeasible, and only its diagonal is kept at each time step. The diagonal of g_1 g_1^\intercal is just every entry of g_1 squared, aka g_1^2 taken elementwise. So in practice, G_t ends up being a vector holding the running sum of the squared gradients up to that time step: G_t = sum([g_1^2, g_2^2, ..., g_t^2]).
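Here is a small sketch that checks this numerically: it accumulates the full outer-product matrix and the elementwise squares side by side and compares the diagonal. The gradient values are made up, and `outer` is a hypothetical helper written in plain Python so the example has no dependencies.

```python
def outer(u, v):
    """Outer product u v^T as a list of rows."""
    return [[ui * vj for vj in v] for ui in u]


# Made-up gradients g_1, g_2, g_3 for a model with 2 parameters.
gradients = [[1.0, 2.0], [3.0, -1.0], [0.5, 0.0]]
dim = 2

G_full = [[0.0] * dim for _ in range(dim)]  # sum of g g^T (d x d matrix)
G_diag = [0.0] * dim                        # sum of g^2 elementwise (d vector)

for g in gradients:
    op = outer(g, g)
    for i in range(dim):
        for j in range(dim):
            G_full[i][j] += op[i][j]
        G_diag[i] += g[i] ** 2

# The diagonal of the full matrix is exactly the running sum of squares.
diag_of_full = [G_full[i][i] for i in range(dim)]
print(diag_of_full, G_diag)
```

The full version stores d^2 numbers while the diagonal version stores only d, which is where the savings come from when d is the number of parameters in a model.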

The full formula for Adagrad is

g_{t, i} = \nabla_\theta J( \theta_{t, i} )

\theta_{t+1} = \theta_{t} - \dfrac{\eta}{\sqrt{G_{t} + \epsilon}} \odot g_{t}

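So \eta is constant, but every entry of G_t is a sum of squares, so it can only increase or stay the same over time. That means the effective step size \eta / \sqrt{G_t + \epsilon} for each parameter can only shrink or stay the same, and it shrinks fastest for the parameters that have seen the largest gradients. The \epsilon is a small constant that keeps us from dividing by zero. g_t is just the vector of gradients at the current time step.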
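Putting the update rule into code, this is a minimal sketch of the diagonal version in plain Python. The toy loss J(\theta) = \sum_i \theta_i^2, the starting point, and the values of `eta` and `eps` are all assumptions chosen for illustration, and `adagrad_step` is a hypothetical name.

```python
import math


def adagrad_step(theta, grad, G, eta=0.1, eps=1e-8):
    """One diagonal Adagrad update; returns the new theta and the new G."""
    # Accumulate squared gradients per parameter.
    new_G = [G_i + g_i ** 2 for G_i, g_i in zip(G, grad)]
    # Scale each parameter's step by eta / sqrt(G + eps).
    new_theta = [
        th - eta / math.sqrt(G_i + eps) * g_i
        for th, G_i, g_i in zip(theta, new_G, grad)
    ]
    return new_theta, new_G


# Toy loss J(theta) = sum(theta_i^2), so the gradient is 2 * theta.
theta = [1.0, -3.0]
G = [0.0, 0.0]
history = []  # G after each step, to watch it grow

for t in range(5):
    grad = [2.0 * th for th in theta]
    theta, G = adagrad_step(theta, grad, G)
    history.append(list(G))

print(theta)
print(history)
```

Printing `history` shows each entry of G only ever going up, which is the "always increasing or staying the same" behavior described above.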