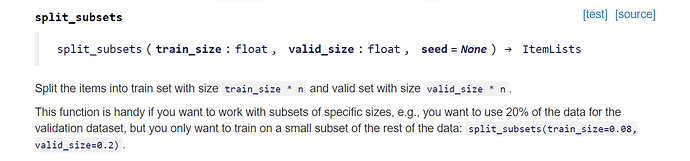

Does anybody know of an equivalent of this in fastai2? It was fantastic for experimenting with a portion of the total dataset.

It looks like there isn’t one, but give me a moment.

If you want to try to figure out a way to go about implementing it, take a look at RandomSplitter:

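```python
import torch
from fastcore.foundation import L  # fastai2's list class

def RandomSplitter(valid_pct=0.2, seed=None, **kwargs):
    "Create function that splits `items` between train/val with `valid_pct` randomly."
    def _inner(o, **kwargs):
        # Seed torch's global RNG so the split is reproducible
        if seed is not None: torch.manual_seed(seed)
        # Random permutation of all item indices
        rand_idx = L(int(i) for i in torch.randperm(len(o)))
        cut = int(valid_pct * len(o))
        # First `cut` shuffled indices go to validation, the rest to training
        return rand_idx[cut:],rand_idx[:cut]
    return _inner
```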

I might play with that this weekend if no one else does; I’ve been wanting something like that. My only thought is that if there isn’t any shuffling of items somewhere, or some kind of check to make sure you are not missing labels, you might end up with labels missing from your train/valid sets.
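The label concern can be checked directly once a split exists. A minimal sketch, assuming `labels` is a plain list with one label per item; `missing_labels` is a hypothetical helper, not part of fastai2:

```python
def missing_labels(labels, train_idxs, valid_idxs):
    "Return (labels absent from the train split, labels absent from the valid split)."
    all_labels = set(labels)
    train_labels = {labels[i] for i in train_idxs}
    valid_labels = {labels[i] for i in valid_idxs}
    return all_labels - train_labels, all_labels - valid_labels

labels = ['cat', 'dog', 'cat', 'bird']
print(missing_labels(labels, train_idxs=[0, 1], valid_idxs=[2, 3]))  # ({'bird'}, {'dog'})
```

Two empty sets mean every class shows up in both splits.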

@foobar8675 I was imagining something similar: once the items are split into train/valid, you sample from those lists. Alternatively, you could write a wrapper around any splitter so that any split function can be used, not just RandomSplitter.
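One way to sketch the wrapper idea: take any splitter that returns `(train_idxs, valid_idxs)` and keep a random fraction of each split. `partial_splitter` is a hypothetical name, not a fastai2 function, and `random_splitter` is a plain-Python stand-in for RandomSplitter so the example runs without torch. A real RandomSplitter should plug in the same way, since it also returns a pair of index lists.

```python
import random

def random_splitter(valid_pct=0.2, seed=None):
    "Plain-Python stand-in for RandomSplitter: returns (train_idxs, valid_idxs)."
    def _inner(items):
        rng = random.Random(seed)  # local RNG, so the global random state is untouched
        idxs = list(range(len(items)))
        rng.shuffle(idxs)
        cut = int(valid_pct * len(items))
        return idxs[cut:], idxs[:cut]
    return _inner

def partial_splitter(splitter, pct=0.1, seed=None):
    "Hypothetical wrapper: run `splitter`, then keep a random `pct` of each resulting split."
    def _inner(items):
        rng = random.Random(seed)
        subsets = []
        for split in splitter(items):
            split = list(split)
            # keep at least one index from any non-empty split
            n = max(1, int(pct * len(split))) if split else 0
            subsets.append(rng.sample(split, n))
        return tuple(subsets)
    return _inner

# Usage: 10% of an 80/20 split of 1,000 items
items = list(range(1000))
train_idxs, valid_idxs = partial_splitter(random_splitter(0.2, seed=42), pct=0.1, seed=0)(items)
print(len(train_idxs), len(valid_idxs))  # 80 20
```

Sampling after the split keeps the sampled validation indices disjoint from the sampled training indices, because each is a subset of one side of the original split.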

Hi muellerzr, hope all is well!

I built an image classifier model on Colab about five minutes ago, using the starter code for render.com as my baseline.

When I try to deploy the app locally, I get the following error:

```
    raise RuntimeError('Attempting to deserialize object on a CUDA '
RuntimeError: Attempting to deserialize object on a CUDA device but torch.cuda.is_available() is False. If you are running on a CPU-only machine, please use torch.load with map_location=torch.device('cpu') to map your storages to the CPU.

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "app/server.py", line 52, in <module>
    learn = loop.run_until_complete(asyncio.gather(*tasks))[0]
  File "/opt/anaconda3/lib/python3.7/asyncio/base_events.py", line 579, in run_until_complete
    return future.result()
  File "app/server.py", line 45, in setup_learner
    raise RuntimeError(message)
RuntimeError:

This model was trained with an old version of fastai and will not work in a CPU environment.

Please update the fastai library in your training environment and export your model again.
```

I thought I saw a link where you had created a starter repo for fastai2 but haven’t been able to find it again.

My local machine does not have a GPU, but I have at least 70 different classifiers that run without problems on it using fastai1-v3.

What do I need to change or configure to resolve this error?

Many thanks for your help mrfabulous1

This means the model is being loaded onto CUDA even though you don’t have a GPU. How are you loading the model? Via load_learner? If so, try what the error message suggests and load your model like this instead:

```python
import torch

# map_location remaps any CUDA tensors in the pickle onto the CPU
learn = torch.load('mymodel.pkl', map_location=torch.device('cpu'))
```

Hi muellerzr

```python
learn = torch.load('mymodel.pkl', map_location=torch.device('cpu'))
```

That’s fabulous! I tried something similar, but I left ‘with’ in there.

Thanks for your help mrfabulous1

I’m doing something very similar right now, which is how I caught it; I made the same sort of mistake.

Thanks for doing this. I’m mostly interested in NLP and tabular data these days, but I’m starting to watch the videos and catch up now, as all my work so far has been with version 1. Eagerly awaiting Blocks 2 and 3!

Hi @muellerzr,

Would I be able to join the group just for the tabular data lectures without going through the previous lectures? Please let me know.

I’d recommend going through at least the previous notebooks so you get used to the new API; up to lesson 3 and you should be alright. That said, techniques that apply to tabular and NLP as well (like cross-validation) are sprinkled throughout the lessons, so it’s up to you. Weeks 5 through the end of the vision block matter less, as those are very vision-specific.

I found that using the Ranger optimizer and the Mish activation function makes EfficientNet training much easier! Here’s my repo with fastai v2 examples: https://github.com/morganmcg1/stanford-cars
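For reference, Mish is usually defined as x · tanh(softplus(x)), where softplus(x) = ln(1 + eˣ). A minimal scalar sketch in plain Python, for illustration only; the linked repo works with PyTorch tensors and this is not its implementation:

```python
import math

def softplus(x: float) -> float:
    "Numerically stable ln(1 + e^x)."
    # For positive x, rewrite as x + ln(1 + e^-x) so exp() cannot overflow
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))

def mish(x: float) -> float:
    "Mish activation: x * tanh(softplus(x))."
    return x * math.tanh(softplus(x))

print(round(mish(1.0), 4))  # 0.8651
```

Unlike ReLU, Mish is smooth and lets small negative values through, approaching 0 for large negative inputs and the identity for large positive ones.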

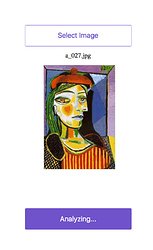

I am trying to deploy a classifier based on fastai2 using these resources: 02_Deployment.ipynb and the teddy bear classifier.

The model works fine on Colab, and I have a fastai-v3 version running successfully. However, when I run the fastai2 version of the code locally, it gets stuck on “Analyzing”.

I receive the following error in the console (sorry about the length of the error but I wanted to give as much information as possible).

```
(venv) (base) Mrs-MacBook-Pro:fastai-v3-master fabulous$ python app/server.py serve
INFO: Started server process [97187]
INFO: Waiting for application startup.
INFO: Uvicorn running on http://0.0.0.0:5000 (Press CTRL+C to quit)
INFO: ('127.0.0.1', 57587) - "GET / HTTP/1.1" 200
INFO: ('127.0.0.1', 57587) - "GET /static/style.css HTTP/1.1" 200
INFO: ('127.0.0.1', 57588) - "GET /static/client.js HTTP/1.1" 200
INFO: ('127.0.0.1', 57589) - "GET /favicon.ico HTTP/1.1" 404
INFO: ('127.0.0.1', 57635) - "POST /analyze HTTP/1.1" 500
ERROR: Exception in ASGI application
Traceback (most recent call last):
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/uvicorn/protocols/http/httptools_impl.py", line 368, in run_asgi
    result = await app(self.scope, self.receive, self.send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/applications.py", line 133, in __call__
    await self.error_middleware(scope, receive, send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/middleware/errors.py", line 122, in __call__
    raise exc from None
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/middleware/errors.py", line 100, in __call__
    await self.app(scope, receive, _send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/middleware/cors.py", line 84, in __call__
    await self.simple_response(scope, receive, send, request_headers=headers)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/middleware/cors.py", line 140, in simple_response
    await self.app(scope, receive, send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/exceptions.py", line 73, in __call__
    raise exc from None
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/exceptions.py", line 62, in __call__
    await self.app(scope, receive, sender)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/routing.py", line 585, in __call__
    await route(scope, receive, send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/routing.py", line 207, in __call__
    await self.app(scope, receive, send)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/starlette/routing.py", line 40, in app
    response = await func(request)
  File "app/server.py", line 158, in analyze
    pred = learn.predict(BytesIO(img_bytes))[0]
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/fastai2/learner.py", line 325, in predict
    dl = self.dls.test_dl([item], rm_type_tfms=rm_type_tfms)
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/fastai2/data/core.py", line 315, in test_dl
    test_ds = test_set(self.valid_ds, test_items, rm_tfms=rm_type_tfms) if isinstance(self.valid_ds, Datasets) else test_items
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/fastai2/data/core.py", line 305, in test_set
    if rm_tfms is None: rm_tfms = [tl.infer_idx(test_items[0]) for tl in test_tls]
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/fastai2/data/core.py", line 305, in <listcomp>
    if rm_tfms is None: rm_tfms = [tl.infer_idx(test_items[0]) for tl in test_tls]
  File "/Users/fabulous/fastai2_20200114/artists_classifier/venv/lib/python3.7/site-packages/fastai2/data/core.py", line 222, in infer_idx
    assert idx < len(self.types), f"Expected an input of type in \n{pretty_types}\n but got {type(x)}"
AssertionError: Expected an input of type in
  - <class 'pathlib.PosixPath'>
  - <class 'pathlib.Path'>
  - <class 'str'>
  - <class 'torch.Tensor'>
  - <class 'numpy.ndarray'>
  - <class 'bytes'>
  - <class 'fastai2.vision.core.PILImage'>
 but got <class '_io.BytesIO'>
```

The snippet of code that I believe is generating the error is in the server.py file:

```python
# `app`, `learn`, BytesIO and JSONResponse are defined/imported earlier in server.py
@app.route('/analyze', methods=['POST'])
async def analyze(request):
    img_data = await request.form()
    img_bytes = await (img_data['file'].read())
    pred = learn.predict(BytesIO(img_bytes))[0]
    return JSONResponse({
        'results': str(pred)
    })
```

Question: has anyone got any suggestions on how I might track down the cause of this error?

I have had a look here in fastai2.vision.core, but I’m not sure whether this is a cause or a symptom.

Cheers mrfabulous1

@mrfabulous1 predict should be able to handle BytesIO fine (lesson 2 of the course even shows it). @sgugger, is the deployment example wrong, and do we need to convert the BytesIO into something else?

It all depends on whether you can feed that to PILImage.create successfully. If you can, then that type needs to be added to the type annotation. It’s possible that it works; it’s just that predict has a defensive mechanism to make sure it gets data of the right type. If it hasn’t seen that type during training (here it has seen Paths, not io objects), it relies on the type annotations of the transforms to know whether the type is safe to accept.
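To make that mechanism concrete, here is a simplified plain-Python stand-in for the defensive check. It is not fastai2’s `infer_idx` (which collects its types from the pipeline and raises an AssertionError); `ACCEPTED_TYPES`, `check_input_type`, and `to_supported_input` are hypothetical names. Because `bytes` appears in the accepted list in the error message, unwrapping the BytesIO with `getvalue()` is enough to pass the check.

```python
from io import BytesIO
from pathlib import Path

# Hypothetical subset of the types listed in the AssertionError above
ACCEPTED_TYPES = (Path, str, bytes)

def check_input_type(x, accepted=ACCEPTED_TYPES):
    "Return the index of the first accepted type that `x` is an instance of."
    for i, t in enumerate(accepted):
        if isinstance(x, t):
            return i
    raise TypeError(f"Expected an input of type in {accepted} but got {type(x)}")

def to_supported_input(x):
    "Unwrap an in-memory stream into raw bytes; pass anything else through unchanged."
    return x.getvalue() if isinstance(x, BytesIO) else x

print(check_input_type(to_supported_input(BytesIO(b'\x89PNG'))))  # 2 (matched bytes)
```

In server.py the analogous change would presumably be `learn.predict(img_bytes)` instead of `learn.predict(BytesIO(img_bytes))`; whether prediction then succeeds still depends on `PILImage.create` accepting raw bytes.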

I’ll take a look today and put in a PR if it does work.

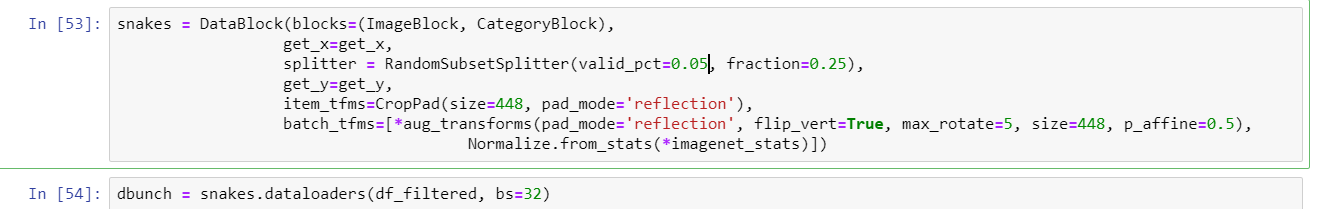

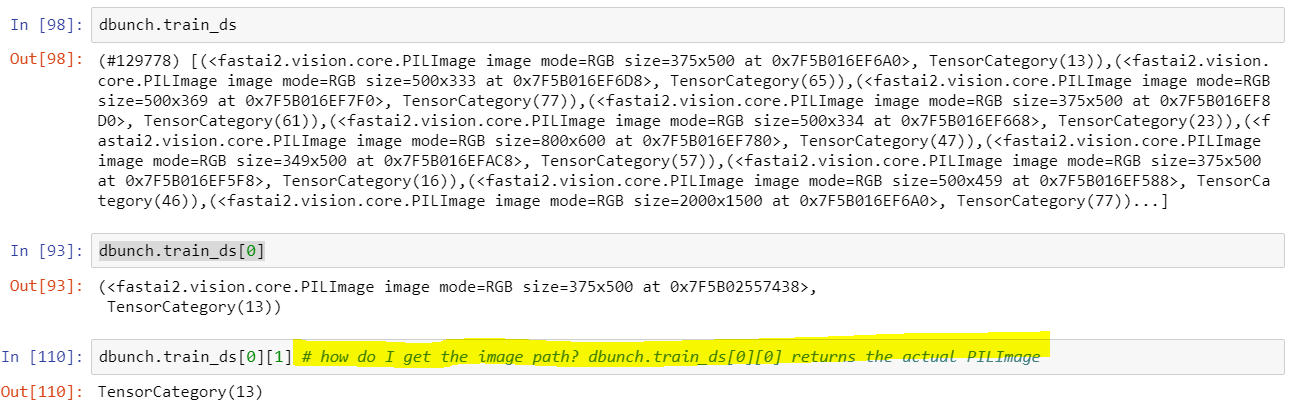

How do you get the image path from this dbunch? I can easily get the category, but x returns the image itself. I just cannot figure it out.

Once the dbunch has been created…

@mgloria It may be a while, as I won’t get to look at things until tonight. Out of curiosity, can you do .path or .name on it? You can see an object’s attributes by calling dir() on it.
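Since dir() output is dominated by dunder methods, a small filter makes it easier to scan for something like a path attribute. A standard-library sketch; `public_attrs` is a hypothetical helper:

```python
def public_attrs(obj, contains=None):
    "Names from dir(obj) not starting with '_', optionally filtered by a substring."
    names = [name for name in dir(obj) if not name.startswith('_')]
    if contains is not None:
        names = [name for name in names if contains in name]
    # dir() sorts but does not de-duplicate what a custom __dir__ returns,
    # so drop repeats while keeping the sorted order
    return list(dict.fromkeys(names))
```

For example, `public_attrs(dbunch, 'item')` or `public_attrs(dbunch, 'path')` would narrow the listing to a handful of candidate attributes.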

Sure! There is no dbunch.name, and dbunch.path returns Path('.'). The available attributes are as follows:

```
['__class__',
 '__delattr__',
 '__dict__',
 '__dir__',
 '__doc__',
 '__eq__',
 '__format__',
 '__ge__',
 '__getattr__',
 '__getattribute__',
 '__getitem__',
 '__gt__',
 '__hash__',
 '__init__',
 '__init_subclass__',
 '__le__',
 '__lt__',
 '__module__',
 '__ne__',
 '__new__',
 '__reduce__',
 '__reduce_ex__',
 '__repr__',
 '__setattr__',
 '__setstate__',
 '__sizeof__',
 '__str__',
 '__subclasshook__',
 '__weakref__',
 '_default',
 '_device',
 '_dir',
 '_docs',
 '_xtra',
 'add',
 'add_na',
 'after_batch',
 'after_batch',
 'after_item',
 'after_item',
 'after_iter',
 'append',
 'argwhere',
 'as_item',
 'as_item_force',
 'attrgot',
 'before_batch',
 'before_batch',
 'before_iter',
 'bs',
 'c',
 'cat',
 'categorize',
 'chunkify',
 'clear',
 'concat',
 'copy',
 'count',
 'cpu',
 'create_batch',
 'create_batches',
 'create_item',
 'cuda',
 'cycle',
 'dataloaders',
 'dataloaders',
 'dataset',
 'decode',
 'decode',
 'decode',
 'decode_batch',
 'decodes',
 'default',
 'device',
 'device',
 'do_batch',
 'do_item',
 'drop_last',
 'encodes',
 'enumerate',
 'fake_l',
 'filter',
 'from_dblock',
 'fs',
 'get_idxs',
 'index',
 'indexed',
 'infer',
 'infer_idx',
 'init_enc',
 'input_types',
 'itemgot',
 'items',
 'items',
 'loaders',
 'loss_func',
 'map',
 'map_dict',
 'map_zip',
 'map_zipwith',
 'n',
 'n_inp',
 'n_inp',
 'n_subsets',
 'n_subsets',
 'new',
 'new_empty',
 'new_empty',
 'nw',
 'o',
 'offs',
 'one_batch',
 'order',
 'overlapping_splits',
 'overlapping_splits',
 'path',
 'pin_memory',
 'pop',
 'prebatched',
 'product',
 'randomize',
 'range',
 'reduce',
 'remove',
 'retain',
 'reverse',
 'rng',
 'sample',
 'set_as_item',
 'set_split_idx',
 'setup',
 'setups',
 'show',
 'show',
 'show_batch',
 'show_results',
 'shuffle',
 'shuffle',
 'shuffle_fn',
 'sort',
 'sorted',
 'split',
 'split_idx',
 'split_idx',
 'splits',
 'splits',
 'stack',
 'starmap',
 'subset',
 'subset',
 'sum',
 'tensored',
 'test_dl',
 'tfms',
 'timeout',
 'tls',
 'train',
 'train',
 'train',
 'train_ds',
 'train_setup',
 'transform',
 'types',
 'unique',
 'use_as_item',
 'val2idx',
 'valid',
 'valid',
 'valid',
 'valid_ds',
 'vocab',
 'weighted_databunch',
 'wif',
 'zip',
 'zipwith']
```