I created a quick-and-dirty example notebook a few weeks ago, just to show a working example. I did not actually see @johnri99’s notebook (did I miss it?), which I am sure is superior, but here is one for viewing. I also had trouble with the is_reg flag, hence the notebook.

Update

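I fixed it by adding .cuda() to the model. The error below shows the embedding weights as torch.FloatTensor (CPU) while the indices are torch.cuda.LongTensor, so the model had not been moved to the GPU:

mixedinputmodel = MixedInputModel(emb_szs, len(df.columns)-len(cat_vars),
                                  0.04, 1, [1000,500], [0.001,0.01], y_range=y_range).cuda()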

Original:

I’m getting this error as well. Did you find a solution?

Based on the Rossmann data:

mixedinputmodel = MixedInputModel(emb_szs, len(df.columns)-len(cat_vars), 0.04, 1, [1000,500], [0.001,0.01], y_range=y_range)

bm = BasicModel(mixedinputmodel, 'mixedInputRegression')

md = ColumnarModelData.from_data_frame(PATH, val_idx, df, yl.astype(np.float32), cat_flds=cat_vars, bs=128, test_df=df_test)

learn = StructuredLearner(md, bm)

learn.lr_find()

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/_functions/thnn/sparse.py in forward(cls, ctx, indices, weight, padding_idx, max_norm, norm_type, scale_grad_by_freq, sparse)

55

56 if indices.dim() == 1:

---> 57 output = torch.index_select(weight, 0, indices)

58 else:

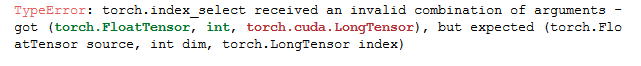

59 output = torch.index_select(weight, 0, indices.view(-1))

TypeError: torch.index_select received an invalid combination of arguments - got (torch.FloatTensor, int, torch.cuda.LongTensor), but expected (torch.FloatTensor source, int dim, torch.LongTensor index)

Do we have to explicitly convert categorical variables to the category dtype before passing them to our model? This conversion uses a lot of memory. I believe we do it primarily to calculate emb_szs, but emb_szs can be calculated without converting to categorical data as well. I am not sure, though, how PyTorch will treat these variables if we do not convert them. Has anyone tried this before?
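One way to picture the alternative is the minimal sketch below, written in plain Python rather than pandas so that it is self-contained. It maps each raw value to a contiguous integer code and derives the embedding size from the number of distinct values. The helper names `encode_column` and `emb_size` are hypothetical, the sizing rule is the `min(50, (c+1)//2)` heuristic quoted elsewhere in this thread, and reserving code 0 for unseen values is my own assumption. As far as I know, an embedding layer only needs integer indices smaller than its number of rows, so the category dtype itself should not matter to PyTorch.

```python
def encode_column(values):
    """Map raw values to integer codes 1..n; code 0 is reserved for unseen values."""
    mapping = {}
    codes = []
    for v in values:
        if v not in mapping:
            mapping[v] = len(mapping) + 1  # codes start at 1
        codes.append(mapping[v])
    return codes, mapping


def emb_size(n_codes, max_dim=50):
    """Heuristic from this thread: about half the cardinality, capped at max_dim."""
    return (n_codes, min(max_dim, (n_codes + 1) // 2))


store_type = ["a", "c", "a", "b", "c", "a"]
codes, mapping = encode_column(store_type)
n_codes = len(mapping) + 1  # +1 for the reserved 0 slot

print(codes)              # [1, 2, 1, 3, 2, 1]
print(emb_size(n_codes))  # (4, 2)
```

The same mapping would have to be reused for the validation and test sets so that the codes stay consistent.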

I’ve been reading through this thread and it seems the answer is somehow contained within; however, I still have had no luck getting binary classification working with a structured data set.

I am trying to identify a rare event (about 1% occurrence) in a time series data set. To make the dataset more balanced I’ve stripped out most of the non-occurrences so that it’s roughly 50/50 for the event occurring; however, my training set is now only 70,000 rows.

So my prediction should either be true or false. I’m using is_reg=False

md = ColumnarModelData.from_data_frame(PATH, val_idx, df, y1, is_reg=False, cat_flds=cat_vars, bs=64)

I initially tried setting my dep var to

y=[0, 0, 0, 1, 0, 0, 0, 1, 0....]

and out_sz = 1

m = md.get_learner(emb_szs, n_cont = len(df.columns)-len(cat_vars),
                   emb_drop = 0.04, out_sz = 1, szs = [1000, 500], drops = [0.001,0.01], use_bn = True)

but this throws

RuntimeError: cuda runtime error (59) : device-side assert triggered at /opt/conda/conda-bld/pytorch_1518244421288/work/torch/lib/THC/generic/THCTensorCopy.c:20

I then tried one-hot encoding my dep var and set out_sz = 2

y = [[0, 1], [0, 1], [1, 0], [0, 1] ..... ]

m = md.get_learner(emb_szs, n_cont = len(df.columns)-len(cat_vars),
                   emb_drop = 0.04, out_sz = 2, szs = [1000, 500], drops = [0.001,0.01], use_bn = True)

however that throws an error on

df ,y, nas, mapper = proc_df(model_samp, 'pred_label', do_scale=True)

AttributeError: Can only use .cat accessor with a 'category' dtype

I’ve tried setting my one hot encodings to both int64 and category types but both throw this same error.

I’m a little stumped on the correct way to set this up. I’m clearly missing something though.

I think you want the y = [0, 1, 1, 0, 1] format and out_sz = 2. I also had to use y.astype('int') in the ColumnarModelData call, so you might see if that helps.
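To make the target format concrete, here is a small sketch in plain Python (the helper names `one_hot_to_index` and `targets_in_range` are hypothetical) that turns one-hot rows into integer class indices. The assumption is that the loss expects one integer per row in the range 0 to out_sz-1, which would also explain why out_sz = 1 with a target of 1 fails: index 1 is out of range for a single output.

```python
def one_hot_to_index(rows):
    """Return the position of the 1 in each one-hot row."""
    return [row.index(1) for row in rows]


def targets_in_range(y, out_sz):
    """True if every target is a valid class index for out_sz outputs."""
    return all(0 <= t < out_sz for t in y)


y_one_hot = [[0, 1], [0, 1], [1, 0], [0, 1]]
y = one_hot_to_index(y_one_hot)

print(y)                       # [1, 1, 0, 1]
print(targets_in_range(y, 2))  # True
print(targets_in_range(y, 1))  # False
```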

For imbalance, I duplicated the rarer targets:

dfx = df[df['RESOLUTION'] < 10]

dfy = df[df['REJECTED'] < 10]

frames = [df, dfy, dfx, dfx, dfx, dfx, dfx, dfx, dfx, dfx, dfx, dfx]

df = pd.concat(frames)

And shuffled afterwards.
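The duplication step can be sketched without pandas as follows. This is only an illustration under my own assumptions: the helper `oversample` and the toy rows are hypothetical, and the repeat factor is arbitrary rather than the one used above. One caveat: it seems safer to split off the validation set before duplicating, otherwise copies of the same row can land on both sides of the split.

```python
import random


def oversample(rows, is_rare, extra_copies, seed=0):
    """Append extra_copies duplicates of every rare row, then shuffle."""
    rare = [r for r in rows if is_rare(r)]
    out = list(rows) + rare * extra_copies
    random.Random(seed).shuffle(out)
    return out


# Toy data: 100 rows, 10% of them positive.
rows = [{"id": i, "label": int(i % 10 == 0)} for i in range(100)]
balanced = oversample(rows, lambda r: r["label"] == 1, extra_copies=8)

n_pos = sum(r["label"] for r in balanced)
print(len(balanced), n_pos)  # 180 90
```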

My multiclass target combines rejected and resolution later in the data processing.

Does this example help?

Wow, I don’t know how I managed to miss that combination. Thank you. I’d just like to confirm my understanding of how fastai/PyTorch evaluates this model’s loss.

With

is_reg = False

y=[1, 0, 0, 0, 1]

out_sz=2

The last layer of the model outputs a rank-1 tensor of shape [2], one value for each of the two classes. Because is_reg=False, fast.ai applies log_softmax to that output:

# column_data.py
if not self.is_reg:
    if self.is_multi:
        x = F.sigmoid(x)
    else:
        x = F.log_softmax(x)

which takes those two values, rescales them so that each lies in [0, 1] and they sum to 1, and then takes the log, e.g.

softmax([3.4, 9.8]) -> [0.0017, 0.9983]

log_softmax([3.4, 9.8]) -> [-6.4017, -0.0017]

So if the target value is y = 1, is it treating 1 as the index i=1 into an array of 2 classes? Does it then compare -0.0017 to 1? Or does it take the log of 1 (the target) and compare -0.0017 to ln(1), which would give a loss of 0.0017?
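For what it is worth, my understanding is that the target is used as an index: the per-example negative log-likelihood is simply minus the log-softmax entry at the target position, so nothing is compared against 1 or ln(1). The sketch below works that out in plain Python for a two-class output. The helper names `log_softmax` and `nll` are my own and only mimic what I believe F.log_softmax followed by F.nll_loss compute for a single example.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of a list of raw scores."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_sum for x in logits]


def nll(log_probs, target):
    """Negative log-likelihood: pick the entry at the target index and negate it."""
    return -log_probs[target]


logits = [3.4, 9.8]
log_probs = log_softmax(logits)
probs = [math.exp(lp) for lp in log_probs]

print([round(p, 4) for p in probs])        # [0.0017, 0.9983]
print([round(lp, 4) for lp in log_probs])  # [-6.4017, -0.0017]
print(round(nll(log_probs, 1), 4))         # 0.0017 (target 1: confident and correct)
print(round(nll(log_probs, 0), 4))         # 6.4017 (target 0: confident and wrong)
```

Over a batch, these per-example values are averaged by default, which is the number reported as the loss.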

I just want to make sure I really understand what the loss calculation is telling me. Given the rarity of the event I’m trying to predict, I want to maintain a low false positive rate and high precision on the positive predictions, even at the cost of a higher false negative rate. That is, I’d rather miss 9 out of 10 events, so long as the one time it does predict an event I can be confident that it is highly likely to occur.

Thanks, this example is helpful. You mention at the end that perhaps this is not the best use of a neural network. What approach would you have used instead?

You can define a custom loss function. In this case I used a class-weighted negative log-likelihood loss:

def imbalanced_loss(inp,targ):
    return F.nll_loss(inp,targ,weight=T([.01,.99]))

learn = StructuredLearner(...)

learn.crit = imbalanced_loss

I have tested this out with the imbalanced TalkingData dataset from Kaggle (notebook). The results are not bad!
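To see what the class weights do, here is a plain-Python sketch of a weighted negative log-likelihood. It assumes the default mean reduction, where, as far as I understand PyTorch’s behaviour, each example’s loss is multiplied by the weight of its target class and the sum is divided by the total weight. `weighted_nll` is a hypothetical helper, not part of fastai or PyTorch, and the log-probabilities are made up.

```python
import math


def weighted_nll(log_probs, targets, weights):
    """Weighted mean of -log p(target), weighting each row by its class weight."""
    total = sum(weights[t] * -row[t] for row, t in zip(log_probs, targets))
    norm = sum(weights[t] for t in targets)
    return total / norm


# Three examples; each row holds log-probabilities for classes 0 and 1.
log_probs = [
    [math.log(0.9), math.log(0.1)],  # true class 0, predicted well
    [math.log(0.8), math.log(0.2)],  # true class 0, predicted well
    [math.log(0.7), math.log(0.3)],  # true class 1, predicted badly
]
targets = [0, 0, 1]

plain = weighted_nll(log_probs, targets, [1.0, 1.0])
weighted = weighted_nll(log_probs, targets, [0.01, 0.99])
print(round(plain, 4), round(weighted, 4))
```

With the [.01, .99] weights, the badly predicted rare example dominates the loss, so the weighted value is much larger than the unweighted mean.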

Interesting that you get good results with this approach. I have tried using weighted loss functions with the Kaggle credit card fraud dataset (which is extremely unbalanced) and found that, depending on the weighting, it either predicts far too many fraudulent transactions or none at all. It might be possible to find an optimum, but I couldn’t, and had to look at other approaches (in my case an autoencoder).

For information, I also tried oversampling the smaller class and undersampling the larger class to give even class numbers. Neither approach worked especially well, but oversampling seemed better; intuitively I think this is because you throw away less data.

Going back to the question raised, I think it is slightly confusing that PyTorch avoids one-hot encoding for the target passed to the loss function, while the model output has one column per class. I guess it’s pretty obvious why, but it is easy to get confused.

Regards

John

UPDATE: It turns out the system did not hang; for some reason it took about another hour after the epoch completed to produce the output.

Original:

Thank you for the excellent notebook. Always helpful to learn by example.

When I run your notebook, processing hangs when I fit the model. Any idea what’s happening here? I watched it work its way through the epoch, and after it finished it just hung instead of producing output. Low memory? I’m running on a 64 GB system. Let me know if I can help better define the problem on my end.

Have you tried data augmentation only on the smaller class to make it bigger? Perhaps that might also have nice results.

Yes, that was one of the approaches I tried. It produced the best results, but still nowhere near as good as the autoencoder. The issue is the tradeoff between precision and recall. Oversampling the small class to give the same overall numbers for both classes tends to catch almost all of the minority class (the fraudulent transactions) but also classifies many other transactions as fraudulent. To me, flagging genuine transactions as fraud is not quite as bad as missing actual fraud, but it’s not much better, since it is likely to result in customer frustration.

You can also adjust the weights of the two classes to trade precision against recall, but it’s difficult to get both to a good value.
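The tradeoff can be made concrete by sweeping the decision threshold on the predicted probabilities. The sketch below uses made-up scores and labels (not results from any dataset mentioned here) and a hypothetical helper `precision_recall`; raising the threshold trades recall for precision.

```python
def precision_recall(scores, labels, threshold):
    """Precision and recall when predicting positive for score >= threshold."""
    preds = [s >= threshold for s in scores]
    tp = sum(p and y == 1 for p, y in zip(preds, labels))
    fp = sum(p and y == 0 for p, y in zip(preds, labels))
    fn = sum((not p) and y == 1 for p, y in zip(preds, labels))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Made-up model outputs: probability of the rare class, and the true label.
scores = [0.95, 0.90, 0.80, 0.60, 0.55, 0.40, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 0, 1, 0]

for t in (0.5, 0.85):
    p, r = precision_recall(scores, labels, t)
    print(f"threshold={t}: precision={p:.2f}, recall={r:.2f}")
# threshold=0.5: precision=0.60, recall=0.75
# threshold=0.85: precision=1.00, recall=0.50
```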

Is there a way to see the values of the learned embeddings? I’ve been using EmbeddingModel/MixedInputModel and it’s mostly training, but I’d like to query the embedding model for the values.

I can see:

emb_model

and get:

EmbeddingModel(

(embs): ModuleList(

(0): Embedding(6183, 50)

but is there a way to feed in one of my categories into that layer 0 and get back the 50 length vector?

You can get the embedding vectors through model.embs.parameters().

Try something like:

embedsnp = list()
for param in model.embs.parameters():
    embedsnp.append(param.data.cpu().numpy())

I’m trying to understand the inputs to the MixedInputModel.forward method (x_cat and x_cont). Where is it specified that there will be two inputs? I’m interested in extending forward to take a third type of input: a sequence of integers, to each element of which I’ll apply an additional pre-computed embedding, then flatten and torch.cat the result with the continuous and categorical tensors. I thought the parameters expected by forward might be controlled by the __getitem__ method in ColumnarDataset. Any thoughts? Thanks for any insight.

For the MixedInputModel, what is the difference between emb_drop and drops? Both are passed to nn.Dropout:

self.emb_drop = nn.Dropout(emb_drop)

self.drops = nn.ModuleList([nn.Dropout(drop) for drop in drops])

Do these parameters essentially do the same thing? Would a model use only one of these, or is there a use case for having both types of dropout?

emb_drop applies dropout to the concatenated embedding outputs, while drops does the same for the output of each linear layer, so you can set the dropout level for each independently.

Hey, I am working on this structured data model for a completed Kaggle competition (Airbnb). I am having trouble getting get_learner to work. If anybody could look at the code and suggest how to move forward, that would be great.

Hello @shriram ,

I have a problem similar to yours, but my setup crashes one step later, when I call lr_find().

I use the proc_df() supplied with fast.ai to process my dataframe into a format that can be handled later. For that I use do_scale=True to scale my continuous data and ignore_flds= to ignore the categorical data. I reuse the mapper and the na_dict/nas from the train df on the test and val dfs (which I separated beforehand).

After that I create a MixedInputModel(...) and with that a basic model with bm = BasicModel(model, 'binary_classifier') (this approach is from https://github.com/KeremTurgutlu/deeplearning/blob/master/avazu/FAST.AI%20Classification%20-%20Kaggle%20Avazu%20CTR.ipynb by @kcturgutlu).

Next, I created the model data with md = ColumnarModelData.from_data_frames(...).

After that I can create the learner with learn = md.get_learner(...).

But then it crashes when I call learn.lr_find() with the following output:

...

~/fastai/courses/dl1/fastai/column_data.py in <listcomp>(.0)

113 def forward(self, x_cat, x_cont):

114 if self.n_emb != 0:

--> 115 x = [e(x_cat[:,i]) for i,e in enumerate(self.embs)]

116 x = torch.cat(x, 1)

117 x = self.emb_drop(x)

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

489 result = self._slow_forward(*input, **kwargs)

490 else:

--> 491 result = self.forward(*input, **kwargs)

492 for hook in self._forward_hooks.values():

493 hook_result = hook(self, input, result)

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/sparse.py in forward(self, input)

106 return F.embedding(

107 input, self.weight, self.padding_idx, self.max_norm,

--> 108 self.norm_type, self.scale_grad_by_freq, self.sparse)

109

110 def extra_repr(self):

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/nn/functional.py in embedding(input, weight, padding_idx, max_norm, norm_type, scale_grad_by_freq, sparse)

1062 [ 0.6262, 0.2438, 0.7471]]])

1063 """

-> 1064 input = input.contiguous()

1065 if padding_idx is not None:

1066 if padding_idx > 0:

RuntimeError: cuda runtime error (59) : device-side assert triggered at /opt/conda/conda-bld/pytorch_1525909934016/work/aten/src/THC/THCTensorCopy.cu:204

Maybe this helps you?

Maybe somebody has another hint on what is not working?

I also tried debugging with ipdb (from IPython.core.debugger import set_trace; set_trace(), see https://pythonconquerstheuniverse.wordpress.com/2009/09/10/debugging-in-python/) but so far I could not pinpoint the problem.

Best regards

Michael

EDIT:

I found a better way to start the debugger when the error occurs, using this helpful code (from https://stackoverflow.com/questions/242485/starting-python-debugger-automatically-on-error):

import pdb, traceback, sys

if __name__ == '__main__':
    try:
        learn.lr_find()  # <-- put the function that crashes here!
    except:
        extype, value, tb = sys.exc_info()
        traceback.print_exc()
        pdb.post_mortem(tb)

The problem in my case comes from the torch embedding function.

I created the embedding sizes with emb_szs = [(c, min(50, (c+1)//2)) for _,c in cat_sz] from the video. But the embedding sizes shouldn’t be a problem, right?
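One thing that may be worth checking (an assumption on my part, not a confirmed diagnosis of this case): a device-side assert inside the embedding lookup commonly means that some categorical code is negative or not smaller than the number of rows in its embedding table, for example when the validation or test data contains categories that were unseen when cat_sz was computed. The sketch below is a plain-Python check with hypothetical names (`find_bad_columns`, toy data) that compares each column’s code range with its embedding size. Running the failing call on the CPU instead of the GPU usually gives a more readable error as well.

```python
def find_bad_columns(columns, emb_szs):
    """Return (name, min_code, max_code, n_rows) for columns with out-of-range codes."""
    bad = []
    for (name, codes), (n_rows, _dim) in zip(columns.items(), emb_szs):
        lo, hi = min(codes), max(codes)
        if lo < 0 or hi >= n_rows:
            bad.append((name, lo, hi, n_rows))
    return bad


# Toy integer codes per categorical column (hypothetical data).
columns = {
    "store": [0, 3, 2, 1],
    "weekday": [1, 7, 4, 2],  # 7 is out of range for a table with 7 rows
}
cat_sz = [("store", 4), ("weekday", 7)]
emb_szs = [(c, min(50, (c + 1) // 2)) for _, c in cat_sz]

print(find_bad_columns(columns, emb_szs))  # [('weekday', 1, 7, 7)]
```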