@thunderingtyphoons @davecg I don’t think being on the East Coast is a totally debilitating handicap… If we book a room, we can easily keep a Skype call running at the same time, or just use private chat on the forums.

@thunderingtyphoons @davecg Indeed, but even if we don’t live-stream Friday’s session, I’m sure we can still collaborate if this interests you.

For example, we have a Slack channel for coordinating and sharing progress. I can invite you if you’d like; just send me an email address.

What time (PST) were you guys planning to meet, and what’s the focus? I’m super interested in style transfer and segmentation right now and would love to participate if time permits.

Count me in! I’m super excited about the recent paper on photorealistic style transfer. Anyone else want to try it together?

@xinxin.li.seattle @Even, send me your email address and I’ll add you to the Slack channel where we are coordinating the work.

Right now @Matthew is exploring Arbitrary Style Transfer, @sravya8 is working on Style Transfer For Videos, and I’m exploring segmentation.

Friday 12pm - 5pm at 101 Howard 5th floor study room.

Photorealistic style transfer is the one that interests me too. I’m going to start with the photorealism component of the loss function and see if I can figure out how to port it to Keras.
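For anyone else porting this: as I read the paper, the photorealism term is a quadratic penalty, the sum over color channels c of V_c[O]^T · M_I · V_c[O], where V_c[O] is channel c of the output flattened to a vector and M_I is the Matting Laplacian of the input image. Here’s a minimal pure-Python sketch of just that loss and its gradient (2 · M · V_c, since M is symmetric). The `chain_laplacian` helper is a hypothetical stand-in (a simple 1-D graph Laplacian), not the real Matting Laplacian, which is built from local 3×3 windows of the input; it only shares the property that a flat image gets zero penalty.

```python
# Sketch of the photorealism regularization term:
#   L_m = sum over color channels c of V_c^T M V_c
# ASSUMPTION: chain_laplacian() is a hypothetical stand-in for the Matting
# Laplacian of the input image (a 1-D path-graph Laplacian, NOT the real one).

def chain_laplacian(n):
    """Dense Laplacian of a path graph with n nodes."""
    m = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        m[i][i] += 1.0
        m[i + 1][i + 1] += 1.0
        m[i][i + 1] -= 1.0
        m[i + 1][i] -= 1.0
    return m

def mat_vec(m, v):
    """Dense matrix-vector product."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in m]

def photorealism_loss(channels, m):
    """Sum of V_c^T M V_c; channels is a list of flattened color channels."""
    total = 0.0
    for v in channels:
        mv = mat_vec(m, v)
        total += sum(vi * mvi for vi, mvi in zip(v, mv))
    return total

def photorealism_grad(channels, m):
    """Gradient w.r.t. each channel: 2 M V_c (valid because M is symmetric)."""
    return [[2.0 * x for x in mat_vec(m, v)] for v in channels]

if __name__ == "__main__":
    m = chain_laplacian(4)
    flat = [[0.5, 0.5, 0.5, 0.5]] * 3   # constant image: no penalty
    noisy = [[0.0, 1.0, 0.0, 1.0]] * 3  # alternating pixels: penalized
    print(photorealism_loss(flat, m))   # 0.0
    print(photorealism_loss(noisy, m))  # 9.0
```

In Keras the real M would have to be a sparse matrix, since it is N×N for an image with N pixels.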

I’m also going to dive back into the Wasserstein GAN paper implementations and see if I can get (or ideally find) earth mover’s distance working in TensorFlow. I feel like the insights there are a lot broader than just GANs, and they have a lot of applicability to a project I’m working on.

I’ve seen a few papers that minimize histogram differences to improve style transfer, and I’m curious whether they’d improve further by using earth mover’s distance instead.
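For what it’s worth, in 1-D the earth mover’s distance has a closed form: for two histograms over the same evenly spaced bins with equal total mass, it’s the sum of absolute differences between their cumulative sums, times the bin width. The sketch below (pure Python, helper names are my own) contrasts that with a plain squared histogram difference: a bin-wise L2 loss can’t tell a near miss from a far one, while EMD can. This closed form only covers the 1-D case; in general EMD is an optimal-transport problem, and the WGAN paper estimates it through the Kantorovich-Rubinstein dual with a learned critic.

```python
# 1-D earth mover's distance between histograms via cumulative sums.
# Assumes both histograms share the same evenly spaced bins; each is
# normalized to total mass 1 first. Helper names are hypothetical.
from itertools import accumulate

def normalize(hist):
    total = float(sum(hist))
    return [h / total for h in hist]

def emd_1d(p, q, bin_width=1.0):
    """EMD in 1-D = bin_width * sum of |CDF_p - CDF_q| over the bins."""
    cdf_p = accumulate(normalize(p))
    cdf_q = accumulate(normalize(q))
    return bin_width * sum(abs(a - b) for a, b in zip(cdf_p, cdf_q))

def l2_hist_diff(p, q):
    """Plain bin-wise squared difference, for comparison."""
    return sum((a - b) ** 2 for a, b in zip(normalize(p), normalize(q)))

if __name__ == "__main__":
    ref = [1, 0, 0, 0, 0]
    near = [0, 1, 0, 0, 0]  # mass shifted by one bin
    far = [0, 0, 0, 0, 1]   # mass shifted by four bins
    print(l2_hist_diff(ref, near), l2_hist_diff(ref, far))  # 2.0 2.0
    print(emd_1d(ref, near), emd_1d(ref, far))              # 1.0 4.0
```

Per color channel, the same computation should map onto `tf.cumsum`, `tf.abs` and `tf.reduce_sum` in TensorFlow (untested).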

I’m thinking of starting at 10am. Five hours doesn’t seem like enough time to implement this…

Even better. 10am then. I’ll see if I can find a room. We’re going to need a healthy head start.

Have you had any success running the code from the paper (Deep Photo Style Transfer)?

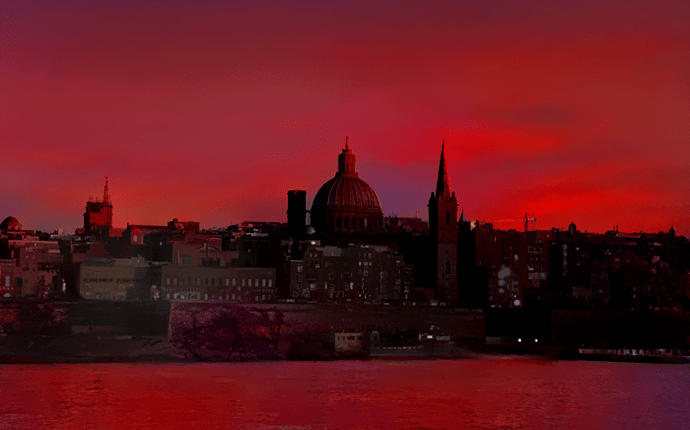

I took a look at this after your post. It was a journey… The results are good though:

I don’t think he used Linux or LuaJIT. I had to:

- Use an alternative Matting Laplacian implementation (https://github.com/luanfujun/deep-photo-styletransfer/issues/24)

- Reinstall Torch with Lua 5.2 because of LuaJIT’s memory limits (http://torch.ch/docs/getting-started.html)

- Rewrite part of cuda_utils.cu to avoid luaL_openlib, which isn’t available in Lua 5.2 (http://stackoverflow.com/questions/19041215/lual-openlib-replacement-for-lua-5-2)

Here is an older Kaiming He paper that might offer a faster alternative to calculating the Matting Laplacian.

I agree. This is the one I was looking at, although there’s an AVPR17 paper that’s more accurate.

Wow! Impressive work. Up for a hackathon this weekend?

Thank you for sharing. Did you manage to run the code on custom images/masks?

I just ran it on the first input+target from his examples.

Which paper is that? I found this alternative Matting Laplacian repo. They all seem to be done in MATLAB; is there a reason nobody has implemented it in Python?

I’m thinking about implementing Deep Photo Style Transfer in Keras, building on lesson 8. It seems to me the changes are threefold; do you think I missed anything?

- Matting Laplacian

- Semantic segmentation (the paper used DilatedNet’s code)

- Augmented style loss function
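On the third point, here is my reading of the augmented style loss: for each segmentation class c, multiply the layer’s feature maps by that class’s mask (the input image’s segmentation for the output, the style image’s segmentation for the style), compute one Gram matrix per class, and sum the per-class Gram differences. A pure-Python sketch under a few assumptions: masks are already resized to the feature map’s resolution, features are lists of channels of flattened pixels, and the 1/(2N²) normalization (N = number of feature channels) is my placeholder, not necessarily the paper’s exact constant.

```python
# Sketch of a segmentation-augmented style loss (my reading of the paper).
# ASSUMPTIONS: masks already match the feature-map resolution; features are
# lists of channels, each a flat list of pixel values; the 1/(2*N^2)
# normalization is a placeholder.

def gram(features):
    """Gram matrix: G[i][j] = dot product of channels i and j."""
    n = len(features)
    return [[sum(a * b for a, b in zip(features[i], features[j]))
             for j in range(n)] for i in range(n)]

def apply_mask(features, mask):
    """Multiply every channel pixel-wise by one segmentation mask."""
    return [[f * m for f, m in zip(channel, mask)] for channel in features]

def augmented_style_loss(out_feats, style_feats, content_masks, style_masks):
    """Sum over classes c of the squared Gram difference restricted to c.

    content_masks[c] masks the output (it is aligned with the input image);
    style_masks[c] masks the style image for the same semantic class.
    """
    n = len(out_feats)
    total = 0.0
    for m_out, m_style in zip(content_masks, style_masks):
        g_out = gram(apply_mask(out_feats, m_out))
        g_style = gram(apply_mask(style_feats, m_style))
        sq = sum((g_out[i][j] - g_style[i][j]) ** 2
                 for i in range(n) for j in range(n))
        total += sq / (2.0 * n * n)
    return total

if __name__ == "__main__":
    # One feature channel, two pixels: pixel 0 is "sky", pixel 1 is "ground".
    style = [[1.0, 0.0]]
    out = [[0.0, 1.0]]  # same global statistics, but in the wrong region
    masks = [[1.0, 0.0], [0.0, 1.0]]
    print(augmented_style_loss(out, style, [[1.0, 1.0]], [[1.0, 1.0]]))  # 0.0
    print(augmented_style_loss(out, style, masks, masks))                # 1.0
```

The toy case is the motivation for the masks: with a single all-ones mask (plain Gram loss) the swapped output looks perfect, while the per-class loss catches that the style landed in the wrong region.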

Nope, I didn’t try to run the code from the paper. Did you? Did you try it on custom images/masks?

Nice! Let me know your progress as I will be working on that part too.

In the paper’s implementation details section, the author suggests a two-stage optimization rather than solving for the overall loss function directly (“This two-stage optimization works better than solving for Equation 4 directly, as it prevents the suppression of proper local color transfer due to the strong photorealism regularization”). It seems very costly to me, and I wonder whether we really need the two-stage optimization. What do you think?
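To make sure I understand the schedule, here’s a toy version (my reading of the paper and repo, not the authors’ code): stage 1 minimizes only a data term standing in for the segmented style and content losses; stage 2 starts from the stage-1 result and adds a λ-weighted smoothness term standing in for the Matting Laplacian regularizer. `data_grad`, `smooth_grad`, `lam`, the learning rate and the step counts are all hypothetical.

```python
# Toy two-stage optimization schedule (hypothetical stand-ins throughout):
#   stage 1: minimize sum_i (x_i - t_i)^2            (stand-in for style+content)
#   stage 2: start from stage 1, minimize that + lam * sum_i (x_i - x_{i+1})^2
#            (second term is a stand-in for the photorealism regularizer)

def data_grad(x, target):
    """Gradient of sum_i (x_i - t_i)^2."""
    return [2.0 * (xi - ti) for xi, ti in zip(x, target)]

def smooth_term(x):
    """Sum of squared differences between neighboring entries."""
    return sum((x[i] - x[i + 1]) ** 2 for i in range(len(x) - 1))

def smooth_grad(x):
    """Gradient of smooth_term."""
    g = [0.0] * len(x)
    for i in range(len(x) - 1):
        d = x[i] - x[i + 1]
        g[i] += 2.0 * d
        g[i + 1] -= 2.0 * d
    return g

def descend(x, grad_fn, lr=0.05, steps=500):
    """Plain gradient descent with a fixed step size."""
    for _ in range(steps):
        x = [xi - lr * gi for xi, gi in zip(x, grad_fn(x))]
    return x

def two_stage(target, lam=1.0):
    x0 = [0.0] * len(target)
    stage1 = descend(x0, lambda x: data_grad(x, target))
    # Stage 2 is initialized from the stage-1 result, not from scratch.
    stage2 = descend(stage1, lambda x: [a + lam * b for a, b in
                                        zip(data_grad(x, target), smooth_grad(x))])
    return stage1, stage2

if __name__ == "__main__":
    s1, s2 = two_stage([0.0, 1.0, 0.0, 1.0])
    print(smooth_term(s1), smooth_term(s2))
```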

First, let’s cover our bases. Get the code from the repo working, then we can take the conversation from there.

OT: @ibarinov, I just noticed you’ve got an app doing artistic filters! https://itunes.apple.com/US/app/id1208272322?mt=8 How’s it going? Are you thinking of implementing some of these new techniques? That would be pretty great…